How to Understand Consensus Forecasting Methods vs Statistical Forecasting Methods

Executive Summary

- Statistical forecasts are typically combined with judgment-based input from sales and marketing to arrive at a consensus forecast.

- We compare these different forecasting approaches.

Introduction to Consensus Forecasting

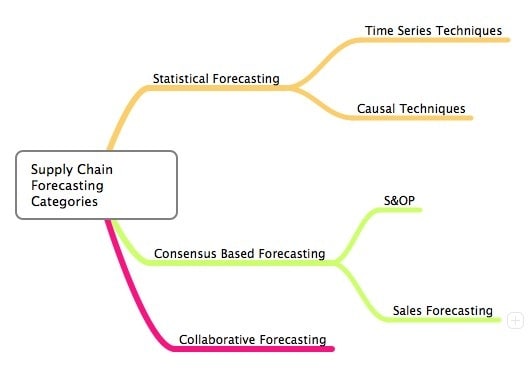

There are three categories of methods for obtaining forecast input from different people that apply to supply chain forecasting:

- Consensus forecasting

- Manual overrides to statistical forecasting systems

- Collaborative forecasting

See our references for this article and related articles at this link.

The Conventional Wisdom

Presently, there is a general concept at play that getting more people involved in and adjusting the forecast will improve the forecast accuracy. This is partly a holdover from consensus forecasting, which requires many people to produce a forecast. However, the lessons from both practical observation and the research are that restricting access to adjusting the forecast is one of the most critical components of improving forecast accuracy.

This is discussed in my book Supply Chain Forecasting Software, and I have included a quote below from the book:

“Many stories on CBF seem to center around just getting more people to participate, as if un-moderated participation has been proven to generate high quality forecasts. The real story about CBF is considerably more complicated. In fact, CBF is very much a process of receiving input and then performing analytical filtering to remove or reduce the impact of individuals or groups with poor forecasting accuracy. This part of CBF is under-emphasized, probably because it’s not as “feel good” of a story, and the next logical question is “who is going to get their input reduced?” It also brings up the question of how this topic is raised during the implementation of CBF projects.”

Important Rules Around Consensus Forecasting – CBF

What applies to consensus forecasting also applies to the other two methods listed above.

Not all manual forecast adjustments are created equal. Some groups, as well as some specific individuals, tend to have better forecast accuracy than others. Secondly, the most effective manual forecast adjustments are those in which the forecast is significantly reduced. Michael Gilliland explains a research study on this topic:

“Robert Fildes and Paul Goodwin from the UK reported on a study of 60,000 forecasts at four supply chain companies and published the results in the Fall 2007 issue of Foresight: The International Journal of Applied Forecasting. They found that about 75 percent of the time, the statistical forecasts were manually adjusted – meaning that 45,000 forecasts were changed by hand! Perhaps the most interesting finding was that small adjustments had essentially no impact on forecast accuracy. The small adjustments were simply a waste of time. However, big adjustments, particularly downward adjustments, tended to be beneficial.”
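A company can run a rough version of this analysis on its own adjustment history. The sketch below is only an illustration and not the method used in the study: the 10 percent cutoff between "small" and "large" adjustments, the function names, and the sample records are all assumptions made for the example. It groups each adjustment by size and direction and reports the average reduction in absolute error relative to the untouched statistical forecast.

```python
from collections import defaultdict


def classify(stat, final, small_threshold=0.10):
    """Label an adjustment by relative size and direction.

    Assumes stat > 0. The 10% threshold is an assumed cutoff, not a standard.
    """
    change = (final - stat) / stat
    if change == 0:
        return "none"
    size = "small" if abs(change) < small_threshold else "large"
    direction = "up" if change > 0 else "down"
    return f"{size}_{direction}"


def improvement_by_bucket(records):
    """Mean reduction in absolute error per bucket (positive = adjustment helped)."""
    gains = defaultdict(list)
    for stat, final, actual in records:
        gain = abs(stat - actual) - abs(final - actual)
        gains[classify(stat, final)].append(gain)
    return {bucket: sum(v) / len(v) for bucket, v in gains.items()}


# Hypothetical (statistical forecast, adjusted forecast, actual demand) records.
records = [
    (100, 104, 102),  # small upward adjustment
    (100, 96, 98),    # small downward adjustment
    (100, 60, 65),    # large downward adjustment
    (100, 140, 105),  # large upward adjustment
    (200, 150, 160),  # large downward adjustment
]

result = improvement_by_bucket(records)
for bucket, gain in sorted(result.items()):
    print(f"{bucket}: mean error reduction {gain:+.1f}")
```

The useful part is the bucketing, not the made-up numbers: once real adjustments are split this way, it becomes visible which kinds of adjustments are worth the planners' time.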

Collaborative forecasting success tends to be strongly related to the percentage of business the collaborating company represents to its partner. Partners with less at stake tend not to put effort into providing timely and beneficial forecasts.

Dealing with Reality

This is a hard pill to swallow, but not all people in the organization who touch the forecast are trying to improve its accuracy or have the domain expertise to do so. It is surprising how many companies allow so much manual adjustment of the forecast without tracking the impact of those adjustments on forecast accuracy (although, to be fair, many applications do not make this a focal point; one of the few that does is Right90, which specializes in sales forecasting software). The goal should not simply be to increase the number of participants but to include only those members who add value to the forecasting process.

Consensus Forecasting and Judgement Forecasting Methods and Bias

For those of you who have read other posts on this blog, you will know I am a big fan of the book Demand-Driven Forecasting by Charles Chase. Charles has the following things to say about consensus forecasting vs statistical forecast methods.

Judgement methods are not as robust as quantitative methods when it comes to sensing and predicting the trend, seasonality and cyclical elements… Unfortunately judgement techniques are still the most widely used forecasting methods in business today. Such methods are applied by individuals or committees in a consensus process to gain agreement and make decisions… However, judgmental methods tend to be biased toward the individual or the committee developing the forecast. They are not consistently accurate over time due to their subjective nature… However, over my 20 years as a forecasting practitioner, I have found that quantitative methods have been proven to outperform judgement methods 90 percent of the time due to the structured unbiased approach.

This statement is supported by research, which is rarely discussed in the industry.

Statistical Methods and Bias

Statistical methods have less bias.

Many recent research studies demonstrate that statistical methods are preferable to consensus forecasting or judgment forecasting methods when demand history exists. This information from the research does not seem to reach the industry effectively, as many companies are pinning their hopes on consensus forecasting without having a plan for adjusting for the inherent bias in judgment methods.

The Issue with Political Management of Bias Control

Forecasting is an intensely political activity at most companies. Let me rephrase. Forecasting is an intensely political activity at all companies. However, some companies are able to keep political bias from entering the forecast, while most are not.

Whose Interests are Reflected in the Consensus Forecast?

The fact that different groups in a company have different incentives is well documented. These incentives can cause the forecast to be higher or lower than is rational. The issue is that while this is known, not enough companies do enough to reduce this known bias. Those who control the final forecast must understand that, as pointed out by Michael Gilliland, not every person who adjusts the forecast has forecast accuracy as an objective.

Some want a lot of stock available in the system.

The Focus of Forecasting in Books

If you read most forecasting books, they tend to focus very heavily on the mechanics of forecasting. They discuss forecasting methodologies (simple exponential smoothing, regression, etc.). However, not enough get into the business process or how forecasts are used in real life.

When I was younger and less experienced and read these types of books, I came away with the impression that forecasting was mainly about following a rational process and selecting your algorithm. That way of thinking misses an entire forecasting dimension, which typically exists in the manual overrides to the system.

This emphasis is getting even more lopsided. This is because the current concept or trend in forecasting is that statistical methodologies are less critical to the forecast than creating a consensus forecast that incorporates many inputs from within the company and collaborative forecasting, which includes the forecast input from external business partners.

Errors in Cognition

It is common in any area for the past to be misinterpreted and for a new “paradigm” to arise based upon an incomplete analysis of history. Two areas of forecasting history that have been misinterpreted in recent years are the following:

- Statistical Forecasting: There was never any evidence that sophisticated algorithms would significantly increase forecast accuracy. Vendors and consultants pushed this idea on the industry.

- Consensus Forecasting: There has never been evidence that statistical forecasting methods provide poor output compared to consensus and collaborative forecasting methods.

The Origins of this Myth on Consensus Forecasting

These two myths are at the heart of the recent trend in forecasting, which is to try to get more inputs into the forecast. The first myth arose from the incentives of software companies and consultants to win business. These groups convinced the industry to invest in complex software with the promise of better forecasts but without proving that these more complex solutions would improve forecasts.

The second myth is related to the first myth.

The argument goes that since the more advanced methods did not improve the forecast, those statistical methods are now less important than getting more groups to have input into the forecast.

Why Political Bias is Unmeasured

The problem with the current myth is that it does not account for political bias. Getting more inputs on the forecast does not address the incentives of these groups. It also tends to assume that all the groups forecast at a similar accuracy, which cannot be true. Even if different groups had equivalent forecasting capabilities, their incentive structures would skew the resulting forecast to be consistently above or below their actual forecast capability.

The current concept regarding collaborative and consensus forecasting is not wrong, but it is incomplete, which can lead to the same outcome as being wrong. The issue is one of measurement. That is, the accuracy of all the groups providing their forecasts to the process must be measured. Over time, the groups with more accurate forecasts should be weighted more heavily than those with a poorer record. This would seem to be the best way to manage the political dimension of the forecasting process.

Of course, simply instituting a forecasting process where poorer performing forecasting groups have their weighting decreased is itself a political activity.
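One simple way to express this kind of weighting is to make each group's weight inversely proportional to its historical error. The sketch below is only one possible scheme, not a standard or a vendor's method: the choice of MAPE as the error measure, the inverse-error rule, the function names, and the group data are all assumptions for illustration.

```python
def mape(forecasts, actuals):
    """Mean absolute percentage error as a fraction (assumes actuals > 0)."""
    return sum(abs(f - a) / a for f, a in zip(forecasts, actuals)) / len(actuals)


def inverse_error_weights(history, actuals):
    """Weight each group by 1 / MAPE, normalized so the weights sum to 1."""
    inverse = {
        group: 1.0 / max(mape(forecasts, actuals), 1e-9)  # guard against zero error
        for group, forecasts in history.items()
    }
    total = sum(inverse.values())
    return {group: value / total for group, value in inverse.items()}


def weighted_consensus(inputs, weights):
    """Combine the current-period inputs using the accuracy-based weights."""
    return sum(weights[group] * value for group, value in inputs.items())


# Hypothetical track record: past forecasts by group against the same actuals.
actuals = [100, 100]
history = {
    "sales": [120, 120],        # consistently high
    "operations": [110, 90],
    "statistical": [105, 95],
}
weights = inverse_error_weights(history, actuals)

# Hypothetical inputs for the period being forecast.
inputs = {"sales": 130, "operations": 100, "statistical": 100}
consensus = weighted_consensus(inputs, weights)
print({g: round(w, 3) for g, w in weights.items()}, round(consensus, 2))
```

With a scheme like this, no group is excluded outright; a group with a poor record simply moves the consensus number less until its measured accuracy improves.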

The Popularity of Consensus Forecasting

When you focus on a topic, it can be surprising to find that it is far less prevalent than you imagined. In my work as a supply chain consultant, I frequently hear the term consensus forecasting used.

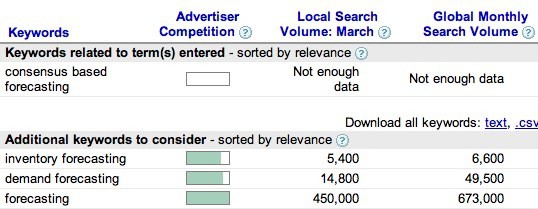

I have written articles on how the pendulum has swung from overemphasizing statistical methods to overemphasizing consensus forecasting. So, imagine my surprise when I performed a search in Google Adwords Keyword Search Tool and found a volume level so low that Google does not measure it. See the image below, which shows how CBF scored.

The next closest term, which is collaborative forecasting, is far more popular and about equal in popularity to the term “sales and operations planning.” Of course, collaborative forecasting is not the same as CBF, but the concept is similar. There can be confusion among different supply chain terms, so it is possible that some people meant CBF when they typed collaborative forecasting into Google.

More Evidence on LinkedIn

Another example of CBF’s low profile in public consciousness is the LinkedIn groups related to the topic. On LinkedIn, there are two groups for people who want to keep up with collaborative forecasting.

These two groups have a combined membership of 127 members. The only group on LinkedIn for CBF is the one I started a few weeks ago, which has only three members, including me.

Consensus Forecasting Books?

I was checking in on the consensus forecasting group I created on LinkedIn and noticed that I did not have a single request to be added to the group after roughly four months. Other groups I have created do tend to get requests to be added. I next decided to check Amazon.com for books on consensus forecasting, as this tends to provide insight into how mature a topic is and how much it is on people’s radar.

How Important is Consensus Forecasting Again?

I find it very strange that I work with different clients where many people say how important consensus forecasting is, and yet there are no books directly on the topic. Some articles declare that statistical forecasting is no longer where the focus should be and that most of the real potential for improving forecast accuracy resides in consensus forecasting.

I don’t know if this is true, and I have never seen any research to substantiate the claim. The claim makes no sense because each forecasting category provides opportunities for improvement, and I don’t know how one would begin to quantify where the majority of opportunity resides. However, I bring this up to demonstrate that consensus forecasting is certainly on people’s minds.

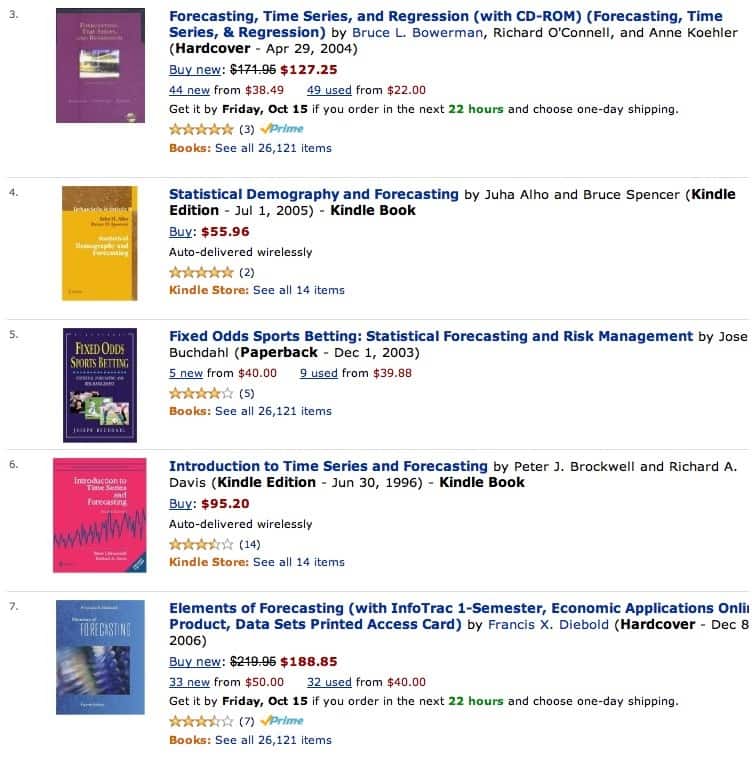

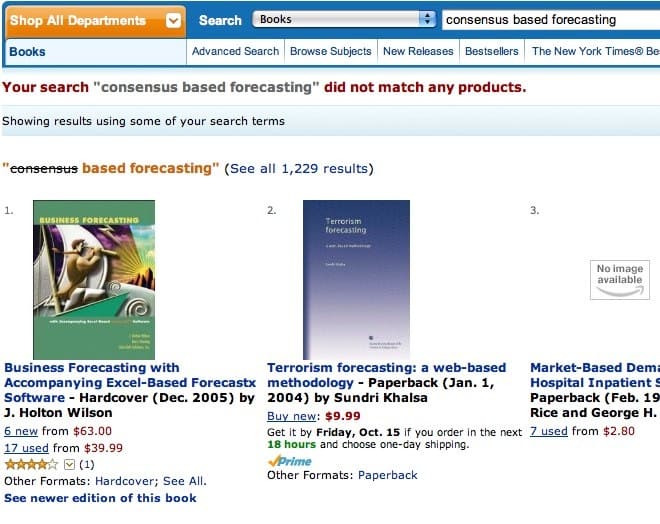

Below are the books related to consensus forecasting.

Interestingly, S&OP forecasting, a type of forecasting that is a subset of consensus forecasting, has quite a few titles on Amazon.com.

The mind map of forecasting and how the different categories are related is listed below.

There is no shortage of books on statistical forecasting. Here you can see the number of books on statistical methods; in fact, statistical forecasting methods dominate the forecasting category.

Does This Mean that Consensus Forecasting is Not Covered?

No, it does not, but it is covered lightly. Consensus forecasting is covered here and there, as several forecasting books have some coverage of it. In several that I checked, consensus forecasting was only briefly described in a half-page summary before the book moved on to another topic, such as collaborative forecasting.

Important information about the organizational challenges and the controls related to forecasting quality is often left out. There is quite a lot more to successful consensus forecasting than simply obtaining the forecast input from the right parties.

The Solution

The business community must appreciate how crucial specialized consensus forecasting software is to support their process before the vendors that provide the best solutions in this space can take off. So, collectively, the vendors and consulting companies need to begin to raise the profile of consensus forecasting software so that companies can learn about the great tools in this space.

After an objective has been set, the next question is always where to allocate time and resources. In my view, much of the emphasis in education should be on explaining that success with consensus-based forecasting should not be expected from standard demand planning software that was never designed for the task.

What is One of the Most Surprising Things About Collaborative Forecasting?

Many forecasts that are shared between companies are never used.

Ways of Performing Collaborative Forecasting

- With documents (EDI, XML, Excel)

- With collaborative applications (SAP SNC, E2Open, etc.)

The Depressing News About Consensus Forecasting

Consensus forecasting is a significant focus area that many companies want to implement in the coming years. However, whenever a major initiative is undertaken, it’s crucial to analyze the history of the software type to be implemented and, at the same time, to read “The Fortune Sellers,” a book that takes a very realistic view of forecasting effectiveness in multiple areas, such as weather forecasting and financial forecasting.

The book does not focus on supply chain planning forecasting but still has many lessons that can be generalized to the supply chain space. The book says the following about research into the improvement expected from consensus forecasting methods.

Consensus forecasts offer little improvement. Averaging faulty forecasts does not yield a highly accurate prediction. Consensus forecasts are theoretically slightly more accurate than the predictions of individual forecasters by only a few percentage points, due to the average effect that evens out the egregious errors that individual forecasters periodically make. But consensus forecasts are no more likely to predict key turning points in the economy than the individual forecasts on which they are based, and the few extra points of accuracy gained by averaging do not necessarily make them superior to the naive forecast. – William A. Sherden
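The averaging effect described in this quote can be checked with a few lines of arithmetic. The sketch below uses invented numbers: the demand history and the three forecasters, all of whom are assumed to share an upward bias, are hypothetical. It compares the mean absolute error (MAE) of each forecaster, of their simple average, and of a naive forecast that repeats the previous period's actual. By the triangle inequality, the MAE of the averaged forecast can never exceed the average of the individual MAEs, but nothing guarantees that it beats the naive forecast.

```python
def mae(forecasts, actuals):
    """Mean absolute error."""
    return sum(abs(f - a) for f, a in zip(forecasts, actuals)) / len(actuals)


# Hypothetical demand history; the last four periods are the ones forecast.
history = [100, 104, 98, 103, 99]
actuals = history[1:]
naive = history[:-1]  # naive forecast: last period's actual

# Hypothetical forecasters who all lean high (a shared bias).
panel = {
    "A": [110, 104, 108, 106],
    "B": [108, 102, 110, 104],
    "C": [106, 106, 106, 102],
}

# Consensus as the simple average of the panel, period by period.
consensus = [sum(period) / len(period) for period in zip(*panel.values())]

individual_maes = {name: mae(f, actuals) for name, f in panel.items()}
consensus_mae = mae(consensus, actuals)
naive_mae = mae(naive, actuals)

print("individual:", individual_maes)
print("consensus:", consensus_mae, "naive:", naive_mae)
```

With these particular numbers, averaging gains nothing: because every forecaster errs in the same direction, no errors cancel, and the consensus MAE of 5.0 is still worse than the naive forecast's 4.75.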

I will have to research why this is the case myself, and the findings will go into a future book on the history of supply chain planning. However, this is consistent with other research into the Delphi method, which the RAND Corporation designed.

How Executives Increase Demand Forecasting Error

There are several ways in which executives reduce demand forecasting accuracy. They include the following:

- Directly reducing accuracy by adjusting the demand forecast themselves.

- Setting unrealistic forecast goals, which causes inaccuracies in forecast measurement.

- Selecting inappropriate demand forecasting software and marginalizing the users in the software selection process.

- Not hiring the right people or spending the money necessary to bring expert forecasting knowledge into their companies. This connects to poor software selection: the company cannot properly differentiate between various vendor claims and, therefore, often purchases software primarily based on the brand.

Executive Forecast Adjustment

While the present concept in companies is that getting more people to touch the forecast is a good thing, the large body of research and practical experience on forecasting input shows that only individuals with the combination of domain expertise and limited bias should be allowed to adjust the demand forecast. Individuals need to earn the right to adjust the forecast, and until they do, their input should be sandboxed.

The input is taken and analyzed over time to see what effect it would have had on forecast accuracy. If the individual’s input would have improved the demand forecast, then after enough observational time, they can be allowed to adjust the forecast. Their input must still be monitored over time to ensure it stays high-quality.
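A sandbox of this kind does not need much machinery. The following is a minimal sketch under stated assumptions, not a description of any particular application: the class name, the twelve-period observation window, and the rule "average error reduction must be positive" are all hypothetical choices. Each proposed adjustment is logged alongside the statistical forecast and the eventual actual, but it is not applied to the live forecast until the contributor's record justifies it.

```python
from dataclasses import dataclass, field


@dataclass
class SandboxedContributor:
    """Tracks what a contributor's adjustments would have done, without applying them."""

    name: str
    min_observations: int = 12  # assumed observation window before rights are reviewed
    gains: list = field(default_factory=list)

    def record(self, stat_forecast, proposed_forecast, actual):
        """Log the error reduction the proposal would have delivered (positive = better)."""
        gain = abs(stat_forecast - actual) - abs(proposed_forecast - actual)
        self.gains.append(gain)

    def may_adjust(self):
        """Grant adjustment rights only after enough history with a net improvement."""
        if len(self.gains) < self.min_observations:
            return False
        return sum(self.gains) / len(self.gains) > 0


# Hypothetical usage with a shortened three-period window.
planner = SandboxedContributor("regional planner", min_observations=3)
planner.record(stat_forecast=100, proposed_forecast=90, actual=88)
planner.record(stat_forecast=100, proposed_forecast=95, actual=97)
planner.record(stat_forecast=100, proposed_forecast=110, actual=104)
print(planner.name, "may adjust:", planner.may_adjust())
```

Because `may_adjust` is recomputed from the full log, continuing to call `record` after rights are granted also covers the ongoing monitoring: a contributor whose adjustments stop helping loses the right again.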

While they can change forecasts, executives are not experts in product demand and tend to have a strong bias. This means that they are not good candidates to provide input to the forecast. Executive input has been tested over time and has not been found to improve the forecast.

Michael Gilliland describes this in “Worst Practices in Forecasting.”

“This style of report should be easy to understand. We see that the overall process is adding value compared to the naïve model, because in the bottom row the approved forecast has a MAPE of 10 percentage points less than the MAPE of the naïve forecast. However, it also shows that we would have been better off eliminating the executive review step, because it actually made the MAPE five percentage points worse than the consensus forecast. It is quite typical to find that executive tampering with a forecast just makes it worse.”
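A report of the kind described in the quote can be assembled from the forecasts saved at each step of the process. The sketch below is an assumption-laden illustration rather than Gilliland's actual report: the step names, the function names, and the numbers are hypothetical, with the numbers chosen only so that the pattern matches the one in the quote (approved forecast 10 points better than naive, executive review 5 points worse than consensus).

```python
def mape(forecasts, actuals):
    """Mean absolute percentage error in percent (assumes actuals > 0)."""
    return 100.0 * sum(abs(f - a) / a for f, a in zip(forecasts, actuals)) / len(actuals)


def stairstep_report(steps, actuals):
    """Build one row per process step.

    steps: ordered list of (name, forecasts); the first entry is the naive baseline.
    vs_naive and vs_previous are MAPE reductions in percentage points (positive = better).
    """
    rows = []
    naive_mape = None
    previous_mape = None
    for name, forecasts in steps:
        step_mape = mape(forecasts, actuals)
        if naive_mape is None:
            naive_mape = step_mape
        rows.append({
            "step": name,
            "mape": step_mape,
            "vs_naive": naive_mape - step_mape,
            "vs_previous": None if previous_mape is None else previous_mape - step_mape,
        })
        previous_mape = step_mape
    return rows


# Hypothetical forecasts captured at each step for the same four periods.
actuals = [100, 100, 100, 100]
steps = [
    ("naive", [130, 70, 130, 70]),
    ("statistical", [120, 80, 120, 80]),
    ("consensus", [115, 85, 115, 85]),
    ("executive approved", [120, 80, 120, 80]),
]

report = stairstep_report(steps, actuals)
for row in report:
    print(row)
```

The key design point is that every intermediate forecast has to be stored, not just the final approved number; without the consensus forecast on file, the effect of the executive review step cannot be isolated.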

Who can tell executives that their input does not improve demand forecasting? Their employees won’t tell them, and consultants won’t tell them because they want to sell them more services; criticizing executives is not the way to accomplish that goal. Criticizing people lower in the organization, however, is acceptable. The failure of poorly selected software, which was never a good fit for the company, is typically blamed on users being “resistant to change.” Therefore, executive input into the forecast continues.

Setting Unrealistic Goals

Because companies don’t baseline their forecasts against the naive forecast, they don’t know what good forecasting accuracy is for their products. Executives tend to be type-A personalities who want improvement. What should the improvement goal be? Arbitrary goals don’t work very well, as Michael Gilliland of SAS described in “Worst Practices in Forecasting.”

“Your goal is now set to 60 percent forecast accuracy or you will be fired. So what do you do next? Given the nature of the behavior you are asked to forecast – the tossing of a fair coin – your long-term forecast accuracy will be 50 percent, and it is impossible to consistently achieve 60 percent accuracy. Under these circumstances, your only choices are to resign, stay around and get fired, or figure out a way to cheat!”
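The coin-toss ceiling in this quote is easy to confirm with a short simulation. The sketch below is illustrative only; the function name, the number of tosses, and the seed are arbitrary assumptions. Whatever guessing rule is used, the long-run hit rate against a fair coin settles near 50 percent, which is why a 60 percent target cannot be met honestly.

```python
import random


def coin_toss_accuracy(n_tosses, seed=0):
    """Fraction of fair coin tosses that a random guesser calls correctly."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    hits = 0
    for _ in range(n_tosses):
        guess = rng.choice("HT")
        outcome = rng.choice("HT")
        hits += guess == outcome
    return hits / n_tosses


accuracy = coin_toss_accuracy(100_000, seed=1)
print(f"long-run accuracy: {accuracy:.3f}")
```

The practical equivalent for demand forecasting is to measure the naive forecast's accuracy for each product first, and then set the improvement goal relative to that baseline rather than picking a round number.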

Selecting Inappropriate Demand Forecasting Software

Most companies have selected poorly performing and inappropriate forecasting software for their needs, which has often been purchased due to the brand association rather than the application being able to improve forecast accuracy. That is a bold statement, so what evidence do I have of this?

It’s simple.

I repeatedly run into clients who can’t do forecasting activities that I can perform efficiently in some of the best-of-breed applications that I have access to (which are also much less expensive than the applications they have). When I demonstrate this software, the response I get is, “Well, our software can do that.”

I then ask how long they have been trying to get it to work, and they say:

“a year and a half.”

Rather than taking this as evidence that they have made a poor software selection, they will tell me:

“this is a major vendor; we just can’t believe it can’t do (fill in the blank).”

The second piece of evidence is that it is common to see consensus forecasting attempted in a statistical forecasting application. This means that many executives are not familiar with the different specialized software that applies to the distinct forecasting processes, a topic which I address in significant detail in my upcoming book Supply Chain Forecasting Software.

Who Gets to Influence Demand Forecasting Software Selection?

Forecasting software selections are often limited to the executives, the vendors, and a large consulting company (which is trying to get the company to use its consultants, who are typically trained only in the large brands). Analyst firms like Gartner are not in the room but have influence, and Gartner tends to write from a strategic perspective and not directly at the application level. (They also derive more income from large vendor contributions.)

Their strategic perspective also tends to favor the most prominent vendors. Every influencing party in the room is aligned with the major vendors. So the executives often purchase a big brand, often with lagging functionality. This can be beneficial to the executive if the forecasting application fails to deliver much value (which is often the case with forecasting software from mega-vendors), as the executive can always say:

We did our due diligence, we bought SAP, it’s a major brand, what else can one do?

It turns out quite a bit more.

Perhaps I am naive, have a different perspective on risk, and don’t understand executive politics very well. I prefer a successful implementation with a best-of-breed vendor rather than a failed one, which must be defended by explaining that a major brand was selected. It’s a sad state of affairs when buying an uncompetitive application based on brand is considered the lowest-risk solution, and buying from a best-of-breed vendor requires “bravery” from an executive.

When executives buy software in the standard way, planners get an application that is difficult to use and cannot help them get their job done. It is strange that executives would want to remove the input of the people they will hold accountable for using the system. Executives should see their role as putting the best tool in planners’ hands, allowing them to improve forecast accuracy. I am unconcerned with which software other people use, as long as they like it and can use it effectively to meet their objectives.

Not Hiring the Right People to Perform Demand Forecasting

People with deep forecasting expertise tend not to work permanently for companies but instead work for best-of-breed vendors or consulting companies. This is a matter of budget. A company needs several forecasting experts of its own because consultants and vendors are always selling something to the company.

A company needs experts who can be relied upon to provide feedback on ideas and concepts for forecast improvement. Large companies can easily afford to staff this type of person but tend not to. That is a mistake that can easily be changed.

A List of the Best Consensus Forecasting Vendors

I am occasionally asked if I know of a list of the best consensus-based forecasting vendors. I provide a list of three vendors, two that only do consensus forecasting and one that can do both statistical and consensus-based forecasting (depending upon the requirement) in this article. However, companies should also find other lists to triangulate with.

I have not found such a list that I can recommend.

One reason is that consensus forecasting is still not interpreted as requiring a specialized forecasting solution by either large consulting companies or analysts like Gartner or Forrester. The problems large consulting firms have in selecting software are thoroughly explained here. Currently, large consulting companies are giving out incorrect information on consensus forecasting and the solutions that exist to meet the needs of a consensus forecasting process. This is primarily because the large consulting firms do not have relationships with these best-of-breed consensus forecasting vendors and don’t have anyone trained whom they can staff on these applications, so they won’t recommend them.

Financial Bias in Software Selection

However, consulting firms usually don’t publish lists of the best software because they have no unbiased research capability. To them, the best software for their clients is software for which they have trained resources they can bill. The question here is more pertinent for analysts. However, both of these analysts see consensus forecasting as simply one way of using any forecasting system. Unfortunately, this is one of companies’ biggest mistakes when beginning their consensus forecasting project. One of the primary reasons that so many consensus forecasting projects provide mediocre results is that an inappropriate solution is used. With the wrong tool, companies face many challenges even in getting input from the necessary departments, let alone in adjusting the results. If companies continue to do this, eventually, consensus forecasting will decline as a trend, and the pendulum will swing back to statistical forecasting in terms of emphasis.

I could easily see this happening, with all of the evidence that consensus forecasting is overrated being based upon companies that used statistical packages to perform consensus forecasting. That is literally how unscientific this process can be.

Where Major Vendors Stand with Consensus Forecasting

Consensus forecasting-specific software is an innovation area in the supply chain software market. Innovative market areas, such as consensus forecasting or inventory optimization and multi-echelon planning, concern the major vendors because their model is to put as little development effort as possible into their applications and try to adjust the perception of their products through slick marketing. They will attempt to co-opt any new area, often successfully, through salesmanship, marketing, and their pre-existing relationships with clients. This is the luxury enjoyed by prominent vendors. They can wait for innovation to be generated by the smaller vendors, then co-opt it after it becomes popular. They want to convince their customers that they already have anything new and innovative in their suite and that customers should not look elsewhere. They win if they can convince companies that a one-size-fits-all approach will work in every domain of the supply chain.

Why The Analysts Don’t Differentiate Consensus Forecasting Solutions

I think there are a few reasons that these analyst firms take this approach. However, the most critical factor is most likely that vendors pay them. SAP alone pays them several million dollars annually, and generally, the larger the vendor, the more it can afford to pay. The analysts do not disclose or publish that vendors pay them, and their business model is similar to that of financial rating agencies. The difference is that rating agencies are paid exclusively by those who want their products rated, whereas the analysts are paid by both the producers and the consumers, so their income sources are more balanced.

However, while they present themselves as having one customer (those who buy their research), they have two clients, the vendors being the second. Among the vendors, the largest pay the most, so the research results are slanted in their direction. The companies I think are the best in consensus forecasting are small and don’t pay Gartner to be listed. Secondly, the prominent vendors would lose if consensus forecasting were perceived and explained as a separate solution requiring separate software. This is because their solutions are extremely weak in consensus forecasting and require the company to adjust around the application rather than the application being inherently usable for the consensus forecasting process.

Consensus-Based Forecasting and Statistical Forecasting in One Application

The statement regarding the specialization of consensus forecasting applications requires some further clarification. I know of two pure CBF applications I would be comfortable implementing. There is one application that has its “heritage” in statistical forecasting but which I would be comfortable using as a consensus forecasting application.

However, this is the only dual (stat and consensus forecasting) application I am comfortable discussing. The other statistical applications I have worked with do not have any ability to add value to the consensus forecasting process.

This is not so much a criticism as a recognition that they were not designed for it. Therefore, the choice between pure consensus forecasting applications and combined stat-consensus forecasting applications is a bit less cut and dried than “consensus forecasting applications should be used for consensus forecasting,” because there is at least one exception. However, the selection must be driven by how the company intends to perform consensus forecasting. If consensus forecasting is closer to an S&OP process, where input is taken from other teams by conference call and the consensus items are adjusted by one group or person, the combined stat-consensus forecasting application would work fine. If, instead, the process requires direct system input, then a pure consensus forecasting application should be selected.

Conclusion

It seems strange that the research shows so little improvement from consensus forecasting, as domain expertise is often distributed across multiple individuals. Also, S&OP, a type of consensus forecasting, has to bring together individuals to make a shared forecast because no group would allow just operations or finance to create the overall forecast. One point of weakness of the research may be the software that is used. Many companies attempt consensus forecasting with statistical software, and few focus on reducing bias. Therefore, as implemented by companies who don’t focus on high-quality implementation, it is easy to see how consensus forecasting can show so little improvement.
