How to Understand Test Results: Monthly Versus Weekly Forecasting Buckets

Executive Summary

- This study was performed to test a client’s hypothesis that weekly forecasting improves forecast accuracy versus monthly forecasting.

Why I Performed this Study

It is common for companies to consider moving towards a weekly statistical forecasting bucket for supply chain management. I recently came upon a company that had already moved towards weekly forecasting planning buckets.


I brought up the topic of monthly forecasting planning buckets, and they asked me why I suggested moving to monthly forecasting. In fact, at most companies I have worked in, the supply chain forecast planning bucket has been monthly. Interestingly, many companies that are on monthly forecasting buckets discuss moving to weekly forecasting buckets.

Background and Research into Weekly Forecasting Versus Monthly Forecasting

When I went to find research on the question of weekly versus monthly forecasting in a research database that I use, I could not find an article on this topic. I searched for “weekly versus monthly forecasting” and “forecasting planning bucket” and could not find anything. I also performed Google searches on these terms and did not find anything related to forecasting error. I thought that strange.

And in the first version of this article, I stated that I did not find anything, yet as you can see, Simon Clarke commented on this post and pointed out that there were a few good articles under the title of “temporal aggregation.” That was the missing key. I was using incorrect terminology.

One of these articles was titled Demand Forecasting by Temporal Aggregation. It was humorous to find it describing the exact issue I had run into when I looked into this topic. Here is a quotation from this paper.

“Aggregation of demand in lower-frequency “time buckets” enables the reduction of the presence of zero observations in the case of intermittence or, generally, reduces uncertainty in the case of fast demand. Intermittent demand items (such as spare parts) are known to cause considerable difficulties in terms of forecasting and inventory modeling. The presence of zeroes has significant implications in terms of: (i) difficulty in capturing underlying time series characteristics and fitting standard forecasting models; (ii) difficulty in fitting standard statistical distributions such as the normal; (iii) deviations from standard inventory modeling assumptions and formulations—that collectively render the management of these items a very difficult exercise.”

Exactly!

Improving the Reduction of Zero Period Observations

In the article titled Improving Forecasting via Multiple Temporal Aggregation, I found this quotation:

“In the case of intermittent demand data, moving from higher (monthly) to lower (yearly) frequency data reduces (or even removes) the intermittence of the data (Nikolopoulos et al., 2011), minimizing the number of periods with zero demands. This can make conventional forecasting methods applicable to the problem.”

I was surprised to see that what I performed before ever reading these articles was called temporal aggregation and has a definition laid out in the article Demand Forecasting by Temporal Aggregation:

“Temporal aggregation in particular refers to the process by which a low-frequency time series (e.g., quarterly) is derived from a high-frequency time series (e.g., monthly) [22]. This is achieved through the summation (bucketing) of every m periods of the high-frequency data, where m is the aggregation level. Deviations from the degree of variability accommodated by the normal distribution often render standard forecasting and inventory theory inappropriate.” [11, 29, 35].
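The summation described in the quotation can be sketched in a few lines. This is a minimal illustration, not the procedure used in the study: the function names and the demand figures are hypothetical, and any trailing periods that do not fill a complete bucket are simply dropped.

```python
def aggregate(series, m):
    """Sum every m consecutive periods of a high-frequency series.

    m is the aggregation level. Trailing periods that do not fill a
    complete bucket are dropped (an assumption made for this sketch).
    """
    if m < 1:
        raise ValueError("m must be a positive integer")
    full = len(series) - len(series) % m
    return [sum(series[i:i + m]) for i in range(0, full, m)]


def zero_share(series):
    """Fraction of periods that have zero demand."""
    return sum(1 for x in series if x == 0) / len(series)


# Hypothetical weekly demand for one intermittent item (12 weeks).
weekly = [0, 3, 0, 0, 0, 0, 5, 0, 2, 0, 0, 1]

# m = 4 approximates a monthly bucket built from weekly data.
four_week = aggregate(weekly, 4)

print(four_week)                # [3, 5, 3]
print(zero_share(weekly))       # 8 of 12 weeks are zero
print(zero_share(four_week))    # no zero buckets remain
```

In this made-up series, two-thirds of the weekly periods are zero, while none of the aggregated buckets are, which is the effect both quotations describe.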

When is a Service Part Data Set Not a Service Part Data Set?

It was, in fact, the case that I had a forecast dataset with a large number of intermittent sales histories. This was not a service parts data set, but it looked like one because the company in question had performed so much product proliferation and did not prune its low-selling items. This is a topic I want to cover in a future article: how out-of-control marketing is leading to more and more data sets that look like service parts data sets when they aren’t.

Getting the Forecasting Timing Terminology Correct

To begin, I wanted to distinguish the following forecast timings before we get into this topic in depth.

These are the most important timings in demand planning:

Forecasting Terminology

| Term | Definition |
|---|---|
| Forecast Planning Bucket | This is the increment of time for which forecasts are generated. The most common buckets are daily, weekly, or monthly. In most cases, the planning bucket corresponds to the forecast generation frequency. Thus, you would generate weekly forecasts each week, monthly forecasts each month, etc. However, this is not essential. The planning bucket could be longer or shorter than the forecast generation frequency. |
| Forecast Horizon | This is how far into the future the forecast is generated. |
| Forecast Generation Frequency | This is how frequently the statistical forecast is generated. |

Understanding Forecasting Timings

For this article, we will only be discussing the first timing, the planning bucket. We will not cover the other two timings except where we need to explain when they are incorrectly commingled with the planning bucket. In my experience, planning timings are some of the most confusing aspects of the subject. For instance, what does the term “weekly forecasting” even mean? It could refer to the “Forecast Generation Frequency” or to the “Forecast Planning Bucket.”

Common Reasons for Moving from a Monthly Forecasting Bucket to a Weekly Forecasting Bucket

There are several common reasons often given for moving to a weekly statistical forecast. The common ones that I have heard are the following:

- Recent Sales History Updating: Weekly forecasting allows a company to pick up the most recent sales history and incorporate it into its forecast.

- Weekly Pattern Recognition: There are patterns within the month that are not accounted for if the forecast is generated monthly (and then, as is usually the case, disaggregated to a weekly planning bucket when sent to the supply planning system).

The first reason is incorrect. This is because sales history is continuously updated: it is sent from the sales or order management system to the forecasting system. At least that has been the case in all the companies that I have worked for. Thus, incorporating the latest sales history into the statistical forecast has to do with the Forecast Generation Frequency (as described above). The Forecast Generation Frequency and the Forecast Planning Bucket are independent of each other.

Example

Here is an example of why:

- One can have a weekly forecast generation frequency and a monthly planning bucket.

- Or one can have a monthly forecast frequency and a weekly planning bucket.

At every company I have consulted for, the statistical forecasting job is set to run weekly, on the weekend. Now, this is not to say that statistical forecasting cannot be triggered at any time for a particular product location or group of product locations. It frequently is. A demand planner would have the ability to run an ad-hoc statistical forecast at any time.

The regeneration of the forecast in batch by a scheduled job occurs in most companies that perform supply chain forecasting on a weekly basis.

Picking Up Patterns

This means that the only valid argument for weekly forecasting is picking up patterns, which is point number two above. Before we get to testing whether weekly forecasting is more accurate than monthly forecasting disaggregated to a week, it is important to note that in many cases, it is not relevant whether the weekly forecast is more accurate. This is because companies have vendor minimums or economic order quantities on their products.

Say the average sales per week for a particular product location is five units, yet the product location has a minimum order quantity of 20. Moving to weekly forecasting will not help supply planning, or not very much, even if the weekly forecast is more accurate. This is because, on average, the company will not be able to action the forecast that is broken out to a week. Thus, the ability to leverage improved forecast accuracy when the forecast planning bucket is a week depends upon the relationship between the sales history and the minimum order quantity.

If this relationship does point to supply planning benefiting from weekly forecasting (that is, the average sales volume per week is significantly above the minimum order quantity), then we can move to the next question.
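The relationship between weekly sales and the minimum order quantity can be checked per product location before any accuracy test is run. The sketch below is only an illustration: the function names are hypothetical, and the one-week cutoff in `max_weeks_cover` is an assumed threshold, not a figure from the study.

```python
def weeks_of_demand_per_order(avg_weekly_sales, min_order_qty):
    """How many weeks of average demand one minimum order covers."""
    if avg_weekly_sales <= 0:
        return float("inf")  # no demand: an order never sells through
    return min_order_qty / avg_weekly_sales


def weekly_bucket_actionable(avg_weekly_sales, min_order_qty,
                             max_weeks_cover=1.0):
    """True when a minimum order covers no more than max_weeks_cover weeks.

    max_weeks_cover is an assumed cutoff for this sketch. When one order
    covers several weeks of demand, supply planning cannot act on a
    week-level forecast, however accurate it is.
    """
    cover = weeks_of_demand_per_order(avg_weekly_sales, min_order_qty)
    return cover <= max_weeks_cover


# The example from the text: five units per week, minimum order of 20.
print(weeks_of_demand_per_order(5, 20))   # 4.0 weeks of demand per order
print(weekly_bucket_actionable(5, 20))    # False
```

Product locations that fail this check gain little from a weekly bucket regardless of how the accuracy comparison turns out.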

The Logic for the Weekly Forecasting Pattern Versus A Monthly Forecasting Pattern

The logic is that if a weekly pattern exists, weekly forecasting will do a better job of capturing it. The complication is that one gives up quality in model selection to gain this benefit. This is because one of the problems with forecasting in a small planning bucket such as a week is that it reduces the volume per bucket of the forecasted item. When the planning bucket is a week, the forecasting system is using weeks of sales history to create the forecast.

So while monthly sales may be 40 units, the weekly history is bound to be more variable, and when the sales volume is low for a product location combination, the weekly sales history is much more likely to be intermittent.

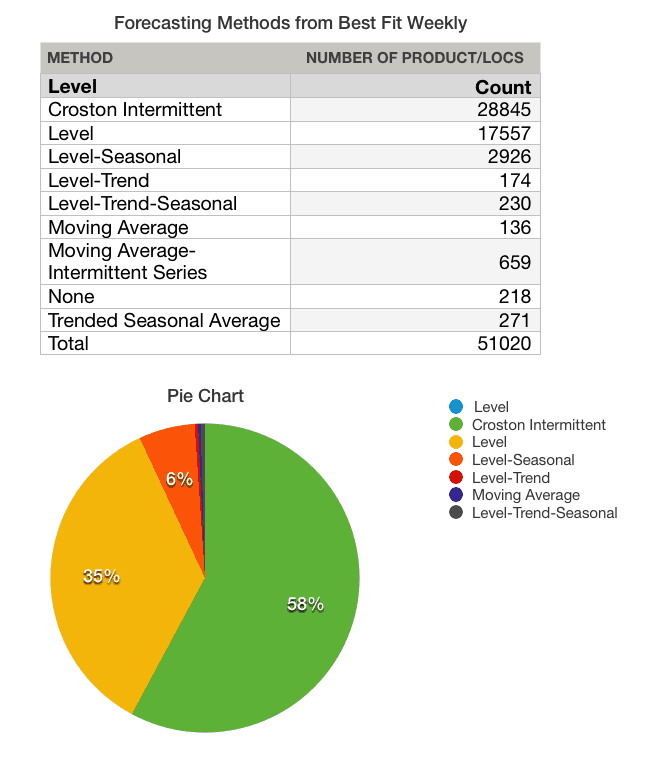

To show this on a real data set, I compared the forecast models that were assigned to the product location combinations when the forecast was created in a weekly planning bucket versus when it was assigned in a monthly planning bucket.

The most important features to consider here are:

- This was performed on the same dataset.

- A best-fit procedure was performed to automatically assign the product locations to the model with the lowest fitted forecast error.
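To make the idea of a best-fit procedure concrete, here is a minimal sketch. It is not the procedure of the forecasting system used in the study: the three candidate models, the `warmup` parameter, and the use of mean absolute error as the fitted error are all assumptions chosen to keep the example short.

```python
def naive(past):
    """Forecast the next period as the last observed value."""
    return past[-1]


def moving_average(past, window=3):
    """Forecast the next period as the mean of the last `window` values."""
    recent = past[-window:]
    return sum(recent) / len(recent)


def exp_smoothing(past, alpha=0.3):
    """Simple exponential smoothing; returns the final smoothed level."""
    level = past[0]
    for x in past[1:]:
        level = alpha * x + (1 - alpha) * level
    return level


# Hypothetical candidate set; a real system would offer many more models.
CANDIDATES = {
    "naive": naive,
    "moving_average": moving_average,
    "exp_smoothing": exp_smoothing,
}


def fitted_mae(history, model, warmup=3):
    """Mean absolute one-step-ahead error over the history after warmup."""
    if len(history) <= warmup:
        raise ValueError("history must be longer than the warmup")
    errors = [abs(history[t] - model(history[:t]))
              for t in range(warmup, len(history))]
    return sum(errors) / len(errors)


def best_fit(history, candidates=CANDIDATES, warmup=3):
    """Return the name of the lowest-error model and all the scores."""
    scores = {name: fitted_mae(history, model, warmup)
              for name, model in candidates.items()}
    return min(scores, key=scores.get), scores


# Hypothetical history with a steady upward trend.
trend = [1, 2, 3, 4, 5, 6, 7, 8]
model, scores = best_fit(trend)
print(model, scores)  # naive wins: both smoothing models lag a steady trend
```

Running the same selection on one history at two bucket sizes is what produces the two model distributions compared in this section.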

The Weekly Best Fit

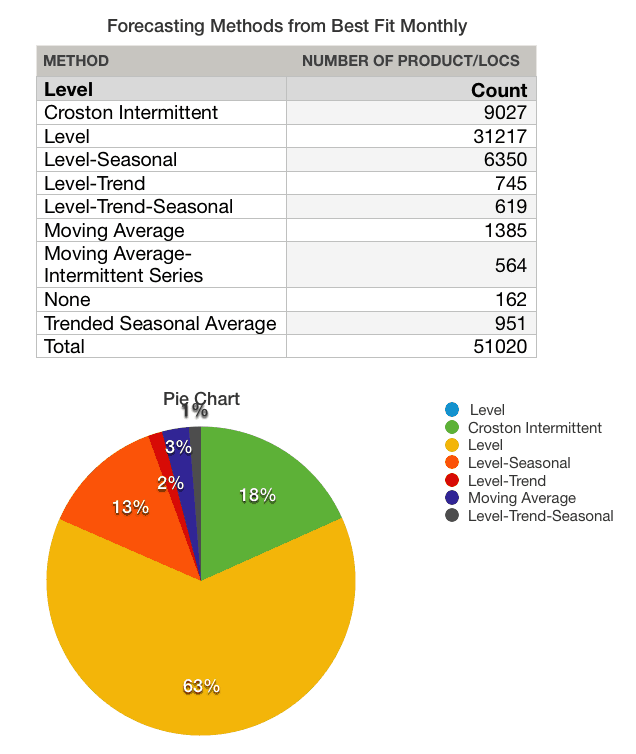

Now let us look at the same dataset when it is forecasted monthly.

The Monthly Best Fit

- Notice that the models applied became higher quality models, or should I say more predictive models.

- The first model selection had almost 57% of the data set going out on Croston’s intermittent model. This model was designed for forecasting service parts. Service parts are considered the most difficult products to forecast, and they have a high monthly forecast error.

- When the same product location combinations were set to a monthly forecast bucket, Croston’s model was assigned to only 18% of the product location combinations. Notice that the share of more predictive models, like Level Seasonal, Level Trend, etc., has increased.

From comparing these two model selections, one can predict that the second would have a lower forecast error. In cases where companies perform weekly ordering, that is not good enough. One must prove that when the monthly forecast is disaggregated to a weekly forecast, the forecast error is lower than when a straight weekly forecast is generated.

That was what I tested.

Testing the Hypothesis of Weekly Forecasting Versus Monthly Forecasting

I tested this hypothesis with client data. I can’t say that the results of this study would be the same for every data set from every company. What is interesting is that this client, in particular, was very confident that moving to weekly forecasting was a good idea.

Before we get into the results, let us discuss the method I used for performing time-based disaggregation, or in more basic terms, how I disaggregated from the monthly generated forecast value to a weekly value.

Time-Based Forecast Disaggregation

Once you move to a monthly forecast bucket, you must disaggregate to a week. This happens all the time when the forecast is eventually sent to supply planning. In this case, because we are comparing accuracy at a weekly level, we need to disaggregate the forecast from the month to the week to perform the calculation.

Is Time-Based Disaggregation Complicated?

Time-based disaggregation needn’t be that complicated. Generally, using something similar to the actual breakdown of the days in a month will do. This might be something like the following: 21%, 21%, 21%, 21%, 18% (for the last week of a five-week month). I applied these disaggregation percentages when I disaggregated from a month to weeks in this study. These are calendar months, not fiscal months. I discussed this issue with a colleague who pointed out that it gets very messy to try to forecast in actual calendar months and that, when possible, fiscal months should be used as the planning bucket.
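A fixed-percentage split like this is easy to sketch. The percentages as listed sum to 102%, so the sketch below normalizes the weights so that the weekly values add back up to the monthly forecast. That normalization is an assumption of this example, not a description of how the study handled it, and the function name and forecast value are hypothetical.

```python
def disaggregate(monthly_forecast, week_weights):
    """Split a monthly forecast across weeks in proportion to the weights.

    Weights are normalized so the weekly values sum to the monthly value
    (an assumption of this sketch).
    """
    total = sum(week_weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return [monthly_forecast * w / total for w in week_weights]


# Weights from the text for a five-week month (they sum to 102).
FIVE_WEEK_WEIGHTS = [21, 21, 21, 21, 18]

# Hypothetical monthly forecast of 100 units.
weekly_forecast = disaggregate(100.0, FIVE_WEEK_WEIGHTS)
print([round(x, 2) for x in weekly_forecast])
print(round(sum(weekly_forecast), 6))  # adds back up to the monthly value
```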

The Results of Weekly Forecasting Versus Monthly Forecasting

What I found is that the forecast error for a disaggregated month was lower than the error of the forecast generated directly in weekly buckets. It was over three percentage points lower in weighted MAPE* (mean absolute percentage error). Not a large amount, but still significant. Furthermore, forecasting in weekly buckets is more work than forecasting in a monthly bucket. There is much more data processing required, and this can limit the speed of the system. Thus, to justify forecasting in weekly buckets, the forecast error for forecasting weekly would have to be lower than forecasting monthly and then disaggregating to weekly.

Essentially what happened is that the weekly pattern was outweighed by the improvement in the model selection that was enabled by the use of the monthly forecasting bucket.

Notes

*Weighted MAPE (wMAPE) is the forecast error calculation that I usually use. It controls for the way an unweighted error is biased towards lower-volume items (which tend to have a higher forecast error). wMAPE’s only real weakness is that it does not cap very large errors, which can pull the overall error higher than is representative. There were other assumptions I used related to the treatment of zeros in the sales history or forecast. I will cover this in a future article.
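For readers unfamiliar with the measure, the sketch below contrasts a plain MAPE with a volume-weighted one. It uses the common definition of wMAPE (total absolute error divided by total actual demand), which is an assumption here: the exact calculation used in the study, including its treatment of zeros, is not reproduced, and the numbers are made up.

```python
def mape(actuals, forecasts):
    """Unweighted MAPE; periods with zero actuals are skipped."""
    ratios = [abs(a - f) / abs(a)
              for a, f in zip(actuals, forecasts) if a != 0]
    if not ratios:
        raise ValueError("MAPE is undefined when all actuals are zero")
    return sum(ratios) / len(ratios)


def wmape(actuals, forecasts):
    """Volume-weighted MAPE: total absolute error over total actuals."""
    if len(actuals) != len(forecasts):
        raise ValueError("actuals and forecasts must have equal length")
    total_actual = sum(abs(a) for a in actuals)
    if total_actual == 0:
        raise ValueError("wMAPE is undefined when total actuals are zero")
    total_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    return total_error / total_actual


# Hypothetical data: one high-volume item and one low-volume item.
actuals = [100, 2]
forecasts = [90, 4]

print(mape(actuals, forecasts))   # dominated by the low-volume item
print(wmape(actuals, forecasts))  # weighted by volume, so much lower
```

The low-volume item is off by only two units, yet it contributes a 100% error to the unweighted measure. Weighting by volume keeps it from dominating the result.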

*As a side note, I also tried a different time-based disaggregation that was based on the creation of an index that used the average of the sales for the week in question over the past three years and then was normalized. This disaggregation approach worked less well in this instance than the simpler disaggregation scheme.
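The index-based alternative in this note could look roughly like the following. This is a guess at the general shape only: the function name, the data layout (one list of weekly sales per past year for the same month), and the even-split fallback are all assumptions, and the sales figures are invented.

```python
def weekly_index(history_by_year):
    """Build normalized weekly weights from several years of history.

    history_by_year holds one list per past year, each containing the
    sales of the same weeks of the month in question (assumed layout).
    """
    n_weeks = len(history_by_year[0])
    if any(len(year) != n_weeks for year in history_by_year):
        raise ValueError("every year must cover the same number of weeks")
    n_years = len(history_by_year)
    averages = [sum(year[w] for year in history_by_year) / n_years
                for w in range(n_weeks)]
    total = sum(averages)
    if total == 0:
        return [1 / n_weeks] * n_weeks  # no history: fall back to even split
    return [avg / total for avg in averages]


# Hypothetical sales for the four weeks of one month over three years.
history = [
    [10, 20, 30, 40],
    [20, 20, 20, 40],
    [30, 20, 10, 40],
]
index = weekly_index(history)
print([round(w, 2) for w in index])  # [0.2, 0.2, 0.2, 0.4]
```

The resulting weights would replace the fixed percentages when splitting a monthly forecast into weeks.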

Conclusion

- This study showed the importance of testing different hypotheses when it comes to configuring forecasting systems. Not enough companies test hypotheses before setting the forecast system to work one way or another. It is very easy to develop a hypothesis, but it is much more work to test one. I have repeatedly come upon companies that have been forecasting in some way that they never proved improved forecast accuracy. It is very common for people within companies to assert that they already know what will improve the forecast, and this is a major reason for the lack of hypothesis testing in forecasting.

- I have checked forecast databases for nine companies now. I would love to have more, but I am still coming upon surprises due to how the data changes per client.

- Overall, my experience in testing forecasting data sets leads me to believe that it is more likely than not that forecasting monthly and disaggregating is better than forecasting weekly. I cannot say for sure for any particular company’s situation without performing the same test I performed here on that particular data set. The only way that this would not be the case is if that company had an extremely variable weekly demand. Secondly, to benefit from forecasting in a weekly bucket, the average demand and minimum order quantities of the product locations would need to justify the work put into developing the weekly forecast.

The Connection to Supply Planning Buckets

To make supply chain planning work, it is necessary to connect up demand with supply planning.

One very common statement is that supply planning needs to be placed into weekly buckets. However, when one evaluates this statement, it turns out that there is not very much support for it. Normally what the company actually means when they bring up this topic is that they want to see the data in the Planning Book in weekly periods. The article below explains both how time bucket storage and time bucket display are performed and how they are not necessarily the same. As this article shows, data can be stored and displayed in different periodicities (month, week, day).

Of course, the Storage Bucket Profile should always be smaller than the Time Buckets Profile (i.e., the display).

Weekly Display or Weekly Order Batching?

Any request that the supply plan actually be batched into weekly buckets is not logical, even though it’s quite common to hear the statement “we plan in weekly buckets” when working on projects. The first reason is that assigning all materials a “weekly order batch” is simply arbitrary and not a good practice. Secondly, the supply plan must eventually be released to the production planning engine and procurement system, where orders broken down by day will be expected and are desirable. Lot-sizing parameters such as economic order quantities, which should be thoroughly analyzed and reviewed prior to the go-live and then periodically after that, set the appropriate order aggregation. In some cases, the economic order quantity will be 20 days of supply; in other cases, it may be 1 or 2 days of supply. This is where order batching should be controlled in any supply planning system, and not through the bucket periodicity.

The Next Question

The next question I have is whether a quarterly forecast, disaggregated per week, would perform better than a monthly forecast disaggregated per week. I have never tested this before. My client asked me why, if I was proposing monthly over weekly forecasting, I would not also recommend quarterly forecasting, disaggregated to a weekly planning bucket.