How AI Projects Human Features onto Inanimate Objects

Executive Summary

- Since its beginnings, the AI field has projected human consciousness onto software.

- We cover the problems with these projections.

Introduction

Since the earliest days of AI, the emphasis has been on emulating humans. As we cover in the article Who Was More Accurate, Marvin Minsky or Hubert Dreyfus on AI?, a significant belief that held back AI’s development, sending it down dead ends, was that the human brain worked like a digital computer. The Turing Test, proposed by Alan Turing, holds that the measure of an AI is its ability to fool humans into thinking it is human. The following quotations examine the usefulness of this test and show what happens when you push a chatbot, which is attempting to simulate a human, outside of its domain.

Our References for This Article

If you want to see our references for this article and other related Brightwork articles, see this link.

Is the Turing Test Important?

“..the Turing test isn’t actually a good measure of algorithmic intelligence. For one thing, it’s easy to pass the Turing test if you can make the topic of conversation narrow enough. I chatted with the Whole Foods Market bot on Facebook to test this theory.

Whole Foods: Hi Janelle! We’re here to help you find recipes as simple as their ingredients.

Me: Do you have a recipe for guacamole?

Whole Foods: <posts a recipe: Guacamole with Cilantro>

Me: Is it okay to make guacamole from green peas?

Whole Foods: <posts a recipe: Green Pea Guacamole>

Me: Give me a recipe that uses avocado. Anything but guacamole.

Whole Foods: <posts a recipe: Avocado Salsa with Olives and Cilantro>

At this point, the conversation is a bit ambiguous. The thing I’m chatting with hasn’t specifically said whether it’s human or a bot and is completely handling questions that can be answered by posting a recipe. But when I stray from this narrow format, the bot quickly reveals itself.

Me: How can I tell if an avocado is ripe?

Whole Foods: <posts a recipe: Edamame Guacamole>

Me: Do you have a favorite Star Wars character?

Whole Foods: <posts a recipe: Sauteed Shrimp with Polenta and Mango>” – You Look Like a Thing and I Love You
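The behavior in this exchange can be imitated with very little logic. The following is a minimal sketch, assuming a bot that only matches keywords and always answers with a recipe. The `RECIPES` table, the `FALLBACK` value, and the `reply` function are hypothetical names invented for illustration; this is not how the Whole Foods Market bot was actually built.

```python
# Hypothetical keyword-to-recipe table; the recipe titles are taken from
# the conversation quoted above, the structure is an assumption.
RECIPES = {
    "guacamole": "Guacamole with Cilantro",
    "avocado": "Avocado Salsa with Olives and Cilantro",
}

# Hypothetical default used when no keyword matches.
FALLBACK = "Sauteed Shrimp with Polenta and Mango"


def reply(message):
    """Return a recipe post for any message, whether or not it asks for one."""
    text = message.lower()
    for keyword, recipe in RECIPES.items():
        if keyword in text:
            return f"<posts a recipe: {recipe}>"
    # The bot has no notion of "off topic", so it posts a recipe anyway.
    return f"<posts a recipe: {FALLBACK}>"


for question in [
    "Do you have a recipe for guacamole?",
    "How can I tell if an avocado is ripe?",
    "Do you have a favorite Star Wars character?",
]:
    print(question, "->", reply(question))
```

Inside the narrow recipe format, the replies look plausible. Outside it, the same matching rule produces an answer that has nothing to do with the question, which is the failure the quotation describes.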

It’s Called Anthropomorphizing

Attributing intelligence to a computer is anthropomorphizing a lifeless object; intelligence is the province of biological entities.

This is expressed in the following quotation.

“Humans often anthropomorphize by assuming that animals, trees, trains and other non-human objects have human traits. In children’s stories and fairy tales, for example, pigs build houses that wolves blow down and foxes talk to gingerbread men. Think about these stories for a minute. The three little pigs have human characteristics reflected in the houses they build of straw, sticks, or bricks. The wolf uses various ruses to try to lure the pigs out of the brick house, but they outwit him and then put a cauldron of boiling water in the fireplace when they realize that the wolf is climbing up the roof in order to come down the chimney.

The gingerbread man is baked by a childless woman, but then runs away from the woman, her husband, and others, taunting his pursuers by shouting, “Run, run as fast as you can! You can’t catch me. I’m the Gingerbread Man!” In some versions, a fox tricks the gingerbread man into riding on his head in order to cross a river and then eats him. In the version read to me when I was a child, a wily bobcat tries to lure the gingerbread man into his house for dinner, but birds in a nearby tree warn the gingerbread man that he is the dinner. The gingerbread man flees while the bobcat snarls, “Botheration!” The gingerbread man runs back home, where he is welcomed by his family and promises never to run away again. These are enduring fairy tales because we are so willing, indeed eager, to assume that animals (and even cookies) have human emotions, ideas, and motives. In the same way, we assume that computers have emotions, ideas and motives. They don’t.

The success of movies such as Terminator and Matrix has convinced many that this is our future and it will be here soon. Even luminaries such as Stephen Hawking and Elon Musk have warned of robotic rebellions. In 2014, Musk told a group of MIT students “With artificial intelligence we are summoning the demon…In all those stories where there’s the guy with the pentagram and the holy water, it’s like yeah he’s sure he can control the demon. Didn’t work out.” Three years later, Musk posted a photo of a poster with the ominous warning “In the end, the machines will win.” – The AI Delusion

The following quotation explains why processing power alone does not produce intelligence.

“True intelligence is the ability to recognize and assess the essence of a situation. Humans understand this essence by drawing analogies to other experiences and they use this essence to add to their collection of experiences. Hofstadter argues that human intelligence is fundamentally about collecting and categorizing human experiences, which can then be compared, contrasted and combined. Limiting the scope of computer science research has limited its potential. Computers will never be truly intelligent in the way human minds are intelligent if programmers don’t even try. Hofstadter lamented that, “to me, as a fledgling [AI] person, it was self-evident that I did not want to get involved in that trickery. It was obvious: I don’t want to be involved in passing off some fancy program’s behavior for intelligence when I know that it has nothing to do with intelligence.”

Processing power is not the bottleneck for computers becoming smarter than humans. Being able to process one million, one billion, or one trillion words per second doesn’t have much to do with whether computers can truly think like humans — using common sense, logical reasoning, and emotions and deducing general principles from a specific situation and applying these principles in other contexts.” – The AI Delusion

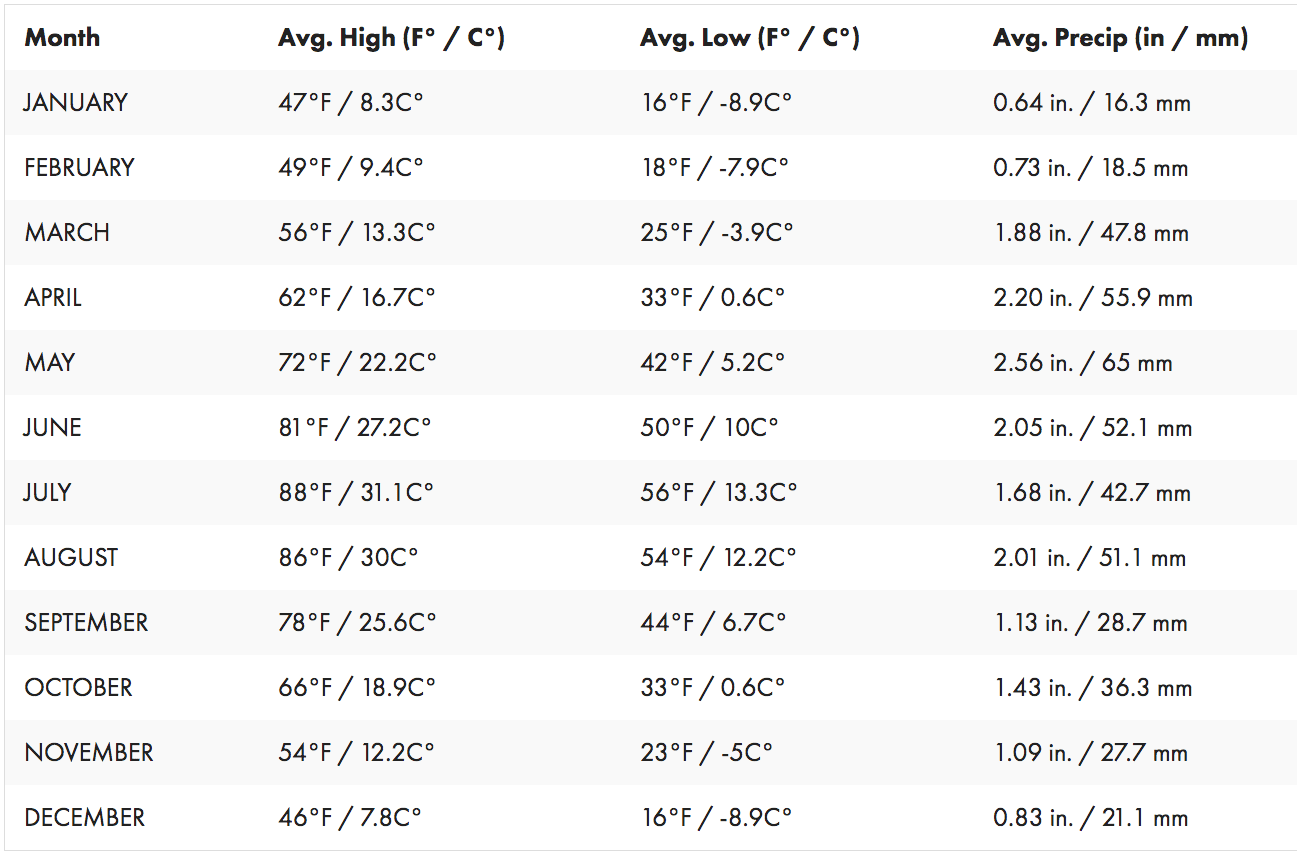

A table is not intelligent.

This is a table of the monthly weather in Denver, CO. The same table can be printed on paper.

Is the table useful?

Undoubtedly.

Is the table intelligent? Is it more intelligent if a computer displays it or if it is printed out on paper? Does the table show an “understanding” of the weather in Denver, or is the understanding “created” when reviewed by a human who can interpret the table? A table is not conscious, and neither is advanced AI.

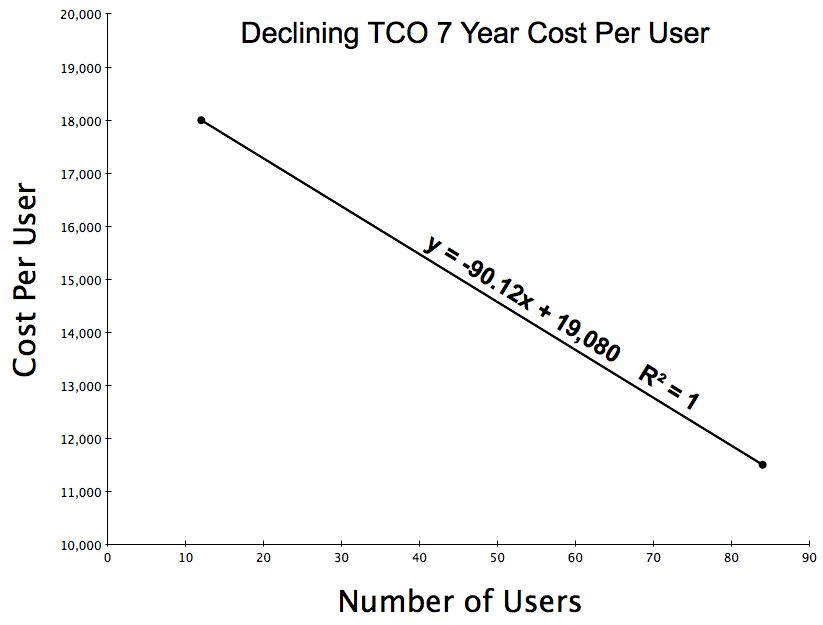

This is a regression formula that fits a line to data points. Regression is a type of Machine Learning. The software performed the fit, but what did the “machine” actually learn? It followed its programmed instructions, which told it to run a routine to fit the data points.
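To make the point about programmed instructions concrete, here is a minimal sketch of a line-fitting routine, assuming ordinary least squares with a single input variable. The function name `fit_line` and the sample data points are hypothetical and are not taken from the image above.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need at least two (x, y) pairs of equal length")

    mean_x = sum(xs) / n
    mean_y = sum(ys) / n

    # Sum of squared deviations of x, and of the x-y cross deviations.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))

    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data points that lie exactly on y = 2x + 1.
slope, intercept = fit_line([0, 1, 2, 3], [1, 3, 5, 7])
print(slope, intercept)  # prints: 2.0 1.0
```

Every step is fixed arithmetic on sums and means. The routine returns two numbers, and any interpretation of what the fitted line means is supplied by the person reading the result.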

We were told, in error, that AI would surpass human consciousness before 2001. Inaccurate predictions of this kind have been a constant feature of AI research.

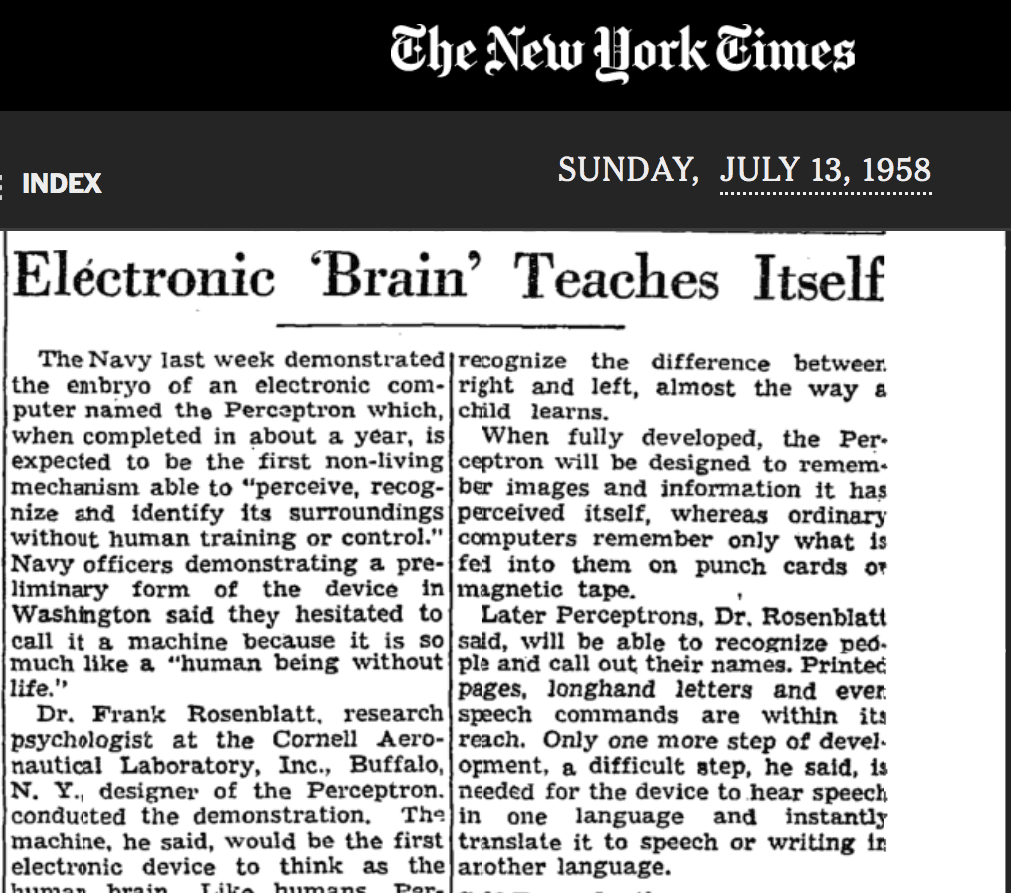

This 1958 New York Times article jumps directly from the most basic capabilities to projecting conscious machine entities, or “electronic brains.”

Conclusion

Perhaps most curious is how inaccurate AI proponents have been when projecting human qualities onto inanimate objects. This projection goes back to the earliest stages of AI research and shows no sign of abating.