How Microsoft Somehow Compensated NIST to Write Fake Research on Linux Security

Executive Summary

- Microsoft Windows has had long-term security problems, so Microsoft decided to pay the Department of Commerce to say that Linux is less secure.

- We cover the typical Microsoft dirty trick.

Video Introduction: How Microsoft Somehow Compensated NIST to Write Fake Research on Linux Security

Text Introduction (Skip if You Watched the Video)

NIST released a laughable report in 2020 that ranked Windows as a more secure operating system than Linux. NIST did not disclose in the report that it was prompted to write this fake report by the relationship between The Department of Commerce (which runs NIST) and Microsoft. You will learn how NIST produced one of the worst software analyses ever written.

Our References for This Article

If you want to see our references for this article and other related Brightwork articles, see this link.

Reviewing the Ratings and Scoring by NIST

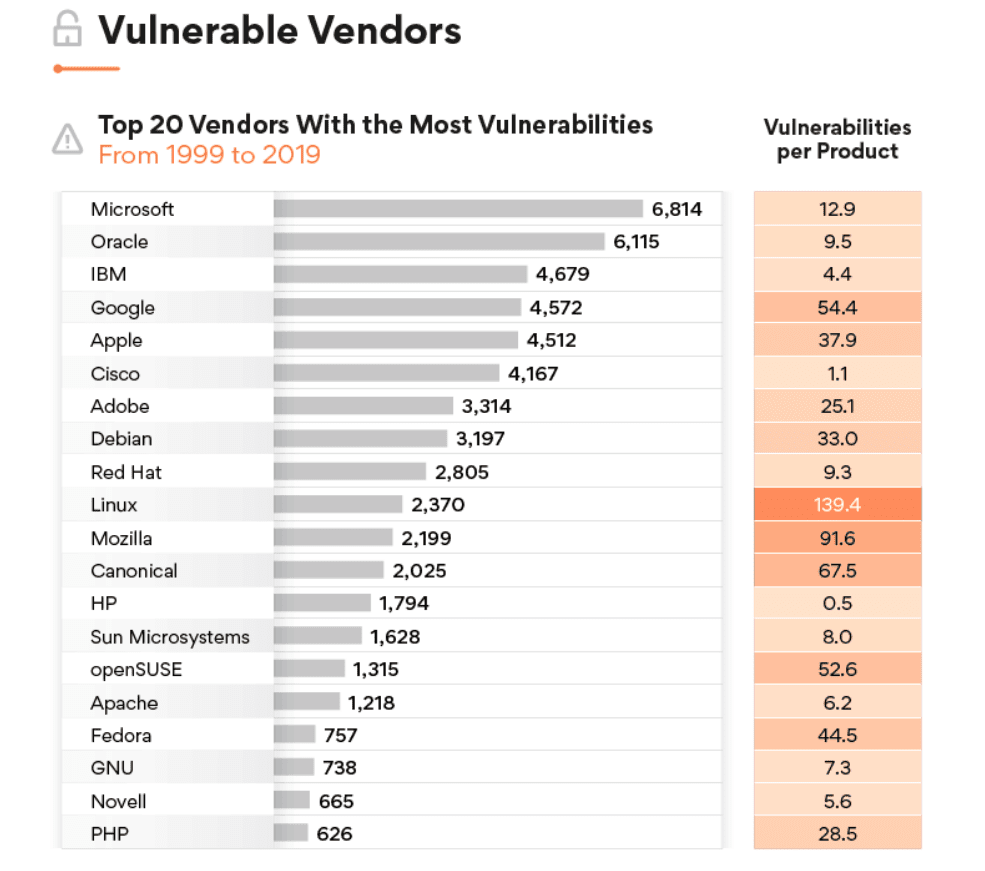

The image shows NIST's scoring of security by vendor.

There are so many problems with this list. Let us review some questions that come to mind.

Issue #1: Is Linux an Operating System?

No. This might be news to NIST, but Linux is a kernel. This kernel is used in multiple distributions. Canonical is a company or vendor that distributes Ubuntu, which combines several GNU and other packages into a complete OS. openSUSE is a vendor. But Fedora, Linux, and Debian are not companies. Fedora and Debian are distributions that use Linux.

GNU is even more complicated to explain. The idea that GNU can be listed as if it were the same kind of entity as Canonical is inaccurate. Furthermore, GNU is used across the Linux distributions.

What Does NIST Think GNU Is?

Let us review some important quotes from Wikipedia on GNU.

GNU (/ɡnuː/) is an extensive collection of free software, which can be used as an operating system or can be used in parts with other operating systems. The use of the completed GNU tools led to the family of operating systems popularly known as Linux. Most of GNU is licensed under the GNU Project’s own General Public License (GPL).

The GNU project maintains two kernels itself, allowing the creation of pure GNU operating systems, but the GNU toolchain is also used with non-GNU kernels. Due to the two different definitions of the term ‘operating system’, there is an ongoing debate concerning the naming of distributions of GNU packages with a non-GNU kernel.

The system’s basic components include the GNU Compiler Collection (GCC), the GNU C library (glibc), and GNU Core Utilities (coreutils), but also the GNU Debugger (GDB), GNU Binary Utilities (binutils), the GNU Bash shell. GNU developers have contributed to Linux ports of GNU applications and utilities, which are now also widely used on other operating systems such as BSD variants, Solaris and macOS.

However, NIST repeatedly refers to all of these entities as vendors. This is very peculiar and indicates that NIST does not understand how the Linux ecosystem works, or they are deliberately miscategorizing things to achieve a predetermined outcome. However, any person reading this list who does not already know the Linux ecosystem will be confused about how the ecosystem functions. One knows less after reading this list (with many readers assuming that NIST knows what they are talking about) than before they read the list. So not only is the primary conclusion wrong, but the explanation of and categorization performed by NIST is also wrong — and one does not have to be an advanced Linux user to figure this out. Anyone with even brief exposure to Linux would be able to see the flaws in NIST’s categorizations.

Issue #2: What is Being Tracked by NIST?

The Linux organization has roughly 170 projects.

Which products do the vulnerabilities that NIST reports relate to? If one does the math and divides the number of vulnerabilities by the vulnerabilities per product, one arrives at 17. This implies that NIST thinks Linux has 17 products. Why would NIST count in this way? If the Linux kernel is what is relevant for desktop operating systems, why would 17 products be used?

How Many Linux Kernels Are There?

There is not just one Linux kernel. I recently watched an installation video of a Linux distribution and found that there were roughly 300 Linux kernels available. Wouldn’t the new math be 2370 vulnerabilities / 300 or 7.9 vulnerabilities per kernel?
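The arithmetic above can be reproduced with a short sketch. It uses only the figures quoted in this article (2370 vulnerabilities, 17 implied products, roughly 300 kernels). The per-product figure it prints is derived by division here and is not quoted from the NIST list, and the function name `vulnerabilities_per_unit` is just an illustrative name.

```python
# Figures taken from this article: 2370 reported vulnerabilities,
# an implied count of 17 products, and roughly 300 available kernels.
TOTAL_VULNERABILITIES = 2370
IMPLIED_PRODUCTS = 17
AVAILABLE_KERNELS = 300  # rough count from the article, not from NIST


def vulnerabilities_per_unit(total: int, units: int) -> float:
    """Average vulnerabilities per counted unit (product or kernel)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total / units


per_product = vulnerabilities_per_unit(TOTAL_VULNERABILITIES, IMPLIED_PRODUCTS)
per_kernel = vulnerabilities_per_unit(TOTAL_VULNERABILITIES, AVAILABLE_KERNELS)

print(f"Per product (17 units): {per_product:.1f}")
print(f"Per kernel (300 units): {per_kernel:.1f}")
```

The point of the sketch is that the per-unit figure depends entirely on what is chosen as the denominator, which is why the choice of 17 matters so much.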

The math that NIST uses is bizarre and unsupportable and appears to have been used to rig the results in favor of Microsoft versus Linux.

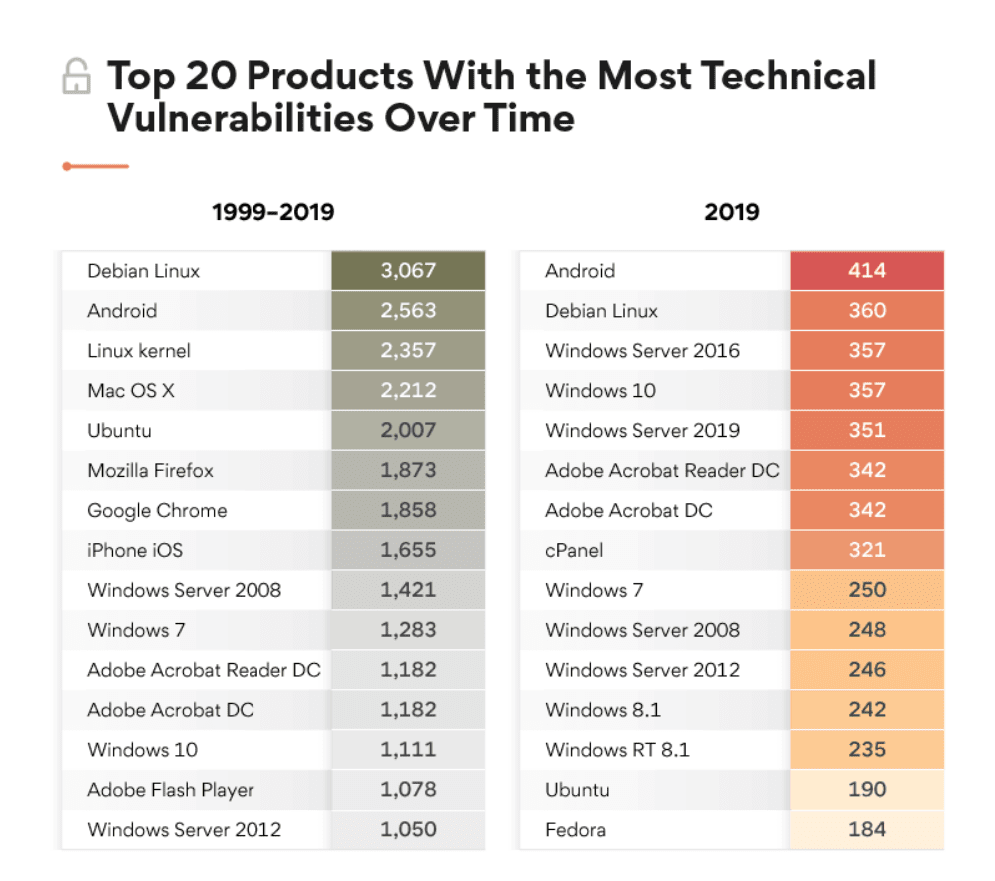

This list is again laughable. The claim that Windows has far fewer vulnerabilities than Debian cannot be true. Debian is known as a rock-solid and secure Linux distribution. But this leads to the next problem with how NIST has compared these products to one another.

Issue #3: The Durations of the Products Do Not Match

Debian is also one of the oldest Linux distributions, but it is compared against far younger products.

Let us review just a few of the items compared against one another.

- Debian was introduced in 1993

- Chrome was introduced in 2008

- Windows 10 was introduced in 2015

How can one compare the number of vulnerabilities if the products have been in existence for different periods of time? If we look at the comparison of Debian to Windows 10, we end up comparing a product that has been around for 27 years (this study by NIST was published in 2020, so we will measure from that date) to a product (Windows 10) that has been around for 5 years. Debian has been around 27/5 = 5.4x as long, yet NIST compares their vulnerabilities without adjusting for the duration of the product's life.
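A minimal sketch of the duration adjustment follows. It uses only the introduction years listed above and 2020 as the measurement year. The helper `vulnerabilities_per_year` is a hypothetical illustration of one simple way to normalize by product age, not a method NIST or anyone else is known to use, and no vulnerability counts are assumed.

```python
# Introduction years as listed in this article; 2020 is the study year.
STUDY_YEAR = 2020
RELEASE_YEARS = {"Debian": 1993, "Chrome": 2008, "Windows 10": 2015}


def product_age(name: str, as_of: int = STUDY_YEAR) -> int:
    """Years the product had existed as of the given year."""
    return as_of - RELEASE_YEARS[name]


def vulnerabilities_per_year(total: int, name: str, as_of: int = STUDY_YEAR) -> float:
    """Hypothetical normalization: total vulnerabilities divided by product age."""
    return total / product_age(name, as_of)


debian_age = product_age("Debian")
windows_age = product_age("Windows 10")
age_ratio = debian_age / windows_age

print(f"Debian age: {debian_age} years")
print(f"Windows 10 age: {windows_age} years")
print(f"Debian has existed {age_ratio:.1f}x as long")
```

Passing each product's lifetime vulnerability total to `vulnerabilities_per_year` would give a per-year rate, which is at least a like-for-like basis for comparison.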

Issue #4: Accounting for Reporting Differences Between Commercial Versus Open Source Vulnerabilities?

The Linux ecosystem is open source. This means that bugs and vulnerabilities are reported immediately, and the entire system is transparent. Commercial products do not work like this.

Therefore, any comparison that sets the bugs published by open source projects against those published by closed source vendors will end up counting bugs differently on each side. NIST does not mention this anywhere in their report and seems to think of the bug/vulnerability reporting methods of open source as being perfectly comparable to those of commercial software.

Linux YouTube Channels Critique the NIST Study

The NIST report received a big laugh from the Linux community.

And in this video as well. This YouTuber noticed that nearly all the articles covering the rigged NIST study were written virtually the same. They appear to have been written from a central, and likely Microsoft, talking point sheet or press release. Microsoft marketing would have paid IT media entities to publish the article based upon their release.

Who is Writing Reports for NIST?

This illustrates that NIST did not assign anyone to this study that knew a thing about either Linux or operating systems. NIST wanted to fake this study, and appears to have been directed by The Department of Commerce as to what Microsoft wanted the study to say, but NIST was unwilling to hire people that knew enough about Linux to create a competent fake. For example, if NIST had hired someone with Linux knowledge, they would have been able to create a far more realistic fake. One must have domain expertise to create a fake, just as a person must be an excellent painter to create a convincing fake of a Monet. Hiring a child with their first painting kit is not going to do the trick. You have to be able to fool a person who is familiar with Monet’s work.

What is The Department of Commerce?

The Department of Commerce is a lobby group for companies. The Department of Commerce is odd because it is technically part of the US government but should not be. NIST benefits from this association because it makes it seem like they are a “government lab.” However, the reality of NIST is not generally understood. The Department of Commerce’s mandate is to advocate for business interests. In this case, the business interest is Microsoft.

- But how can a supposed scientific entity like NIST function within a department whose role is to advocate for business interests?

- This makes no sense. Why not privatize The Department of Commerce and get it out of the government?

- Why do taxpayers have to pay for corporate advocacy?

- NIST produces less than worthless research, like this study.

NIST’s History of False Reports

NIST created false reports on the World Trade Centers. The beginning of these reports shows hundreds of people contributing to those reports. And yet, the report comes to impossible conclusions. Their reports on the collapse of the World Trade Center were as politically rigged as the 9/11 Commission Report. Both of these reports were targeted towards those with moderate to low mental functioning and were designed to create the false impression that the collapses had been investigated. NIST was always going to support the story of the George W Bush Administration. They were never going to investigate independently. One can make their own case for what they think happened on 9/11, but there is no way the work by NIST can be defended on technical grounds.

What is NIST?

NIST is a political fake research entity that works backward from the conclusion and then makes up the evidence to support the conclusion.

How Microsoft’s Mighty Marketing Engine Distributed the Fake NIST Report

Furthermore, after somehow compensating the Department of Commerce, Microsoft paid media outlets to get the story out after it was published. The evidence is that nearly all of the articles on this topic appear to have been written by the same author. There is no analysis of the study, just parroting of the conclusions.

All of that came before Monday, when we learned from The New York Times that Ross “threatened to fire top employees” at the National Oceanic and Atmospheric Administration if they did not back up Trump’s claim that Hurricane Dorian was headed for Alabama, when in fact was not. Birmingham’s National Weather Service officials were forced to issue a statement reassuring Alabamians that the storm was not coming their way, after fielding calls from people worried they that should evacuate. That prompted Trump to continue to insist he had been right about his Alabama claim, even using a Sharpie to ridiculously alter a Weather Service map to falsely show the state within the storm’s potential path. Obviously, people rely on National Weather Service reports to decide whether to stay or to evacuate during a major storm. But Ross’s involvement makes the story one layer more corrupt. – Foreign Policy

Conclusion

I found an article where Microsoft partnered with NIST to work on “computer security.” See the following quotation.

Microsoft will “soon” kick off an effort to help enterprise organizations better patch their software, with help from the National Institute of Standards and Technology (NIST).

So Microsoft, which has the worst history of security in enterprise software and deliberately pushed its browser into the kernel of Windows for monopolistic reasons to shut out Netscape, is partnering with NIST, which knows nothing about computer security, to improve security?

The best way to improve the nation’s security is to stop using Microsoft Windows. That would be step #1. The US Government could, for instance, begin migrating millions of Windows machines over to Linux and from Microsoft Office to open source alternatives like LibreOffice or WPS Office or FreeOffice (the last two are not open source but free).

These changes would improve the security of the US government overnight (in addition to saving large amounts of money). This would be a boon to the Linux community and lead to even more adoption of Linux in the private sector.

However, NIST would never be allowed to come to this conclusion as long as they are controlled by the corrupt Department of Commerce that has Microsoft executives over tea.

Does Anyone Care About NIST?

The US Government has real research entities that do cutting edge work. There are national laboratories like Los Alamos National Laboratory and Sandia National Laboratories. However, NIST is not one of these, as it is captured by corporate interests. Furthermore, NIST has virtually no thought leadership or footprint in information technology. In my career in IT and in research, I cannot recall a single time when anyone said:

We better check the NIST websites for what they think on this topic.

The US should have a US Government research entity that focuses on information technology, but NIST is not it. There are enormous opportunities to publish important work that would contradict the marketing drivel of software vendors and consulting firms. However, these interests would not allow such an independent entity. SAP would like everyone to get their information from The Hasso Plattner Institute (a real thing incidentally). Larry Ellison has yet to create his “institute,” but it can’t be far off. At this institute, you will learn that Oracle Cloud is the best cloud, and take a seminar on how to chisel your customers.

The Reality of Private Research

This exposure highlights a long-term pattern we have covered in the article How to Understand Why No Functioning Research Entities that Cover SAP: there are close to no functioning research entities that work in the IT space. All of what is called private research in the IT space is sponsored studies like the NIST study analyzed in this article. Normally Microsoft would go to Forrester or Gartner for this type of manufactured research. However, in this case, they went to NIST.