How to Get Started with Database Migration with AWS RDS and Google Cloud SQL

Executive Summary

- AWS and Google Cloud are offering great opportunities for database migration.

- Learn why cloud services are enabling databases.

Introduction

Migrating databases and applications to AWS or Google Cloud has numerous benefits that have already changed how IT is managed at a wide array of companies.

These benefits include the following:

- Reducing fixed costs

- Gaining economies of scale

- The ability to test services and to learn new things

- Increased flexibility

- Reducing lock-in

- Gaining access to new solutions

- Gaining access to more open source solutions

- Improved visibility into spend and the ability to leverage the strong innovation from AWS and Google Cloud.

The List Of Improvements

The list of improvements is quite extensive. Amazon RDS manages the work involved in setting up a relational database, from provisioning the infrastructure capacity you request to installing the database software. Once your database is up and running, Amazon RDS automates everyday administrative tasks such as performing backups and patching the software that powers your database. With optional Multi-AZ (multiple Availability Zone) deployments, Amazon RDS also manages synchronous data replication across Availability Zones with automatic failover. Using RDS is only one of the options for migrating an RDBMS to AWS. The other option is to run the database on EC2.

AWS describes the advantages and disadvantages of EC2 vs. RDS as follows.

“Choosing Between Amazon RDS and Amazon EC2 for Your Oracle Database: Both Amazon RDS and Amazon EC2 offer different advantages for running Oracle Database. Amazon RDS is easier to set up, manage, and maintain than running Oracle Database in Amazon EC2, and lets you focus on other tasks rather than the day-to-day administration of Oracle Database. Alternatively, running Oracle Database in Amazon EC2 gives you more control, flexibility, and choice. Depending on your application and your requirements, you might prefer one over the other. Many AWS customers use a combination of Amazon RDS and Amazon EC2 for their Oracle Database workloads.”

However, RDS is a fully managed database service, and it also provides significant economies of scale as AWS manages so many databases inside each RDS instance. But as AWS observes, RDS is not right for every usage, and we do not intend to give the impression in this chapter that it applies universally.
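The managed setup described in this section (provisioned capacity, automated backups, optional Multi-AZ replication) can be sketched as a request to the RDS API. This is a minimal illustration, assuming the boto3 SDK and valid AWS credentials. The instance name, instance class, storage size, and user name are hypothetical placeholders, not recommendations.

```python
def rds_instance_request(identifier, master_password, engine="postgres",
                         instance_class="db.t3.micro", storage_gib=20,
                         multi_az=True, backup_days=7):
    """Build keyword arguments for boto3's RDS create_db_instance call."""
    return {
        "DBInstanceIdentifier": identifier,    # hypothetical instance name
        "Engine": engine,                      # e.g. "postgres", "mysql", "mariadb"
        "DBInstanceClass": instance_class,     # compute capacity
        "AllocatedStorage": storage_gib,       # storage in GiB, sized separately from compute
        "MultiAZ": multi_az,                   # synchronous standby in another Availability Zone
        "BackupRetentionPeriod": backup_days,  # days of automated backups to keep
        "MasterUsername": "admin_user",        # placeholder
        "MasterUserPassword": master_password,
    }


def create_instance(params):
    """Send the request to AWS; needs boto3 and credentials, so it is not run here."""
    import boto3  # imported lazily so the sketch loads without the AWS SDK
    return boto3.client("rds").create_db_instance(**params)


params = rds_instance_request("demo-db", "not-a-real-password")
print(params["Engine"], params["MultiAZ"], params["BackupRetentionPeriod"])
```

Only the dictionary is built when the snippet runs. Calling `create_instance(params)` would actually provision, and bill for, an instance.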

On Innovation in AWS

On the topic of innovation, one thing that catches everyone off guard is that AWS, in particular, has been and will be adding so many features and capabilities. A challenge with AWS is that its rate of innovation is fast relative to the pace of most customers, so there’s a significant learning curve involved, and that learning curve keeps extending out to new things as soon as one “thing” is mastered. Many of us in technology have been told that we have to be dedicated to continual learning. However, AWS pushes the envelope of what is possible for a company to absorb.

As observed by AWS in their AWS Migration Whitepaper, the best way to migrate is incrementally. A significant reason for this is simply the learning curve to figure out AWS and how to best leverage the AWS services.

Massive and Immediate Scalability

Unlike on-premises databases, AWS offers virtually unlimited scalability regarding database size and the computational resources that the database consumes. One can have a large database with fewer computational resources (and less cost) or a smaller database with more computational resources. All of this depends upon the application’s particular need.

Getting Started with Backups

One easy way to get started with AWS or Google Cloud is backing up data to either one. Every company needs a remote backup, which makes backups a natural way to get the process started and begin building familiarity. AWS explains this in the following quotation.

“Organizations are looking for ways to reduce their physical data center infrastructure. A great way to start is by moving secondary or tertiary workloads, such as long-term file retention and backup and recovery operations, to the cloud. Also, organizations want to take advantage of the elasticity of cloud architectures and features to access and use their data in new on-demand ways that a traditional data center infrastructure can’t support.”

Long-term storage can go with AWS Glacier or Google Cloud Nearline.
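On the AWS side, one common way to move older backups into Glacier is an S3 lifecycle rule. The sketch below assumes the boto3 SDK and builds such a rule. The bucket name, the `backups/` prefix, and the 30-day threshold are illustrative assumptions and do not come from the text above.

```python
def glacier_lifecycle(prefix="backups/", days_until_archive=30):
    """Build an S3 lifecycle configuration that archives old objects to Glacier."""
    return {
        "Rules": [
            {
                "ID": "archive-old-backups",       # hypothetical rule name
                "Filter": {"Prefix": prefix},      # only objects under this prefix
                "Status": "Enabled",
                "Transitions": [
                    {"Days": days_until_archive, "StorageClass": "GLACIER"}
                ],
            }
        ]
    }


def apply_lifecycle(bucket, config):
    """Attach the rule to a bucket; needs boto3 and credentials, so it is not run here."""
    import boto3  # imported lazily so the sketch loads without the AWS SDK
    boto3.client("s3").put_bucket_lifecycle_configuration(
        Bucket=bucket, LifecycleConfiguration=config
    )


config = glacier_lifecycle()
print(config["Rules"][0]["Transitions"])
```

Google Cloud Storage offers a comparable lifecycle mechanism for moving objects to Nearline, which is not shown here.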

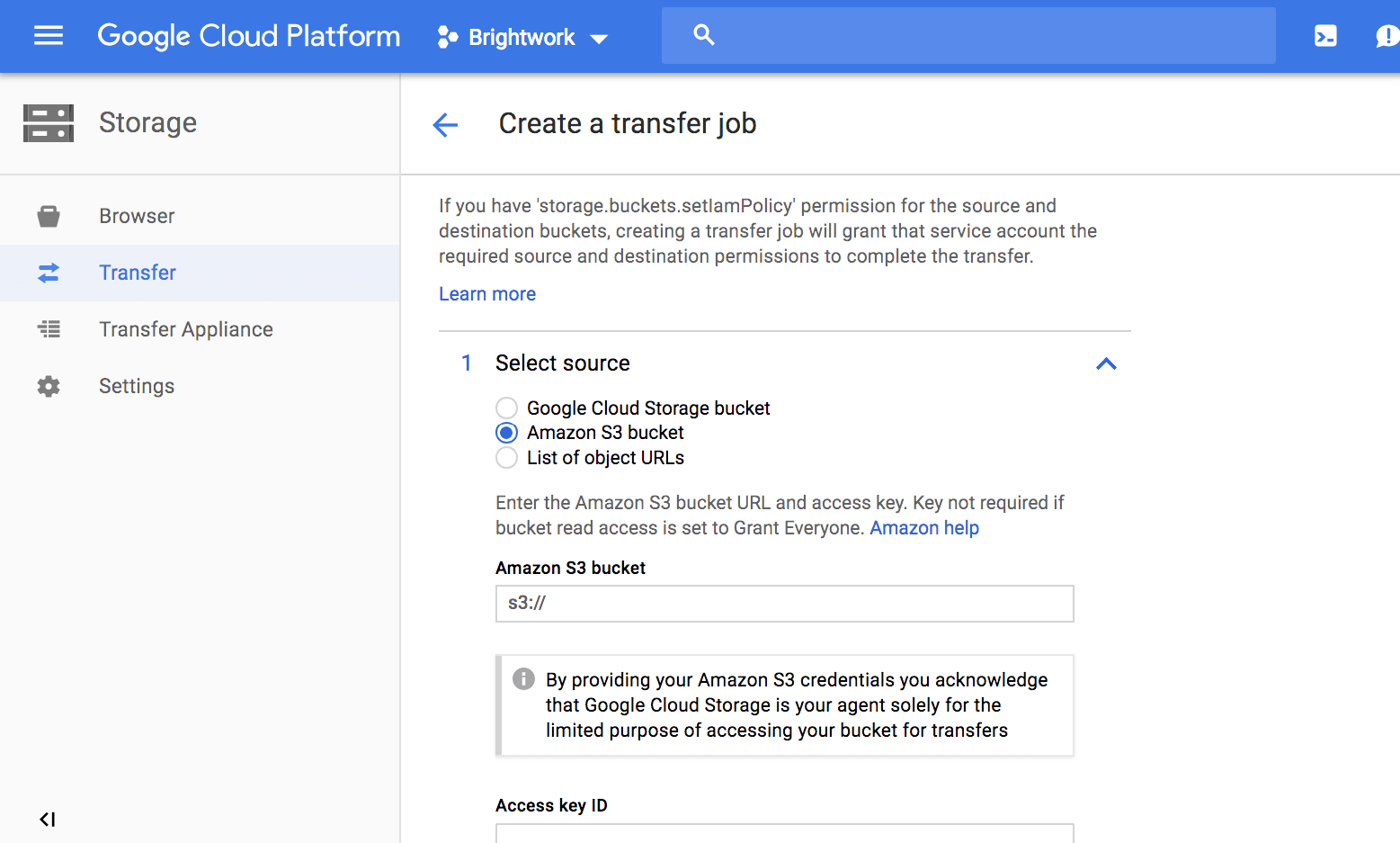

As we discussed earlier, one has a lot of flexibility when using both AWS and Google Cloud. Here we can begin by backing up our data on AWS, and if we choose, switch to Google Cloud.

Right after creation, data can be set up for migration.

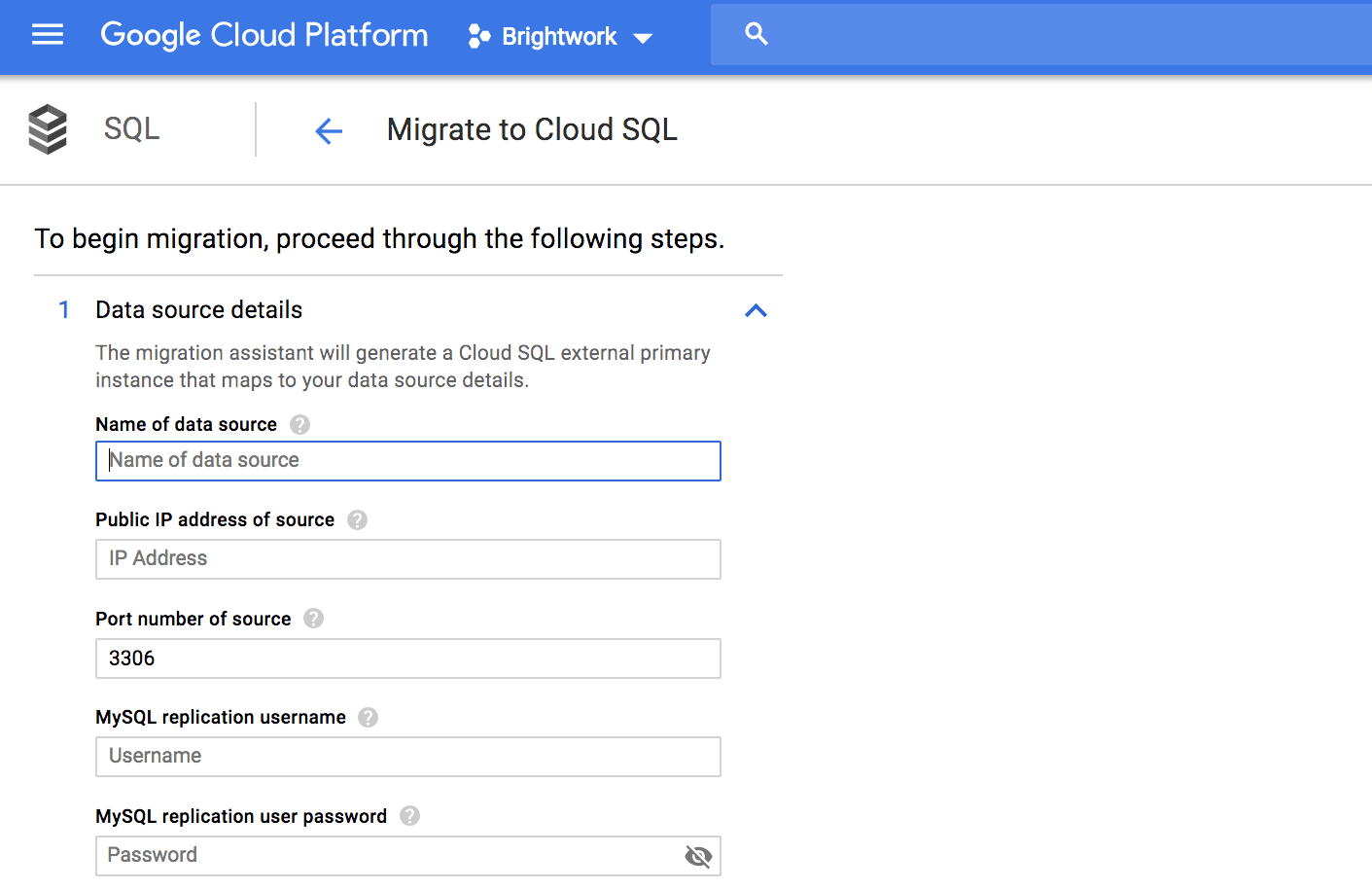

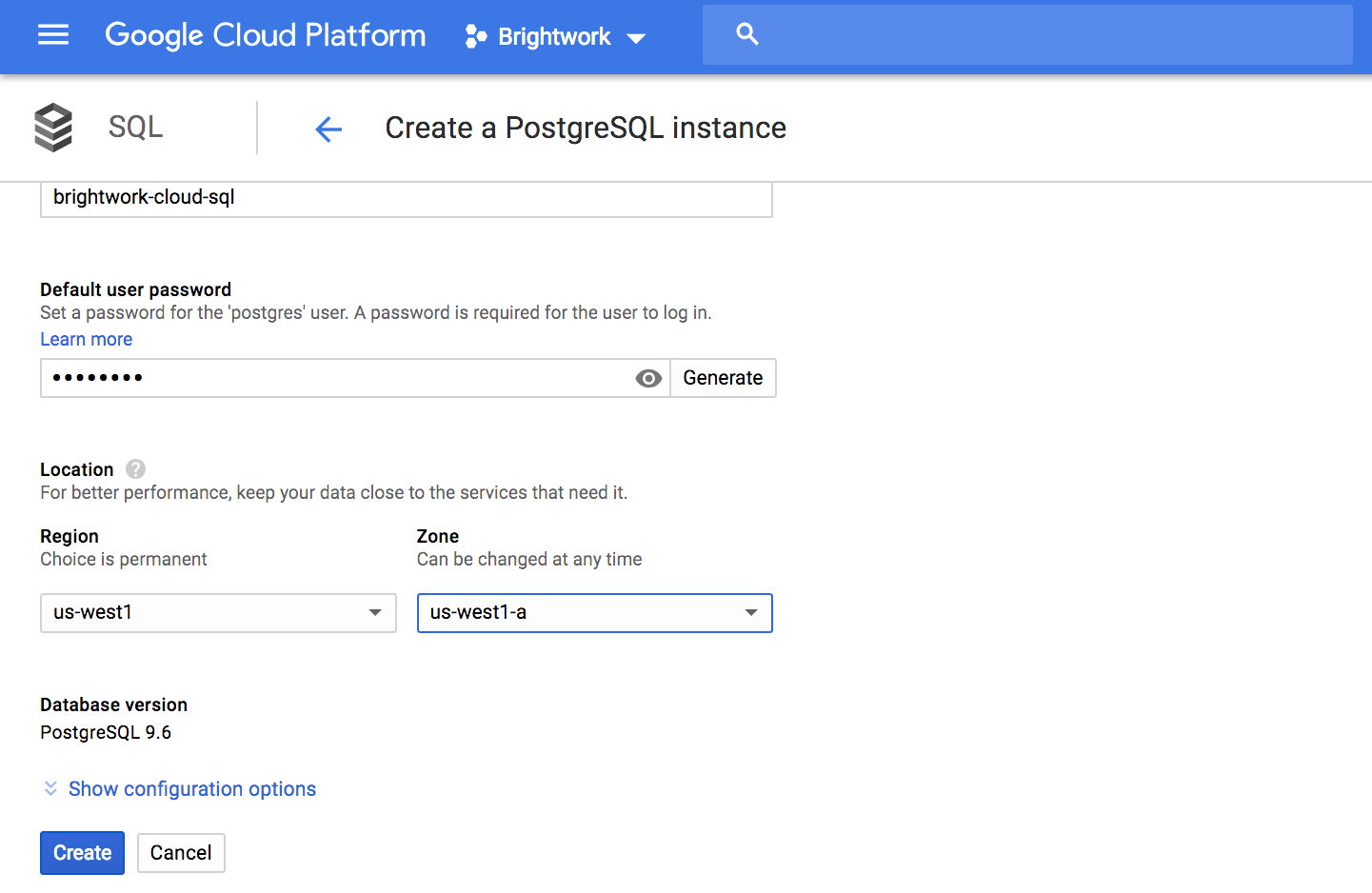

After backing up data, a second natural progression is to migrate some databases from an on-premises database to Google Cloud SQL or AWS RDS. PostgreSQL is a particular favorite of ours. Companies that migrated from the Oracle DB to PostgreSQL often never look back.

We are in a golden age of resource management. One can bring up instances, test them, and bring them down in ways that would have been unthinkable in the past.

Moving Past Stage One of Using AWS and Google Cloud

Backups and database migration are just the starting points for getting one’s feet wet with AWS and Google Cloud. From there, a testing-based migration of what makes sense starts with the low-hanging fruit and moves up to more advanced items. We also identify what has been low-hanging fruit from our exposure to AWS and Google Cloud and recommend starting places. It is so easy to start small with AWS and Google Cloud that the testing approach is the natural default position. The testing approach, and the low-cost, low-risk ability to perform testing, are among the areas that most strongly differentiate AWS and Google Cloud from SAP and Oracle. Testing shortens the time it takes to receive feedback, which changes the overall decision-making process in IT organizations: they can rely less on projections and sales information and more on trying out the various offerings.

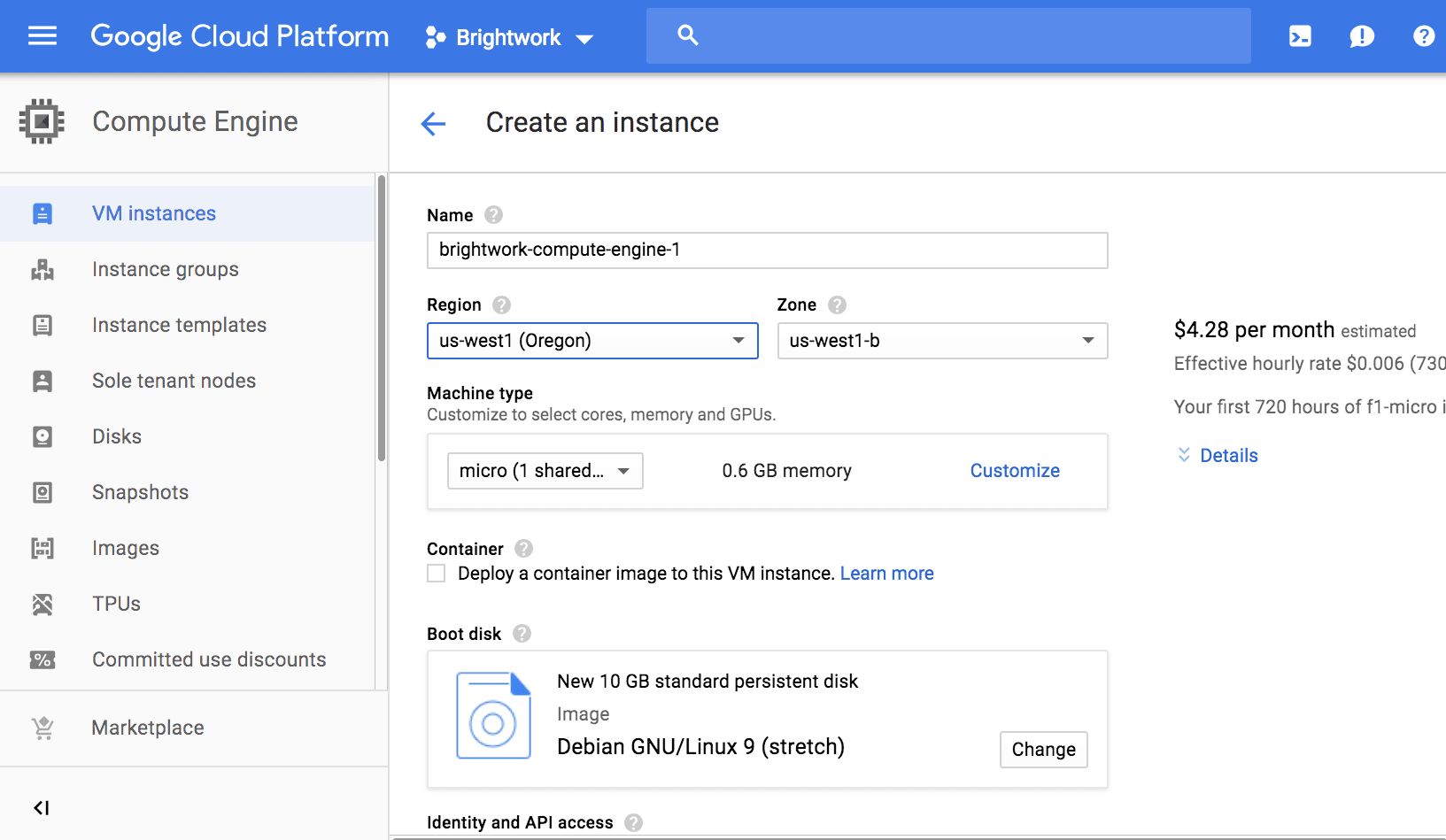

Virtual machines are a primary building block of the cloud. Notice we can even set up a micro virtual machine to create a small test environment.

A Breath of Fresh Air for Databases

Migrating databases to AWS will be a breath of fresh air for SAP and Oracle customers. SAP and Oracle base their business on lock-in, which perpetuates a mentality where old investments need to be protected (as they cannot easily be switched off), and they want you as a customer to buy specific things.

AWS RDS is offered for the following databases.

- Aurora: This is the only database in this list created and owned by AWS (and not open source). It shares many similarities with MySQL and is known for high scalability and native high availability.

- MySQL: The original open-source database continues to have high popularity, but due to being undermined by Oracle, it is losing to other open-source projects like MariaDB that have forked off of MySQL.

- Oracle: Support is offered for both versions 12 and 11, as so many Oracle customers are still on 11.

- MariaDB: MySQL “2.0” with a list of increasingly impressive features, MariaDB is used by both Wikipedia and Google (internally).

- SQL Server: Microsoft’s database is declining but gets high marks for ease of use and is one of the top four databases in general use.

- PostgreSQL: The performance heavyweight in the relational, open-source market. PostgreSQL is a problem for Oracle because it undercuts their performance argument. The number of use cases that require Oracle over PostgreSQL shrinks every year.

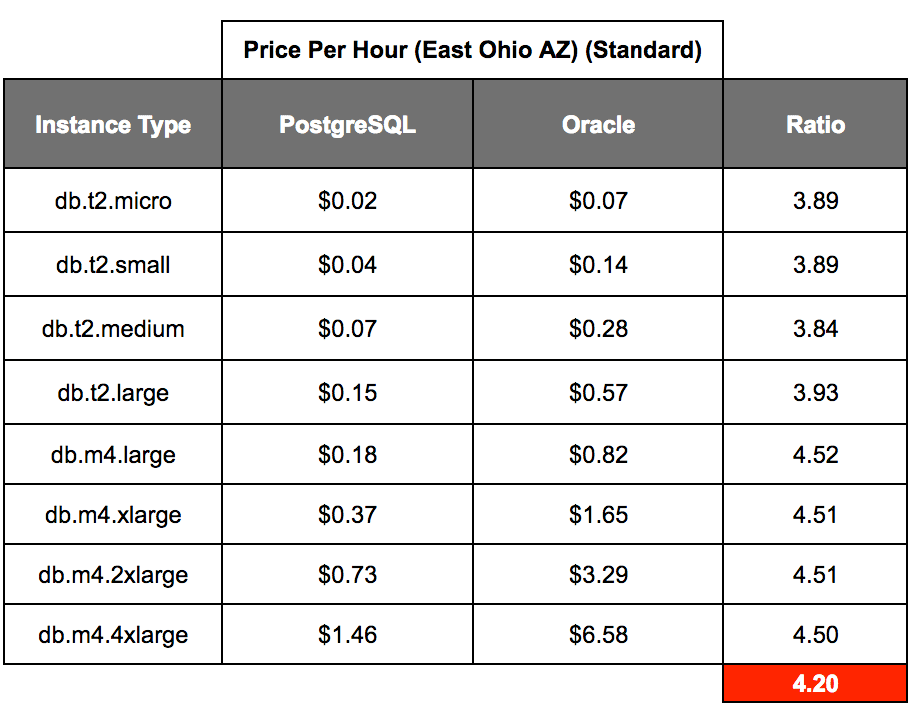

Of the different RDBMSs offered by RDS, Oracle is by far the most expensive. The graphic above compares the instances that were available for both PostgreSQL and the Oracle database. On average, Oracle costs over four times as much to run per hour on AWS as PostgreSQL. This analysis was performed against Standard Edition 1 of Oracle. Standard Edition 2 was roughly another 8% more than Standard Edition 1.

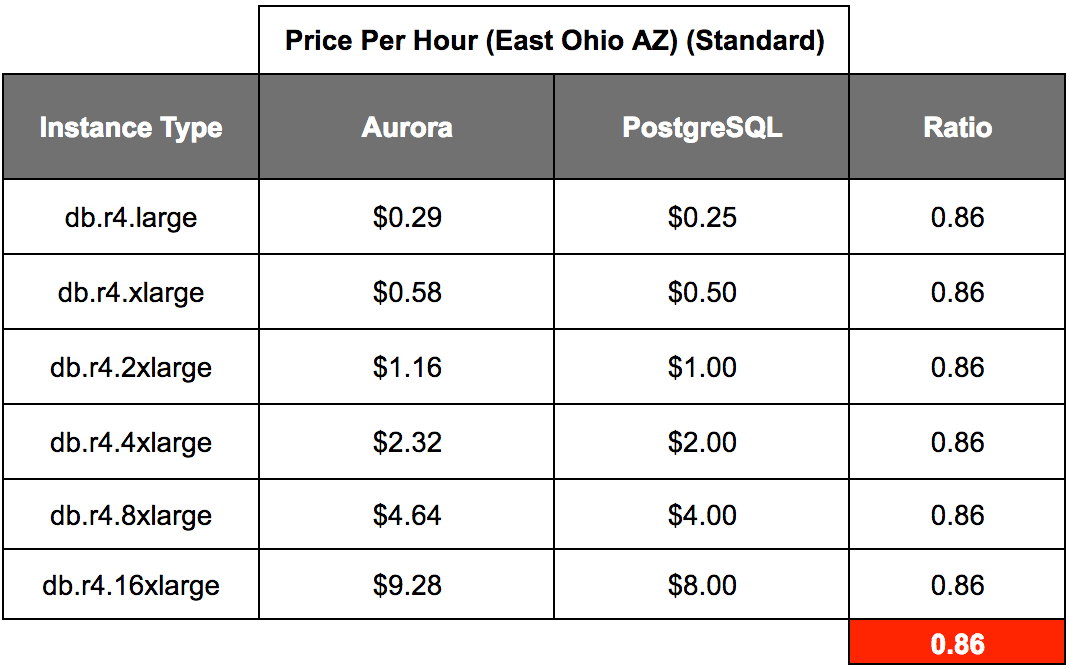

One open question is whether AWS charges more for products that it developed than for open-source options. Therefore, we decided to compare Aurora versus PostgreSQL.

It turns out that AWS does charge more for PostgreSQL than for Aurora, that is, more for the open-source database than for its homegrown one. The difference is around (14/86), or roughly 16%, more. This in part answers how much incentive AWS has to push their databases over other alternatives. The answer from a financial perspective is “not much.”
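The price comparison can be checked with a few lines of arithmetic. The sketch below takes the quoted figures at face value: a gap of 14 on a base of 86, in whatever units the graphic uses. That reading of the two numbers is our assumption, since the text does not state the units.

```python
def percent_more(higher, lower):
    """Return how much more `higher` costs than `lower`, as a percentage of `lower`."""
    return (higher - lower) / lower * 100


base_price = 86   # cheaper option, units as in the graphic (assumed)
price_gap = 14    # difference between the two options (assumed same units)

print(round(percent_more(base_price + price_gap, base_price), 1))  # 16.3
```

The same helper expresses the earlier Oracle comparison: a price four times as high is `percent_more(4, 1)`, or 300% more.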

AWS RDS is free for customers to try and provides 35 different instance configurations ranging from 2 CPUs to 128 CPUs, with network performance ranging from low bandwidth up to 20 MBPS. Scalability, of course, allows one to switch between the different configurations. This type of flexibility never existed before AWS introduced RDS, and as usual, AWS was the first to introduce such things.

The Oracle Autonomous Database

While we have focused on open-source databases, AWS also supports Oracle. Oracle to Oracle is the most straightforward migration. However, moving on from the Oracle database is where the greater long-term savings can be found, as AWS offers a managed database service.

This means that AWS now performs much of the work ordinarily performed by the DBA. Oracle had no practical answer for this, so they came up with an impractical one. They decided to develop a fallacious concept called the autonomous database.

With the autonomous database, Oracle proposed the following:

- Total automation of all database tasks would be possible because of machine learning and artificial intelligence (apparently, Oracle had been working in secret on these topics for decades but never discussed them with anyone).

- Oracle’s machine learning and artificial intelligence would take one of the highest-overhead databases and convert it to not only a low-maintenance database but a maintenance-free one. Oracle states that the autonomous database will instantly sense when the Oracle DB must be patched or upgraded and automatically perform the upgrade.

We analyzed the autonomous database in the Brightwork article How Real is the Oracle Autonomous Database? It was evident that Oracle’s Autonomous Database was/is fake. The reason for its introduction was to try to forestall the movement to AWS. Without AWS, Oracle would never have launched the “autonomous database.”

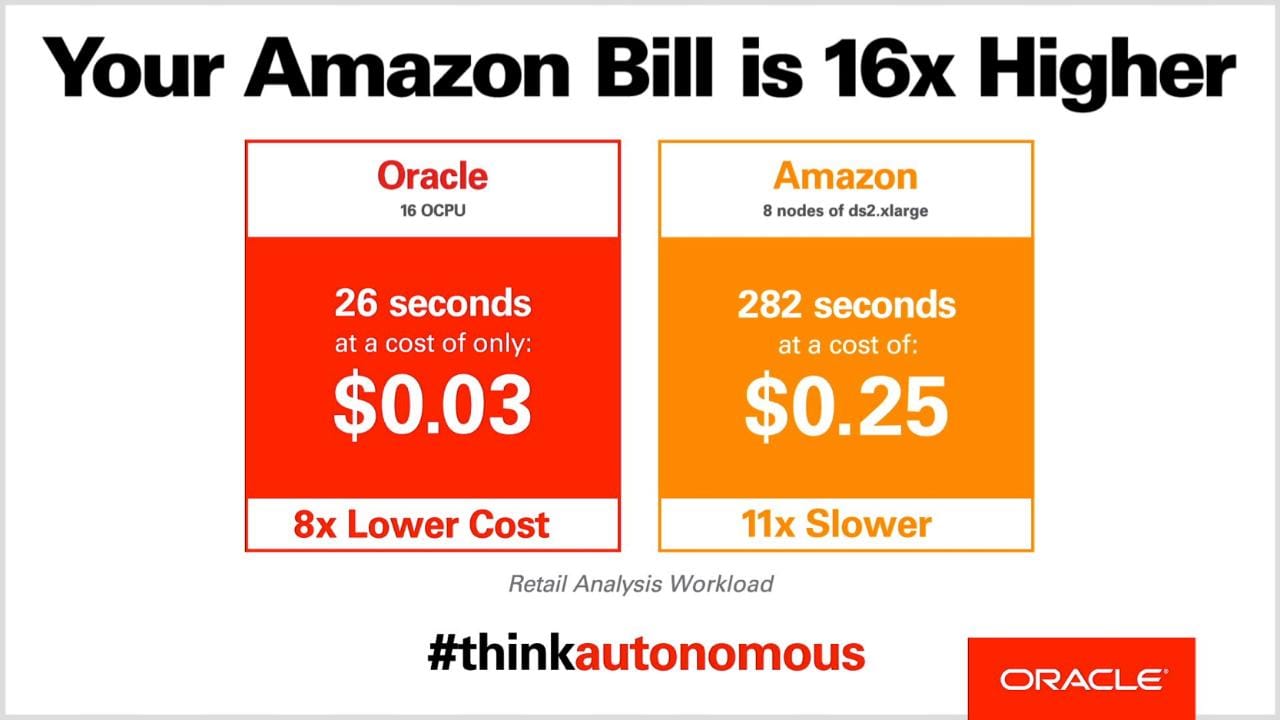

Oracle made a big push on its supposed autonomous database, but the story about this database fell apart as soon as the details were explained. Oracle increased the price of its database on AWS before this announcement of the autonomous database. AWS has an excellent reputation for database performance. The proposal of Oracle on both performance and cost is not believable. Secondly, the vast majority of customers will not want to use Oracle Cloud, which is required to run the “autonomous database.”

As shown earlier, our independent comparison showed that the Oracle database costs more than four times as much to run on AWS as PostgreSQL. So how could Oracle state that it would run databases at 1/8th of the cost of AWS? Dan Woods explains (#2).

“In January (of 2017), Oracle changed the fine print on some of its license terms, essentially doubling the cost of running Oracle software on AWS and Azure, while leaving the cost of running on the Oracle Cloud unchanged.

While the impact of this is obvious, making it cheaper to run the same Oracle footprint on Oracle’s cloud compared to AWS and Azure, it is not at all clear that Oracle is going to benefit in any significant way from making it more expensive to run on other clouds. Here’s a look at why Oracle may have done this and the likely impact. So then will this price change actually cause more people to use the Oracle cloud? For that to happen, someone would have to switch their entire cloud deployment from AWS or Azure to the Oracle cloud for the sake of the increased cost of one part of the infrastructure. Given that Oracle isn’t in the mix in most of the cloud deployments I’ve heard of, and that its cloud is far behind both AWS and Azure in terms of features, adoption, and ecosystem, it is likely that it would cost a huge amount more to move to the Oracle cloud than to stay with AWS or Azure, so no, this policy change isn’t going to cause a rush of Oracle cloud sales.

So is Oracle in effect giving up on competing with AWS and Azure databases on feature, function and price? Is the doubling of prices of its software the only way that Oracle can make more dough on the fastest growing clouds? Whatever this policy change is intended to be, it certainly isn’t a show of strength by Oracle. It seems more like a show of weakness and an attempt to grab a sliver of revenue that may be out there for a short while.”

Dan’s comments were published in Forbes on Mar 23, 2017. But this price change makes it easier to make the case that Oracle is lower in cost on Oracle Cloud than on AWS or Azure.

The Composition of the Autonomous Database

The Oracle autonomous database is comprised of the following items:

- Oracle Database 18c Enterprise Edition (with all database options and management packs included)

- Oracle Exadata X7-2 as the deployment platform. The X7-2 systems only dedicate OCPUs and a single PDB (Pluggable Database) to each cloud client. The memory, flash cache, and other resources on an Exadata system are not dedicated. Oracle will decide how many other clients share the PDB resources available within a single Exadata machine. Clients with strict SLAs for production workloads wouldn’t accept this multi-tenant (noisy neighbor) configuration even if they were located in the same city block as the Oracle DC.

- The Oracle Cloud automated database and database operations components (scripts, best practices, procedures).

Configuration Choices

Oracle offers two configuration choices for the Autonomous Database: enterprise and mission-critical. The 99.995% availability is only true for the mission-critical configurations, which cost 2X the monthly subscription fee. This increased cost is based on the requirement of a standby database that doubles infrastructure and storage resources.
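To put the 99.995% figure in concrete terms, an availability percentage can be converted into permitted downtime per year. This is a plain arithmetic sketch. It assumes a 365-day year and says nothing about how Oracle actually measures or excludes downtime under its SLA.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def allowed_downtime_minutes(availability_percent):
    """Minutes per year a service may be down and still meet the stated availability."""
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)


print(round(allowed_downtime_minutes(99.995), 2))  # about 26.28 minutes per year
```

By this calculation, 99.995% availability leaves roughly 26 minutes of downtime per year.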

The Exadata X7-2 systems only dedicate OCPUs and a single PDB (Pluggable Database) to each cloud client. The memory, flash cache, and other resources on an Exadata system are not dedicated. Oracle will decide how many other clients share the PDB resources available within a single Exadata machine. Clients with strict service level agreements for their production workloads have to ask themselves if this type of multitenant configuration is acceptable.

This is the sequence we predict with the autonomous database.

- The new “autonomous” database will run fine on small data sets, but it will become a severe problem when you get in the terabyte range.

- The autonomous database will perform poorly because it will take forever (and it does) to run statistics.

- It will be necessary to get a good Oracle DBA (a dying breed) to diagnose what is wrong.

- This DBA will determine that the statistics job is the culprit because it takes too long to gather statistics on terabytes of data.

- After the DBA does his handiwork, the machine learning algorithm will make poor decisions on database tuning because the Oracle cost-based optimizer’s database statistics are out of date. The statistics will be out of date because it takes too long to run them and the DBA made a workaround.

- The performance will become a serious problem.

- When asked why the statistics are out of date, they will find out the DBA began exporting and importing statistics to improve performance.

- They will blame the DBA and fire him.

- The customer will complain.

- Oracle will go back to square one.

- A new statistics machine learning package patch will come out, and the cycle will repeat itself.

The Basis for Predictions

Where do our predictions come from? From the experience that one of the authors, Ahmed Azmi, has obtained from years of working with Oracle. And there is another problem with the autonomous database. Oracle claims that the Autonomous Database uses machine learning to detect anomalies in usage patterns automatically. This means it has to have access to sufficient customer data to train a customer-specific threat model to protect each specific customer. Furthermore, there is a question of how to preset a rule that distinguishes a spike caused by an attack from a spike caused by seasonality.
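The difficulty of telling an attack spike from a seasonal spike can be shown with a toy example. This sketch is our own illustration and not a description of Oracle's method. The traffic numbers, the seven-day period, and both thresholds are invented for the purpose.

```python
from statistics import median


def fixed_threshold_alerts(series, limit):
    """Flag every point above one preset limit, regardless of when it occurs."""
    return [i for i, value in enumerate(series) if value > limit]


def seasonal_alerts(series, period, ratio):
    """Flag points that exceed `ratio` times the median for the same phase of the cycle."""
    alerts = []
    for i, value in enumerate(series):
        same_phase = series[i % period::period]  # e.g. every Sunday in the history
        if value > ratio * median(same_phase):
            alerts.append(i)
    return alerts


week = [100, 100, 100, 100, 100, 100, 200]  # invented load with a regular weekly peak
traffic = week * 4
traffic[16] = 180                           # invented spike on an ordinary day

print(fixed_threshold_alerts(traffic, 150))  # [6, 13, 16, 20, 27]
print(seasonal_alerts(traffic, 7, 1.5))      # [16]
```

The preset rule raises an alarm on every weekly peak as well as on the injected spike. The seasonal comparison isolates the spike, but only because it had four weeks of this customer's history to learn from, which is the data-access concern raised above.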

There are common scenarios that show that using the Oracle database from the Oracle Cloud rather than AWS is the wrong decision. Oracle offers a well-regarded, if overpriced and high-overhead, database, although how high the overhead is for the Oracle database is often understated.

“Even in areas where Oracle likes to trumpet the richness of functionality it offers, like Oracle HA, the reality is that much of the “richness” is actually external to the database itself. You “have to add a ton of stuff outside of the database [to make it work] for replication, failover, monitoring, etc,” Keep says. Of course, this being Oracle, each of these add-ons is sold separately, resulting in a fat price tag and a seriously complex system to manage. Even worse for Oracle, the only way for a developer to get access to such Oracle extras is on the Oracle cloud, which basically no one wants to use.”

But the Oracle Cloud is an entirely different matter. It is so insubstantial that there is no reason to use it. And the autonomous database only “works” if it is run in the Oracle Cloud. This, of course, makes whether the database is autonomous impossible to verify. If it were autonomous, it could be run either on-premises or in the Oracle Cloud, but obviously, it can’t.

Many companies that have the Oracle database have to stay with it.

And common reasons are the following:

- Fear of migrating and the potential risk of downtime.

- Stored procedures written for the Oracle DB.

- Contract lock-in

- Compatibility issues with applications.

And as noted by Silvia Doomra:

“If you’re using a packaged software application whose vendor does not certify on PostgreSQL, migrating is probably a non-starter.”