How to Understand The Reasons for HANA’s High CPU Consumption

Executive Summary

- HANA has high CPU consumption due to HANA’s design.

- The CPU consumption is explained by SAP, but we review whether the explanation makes sense.

Introduction

We have covered the issues with SAP HANA that SAP, SAP consulting firms, and IT media censor. Because this information is censored, many SAP customers have been hit with surprises when implementing HANA. SAP has offered an excuse for HANA’s CPU consumption, which is just a method of gaslighting its customers. You will learn about HANA’s CPU consumption or overconsumption, the reasons for this, and why SAP’s explanation makes no sense.

Our References for This Article

If you want to see our references for this article and other related Brightwork articles, see this link.

Notice of Lack of Financial Bias: We have no financial ties to SAP or any other entity mentioned in this article.

- This is published by a research entity, not some lowbrow entity that is part of the SAP ecosystem.

- Second, no one paid for this article to be written, and it is not pretending to inform you while being rigged to sell you software or consulting services. Unlike nearly every other article you will find from Google on this topic, it has had no input from any company's marketing or sales department. As you are reading this article, consider how rare this is. The vast majority of information on the Internet on SAP is provided by SAP, whose material is filled with false claims, and by sleazy consulting companies and SAP consultants who will tell any lie for personal benefit. Furthermore, SAP pays off all IT analysts -- who have the same concern for accuracy as SAP. Not one of these entities will disclose their pro-SAP financial bias to their readers.

HANA CPU Overconsumption

A second major issue besides memory overconsumption with HANA is CPU consumption.

HANA’s design is to load data into memory even when that data is not planned to be used in the immediate future. SAP has backed away from its somewhat unrealistic position on “loading everything into memory,” but HANA still loads a lot into memory.

The reason for the CPU overconsumption is that this is how a server reacts when so much data is loaded into memory: the CPU spikes. This is also why CPU monitoring, along with memory monitoring, is considered so necessary for effectively using HANA. At the same time, this is generally not an issue with competing databases from Oracle, IBM, or others.
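To make the monitoring point concrete, here is a minimal sketch of how an administrator might separate brief CPU spikes from sustained saturation in a series of utilization readings. The function name, the 95% threshold, and the minimum run length are our own illustrative assumptions; they are not SAP recommendations and are not part of any HANA tooling.

```python
from typing import List, Sequence, Tuple


def find_sustained_saturation(
    samples: Sequence[float],
    threshold: float = 95.0,   # assumed cutoff, in percent
    min_consecutive: int = 3,  # assumed number of readings that counts as "sustained"
) -> List[Tuple[int, int]]:
    """Return (start, end) index pairs of runs where CPU stays at or above threshold."""
    runs: List[Tuple[int, int]] = []
    run_start = None
    for i, value in enumerate(samples):
        if value >= threshold:
            # Open a new run if we are not already inside one.
            if run_start is None:
                run_start = i
        else:
            # The run just ended; keep it only if it lasted long enough.
            if run_start is not None and i - run_start >= min_consecutive:
                runs.append((run_start, i - 1))
            run_start = None
    # Handle a run that is still open at the end of the series.
    if run_start is not None and len(samples) - run_start >= min_consecutive:
        runs.append((run_start, len(samples) - 1))
    return runs


if __name__ == "__main__":
    readings = [40, 97, 98, 50, 99, 99, 100, 96, 30, 99]
    print(find_sustained_saturation(readings))
```

A short spike is ignored, while a run of consecutive high readings is reported. The right threshold and run length depend on the workload and the sampling interval.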

SAP’s Explanation for Excessive CPU Utilization by HANA

SAP offers a peculiar explanation for CPU utilization.

“Note that a proper CPU utilization is actually desired behavior for SAP HANA, so this should be nothing to worry about unless the CPU becomes the bottleneck. SAP HANA is optimized to consume all memory and CPU available. More concretely, the software will parallelize queries as much as possible to provide optimal performance. So if the CPU usage is near 100% for query execution, it does not always mean there is an issue. It also does not automatically indicate a performance issue”

Why SAP’s Statement Does Not Make Sense

This entire statement is unusual, and it does not explain several issues.

Why Does HANA Time Out if the CPU Is Actually Being Optimized?

Timing out is an example of HANA’s inability to manage and throttle resource usage. Timing out requires manual intervention to reset the server. If a human is needed to intervene or the database ceases to function, resources are not being optimized. We receive frequent reports from some companies that their HANA server needs to be reset multiple times a week.

Managing and throttling resource usage is supposed to be a standard capability that comes with any database one purchases. Free open-source databases do not have the problem that HANA has.

If an application or database continually consumes all resources, the likelihood of timeouts increases. SAP’s statement also presents a false construct that HANA has optimized CPU usage, which is incorrect. It seeks to present what is a bug in HANA as a design feature.

Why HANA Leaves A High Percentage of Hardware Unaddressed

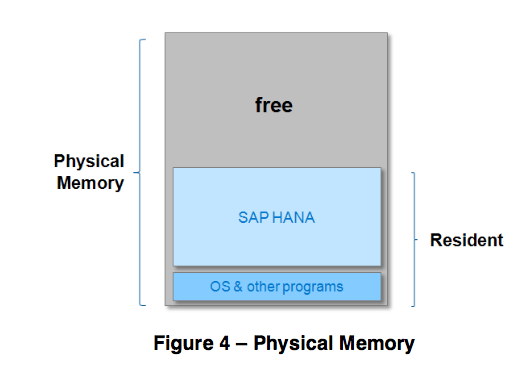

Benchmarking investigations into HANA’s utilization of hardware indicate clearly that HANA is not addressing all of the hardware that it runs on. While we don’t have both related items evaluated on the same benchmark, it seems probable that HANA is timing out without addressing all the hardware. Customers who purchase the hardware specification recommended by SAP often do not know that HANA leaves so much hardware unaddressed.

The Overall Misdirection of SAP’s Explanation

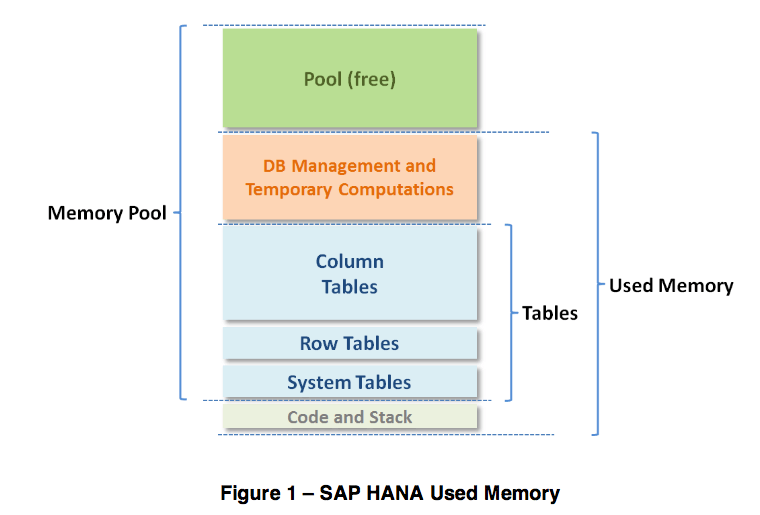

SAP’s paragraph seems to attempt to explain away HANA’s consumption of hardware resources, which should concern administrators. The statement is also inconsistent with other explanations about HANA’s memory use, as seen in the accompanying SAP graphic.

Notice the pool of free memory.

This is also contradicted by the following statement.

“As mentioned, SAP HANA pre-allocates and manages its own memory pool, used for storing in-memory tables, for thread stacks, and for temporary results and other system data structures. When more memory is required for table growth or temporary computations, the SAP HANA memory manager obtains it from the pool. When the pool cannot satisfy the request, the memory manager will increase the pool size by requesting more memory from the operating system, up to a predefined Allocation Limit. By default, the allocation limit is set to 90% of the first 64 GB of physical memory on the host plus 97% of each further GB. You can see the allocation limit on the Overview tab of the Administration perspective of the SAP HANA studio, or view it with SQL. This can be reviewed by the following SQL statement:

select HOST, round(ALLOCATION_LIMIT/(1024*1024*1024), 2) as "Allocation Limit GB" from PUBLIC.M_HOST_RESOURCE_UTILIZATION”
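The default allocation limit described in the quotation is simple arithmetic, so it can be sketched in a few lines. The function below is our own illustration of the quoted rule (90% of the first 64 GB plus 97% of each further GB). The function name is hypothetical, and the result is an estimate of the default only, not a value read from a HANA system.

```python
def default_allocation_limit_gb(physical_memory_gb: float) -> float:
    """Estimate the default allocation limit from the rule quoted above."""
    if physical_memory_gb < 0:
        raise ValueError("physical memory cannot be negative")
    # 90% applies to the first 64 GB of physical memory.
    first_tier_gb = min(physical_memory_gb, 64.0)
    # 97% applies to every GB beyond the first 64 GB.
    remaining_gb = max(physical_memory_gb - 64.0, 0.0)
    return 0.90 * first_tier_gb + 0.97 * remaining_gb


if __name__ == "__main__":
    for host_gb in (64, 256, 1024):
        limit = default_allocation_limit_gb(host_gb)
        print(f"{host_gb} GB host: limit about {limit:.2f} GB "
              f"({limit / host_gb:.1%} of physical memory)")
```

On a 256 GB host, for example, the rule gives 57.6 GB plus 186.24 GB, or 243.84 GB, which is roughly 95% of physical memory. The larger the host, the closer the default limit gets to 97%.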

Introduction to HANA Development and Test Environments

Hasso Plattner has routinely discussed how HANA simplifies environments. However, the hardware complexity imposed by HANA is overwhelming to many IT departments. This means that to get the same performance as other databases, HANA requires far more hardware and far more hardware maintenance.

Development and testing environments are always critical, but the issue is particularly important with HANA. The following quotation explains how frequently HANA changes.

“Taking into account, that SAP HANA is a central infrastructure component, which faced dozens of Support Packages, Patches & Builds within the last two years, and is expected to receive more updates with the same frequency on a mid-term perspective, there is a strong need to ensure testing of SAP HANA after each change.”

The Complications of So Many Clients and Servers

HANA uses a combination of clients and servers that must work in unison for HANA to work. This brings complications, i.e., a higher testing overhead whenever any one component changes. SAP proposes a process for handling test cases to account for these servers.

“A key challenge with SAP HANA is the fact, which the SAP HANA Server, user clients and application servers are a complex construct of different engines that work in concert. Therefore, SAP HANA Server, corresponding user clients and applications servers need to be tested after each technical upgrade of any of those entities. To do so in an efficient manner, we propose the steps outlined in the subsequent chapters.”

Leveraging AWS

HANA development environments can be acquired through AWS at reasonable rates. The advantage is that, because of AWS’s elastic offering, volume testing can be performed on AWS’s hardware without having to purchase the hardware. Moreover, the HANA AWS instance can be downscaled after testing is complete. The HANA One offering allows the HANA licenses to be used on demand. This is a significant value-add, as sizing HANA has proven very tricky.

Conclusion

HANA’s design problems have continued for years because the database originally had its design goals/parameters set by Hasso Plattner, who was not qualified to design a database. Since the beginnings of HANA, people lower down the totem pole have been required to continue trying to support Hasso’s original “vision.”