How to Understand The Design of Mainframes

Executive Summary

- Mainframes are engineered systems designed to run at high utilization without virtualization.

- Mainframes are designed to meet four distinct design objectives.

Introduction

To understand why AWS and Google Cloud can attain such advantages over on-premises servers, it is necessary to comprehend mainframes at a basic level. The material in this section is familiar to anyone who works with mainframes, but it is generally not well understood outside the mainframe industry. Most readers of this book will not have a background in mainframes, which is why we include this brief overview.

Let us elaborate on the most critical differences in a little detail.

Redundancy/Stability

For anyone coming from a server mindset, the stability, redundancy, and longevity of mainframes are mind-boggling. Quotes like the following are just not found outside of mainframes.

“Any mainframe computer technician will tell you, enthusiastically, how remarkable the technology is despite its age. Those old IBMs have triple- and quadruple hardware failover mechanisms, several different electrical inputs for backup power, end-to-end encryption, and layers of advanced virtualization. They are built to last 40+ years at 100 percent uptime due to those redundancy features.”

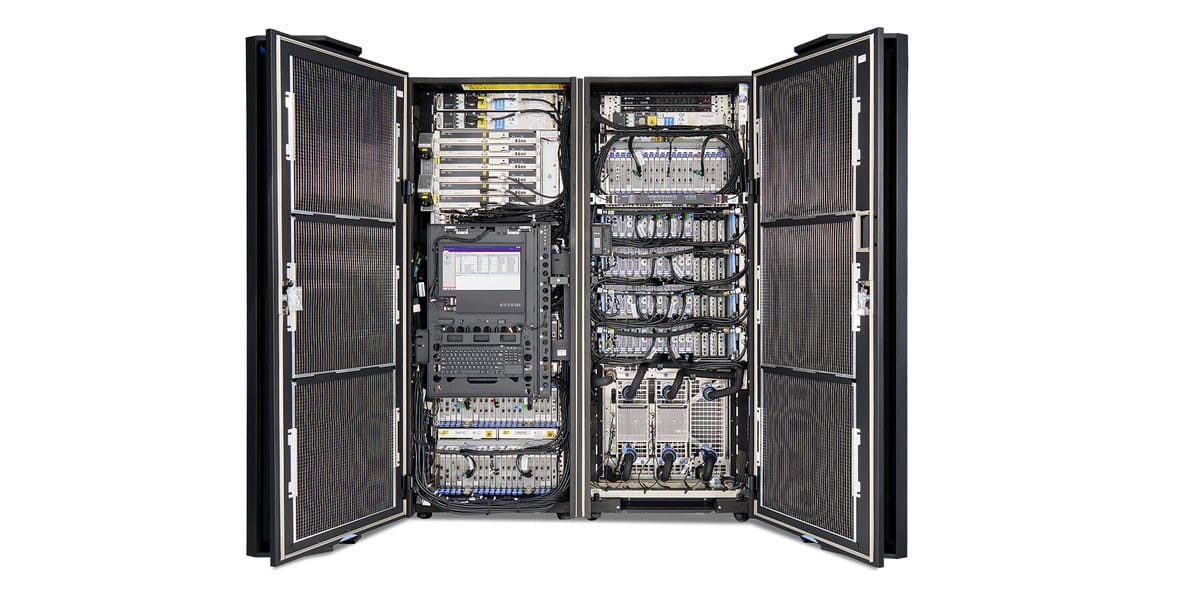

If one takes the time to investigate a modern mainframe’s interior, it begins to look like a miniaturized server farm. However, once you get past the high-level similarities, there are many differences in the details. Mainframes use proprietary hardware, and that hardware performs functions that servers handle in software. Mainframes contain redundant components and take the opposite approach to component failure from an AWS or Google Cloud data center: they are built so that components rarely fail, rather than so that cheap components can be routinely replaced. Mainframes are far smaller and don’t scale as easily as racks of commodity servers (although multiple mainframes can be connected into a cluster). However, mainframes are efficient by design, which means they do not need to scale out to reach very high utilization levels. Their hardware design makes them less dependent upon software for utilization.

Much of the IT media coverage of mainframe longevity casts reliability through a skewed lens. The following quotation, from coverage of a 2016 Government Accountability Office (GAO) report on the state of US Federal Government IT, is a prime example.

“In a soon to be released report, GAO found, “federal legacy IT investments are becoming increasingly obsolete” with outdated software languages and hardware parts that are not supported. In some cases, GAO found agencies are using systems that have components at least 50 years old.”

Indeed, failing to invest properly in one’s IT systems is not a good thing, but since when is it bad that an item has been in functional service and adding value for 50 years? Could that not be construed as good value for taxpayers? Not only are the systems 50 years old, but the GAO found that many of the individual components are 50 years old!

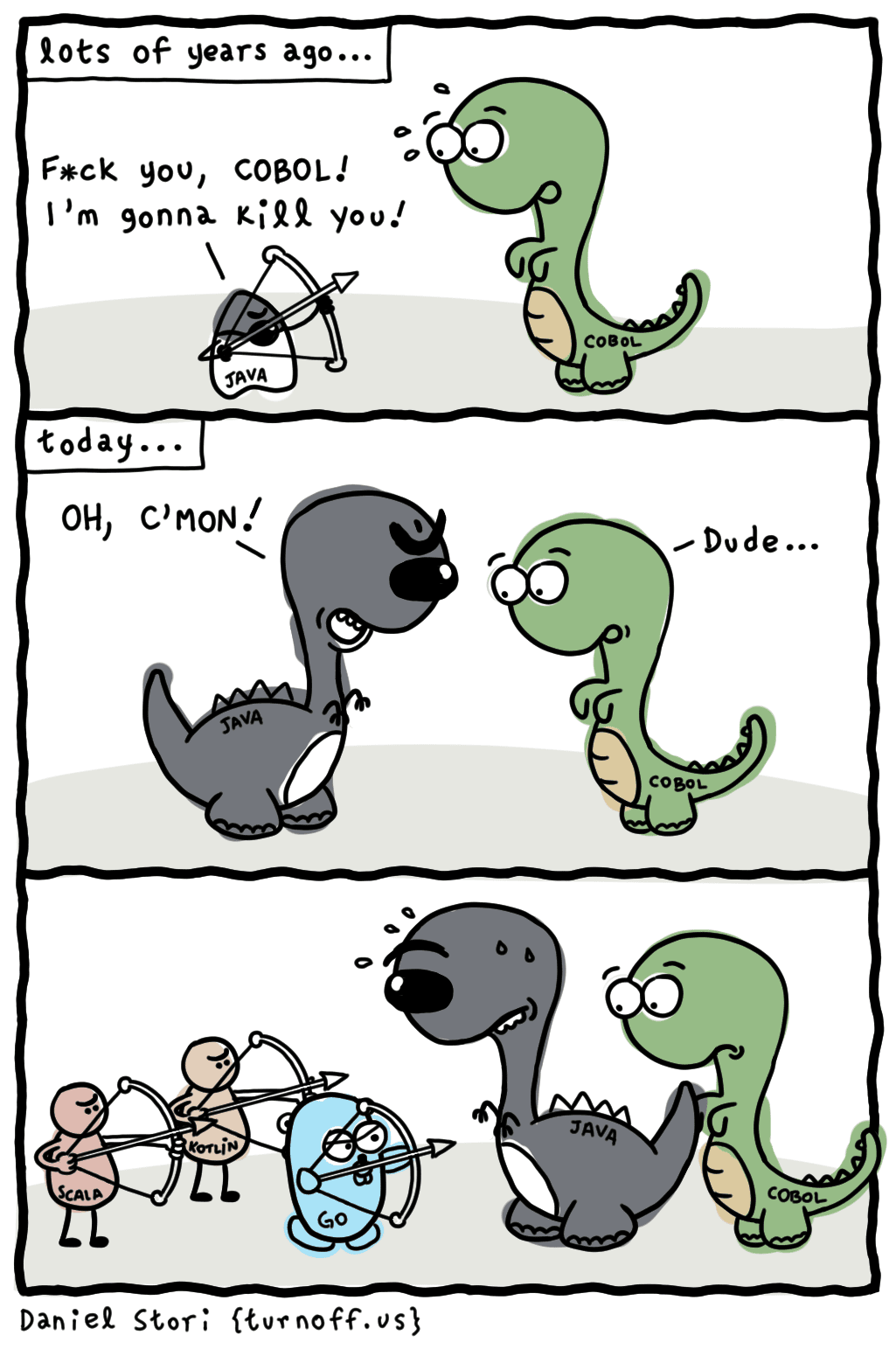

Furthermore, the framing of the related items that are “out of date” is also telling. One of the out-of-date languages the GAO referred to was COBOL, which is used by the Department of Veterans Affairs, the Social Security Administration, and the Department of Justice. COBOL is undoubtedly old, but it is still a highly respected language, with an estimated 200 billion lines of code in operation globally.

Languages tend to turn over relatively quickly in software. As the cartoon correctly points out, not that many years ago Java was considered leading edge. This is not to say that languages won’t eventually be phased out, but many of the issues with old languages come down to training. The US Federal Government has little excuse to say it cannot find COBOL programmers when it can relatively easily implement COBOL training. It has every incentive to do so, as it has systems written in COBOL to maintain. If COBOL exists as it did previously, and there are tons of COBOL training materials, what exactly is the problem? Secondly, many of the systems custom-built for the government have no reasonable equivalent available from commercial software vendors.

COBOL skills are declining, but they can be taught, and COBOL programs can be maintained quite well by properly trained staff. Instead of maintaining the code, the plan was to replace parts of the three agencies’ systems. A second out-of-date language was assembly language, which the Department of the Treasury relies upon, and that does seem to be something to update.

Indeed, 50-year-old computer parts and components will often be unsupported and nearing the end of their life, but isn’t this something to celebrate (while looking for eventual replacements, of course)?

Mainframes Versus the Typical Server Farm

Far more hardware design thought has gone into mainframes than into the typical server farm. Server farms replicate nearly the same server many times over, with everything connected, creating, as we said previously, what amounts to one giant computer. In the mainframe, the components are of higher quality and fail less frequently. The initial cost of a mainframe is far higher than that of the commodity servers used by AWS and Google Cloud, but the overall TCO, which includes the cost of maintenance, is better than that of on-premises servers. Mainframes require far less maintenance than batches of servers, and they are considered “turnkey” solutions. It is also regarded as typical for mainframes to stay up for years without being rebooted. This is not the case with servers. The comparison in reliability is explained in the following quotation.

“One of the less reliable vendors in the mainframe replacement business was quite keen on announcing that it had achieved “5 nines availability, apart from planned downtime” – which turned out to be rather different to what 5 nines availability meant in the mainframe world, where you could change operating systems without bringing the system down, you didn’t need to patch the operating system every week and unplanned downtime of a few minutes a year worried your vendor.”
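To put “5 nines” into concrete terms, the following minimal sketch converts an availability expressed in nines into a yearly downtime budget. The function name and the choice of a 365-day year are our assumptions for illustration; the sketch only does the arithmetic and says nothing about how any vendor measures availability.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, assuming a non-leap year


def allowed_downtime_minutes(nines):
    """Return the yearly downtime budget, in minutes, for an availability of N nines."""
    unavailability = 10 ** (-nines)  # e.g. 5 nines -> 0.00001
    return MINUTES_PER_YEAR * unavailability


for nines in (3, 4, 5):
    print(f"{nines} nines: {allowed_downtime_minutes(nines):.2f} minutes per year")
```

Under these assumptions, five nines leaves a budget of a little over five minutes per year, which is consistent with the quotation’s “unplanned downtime of a few minutes a year.” Excluding planned downtime from the calculation, as the vendor in the quotation did, makes the same figure far easier to reach.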

On-premises servers are purchased not as turnkey systems but more as a kit. They must be assembled with other hardware components before they form a working system. They must have virtualization and other software to run even at low utilization levels. Mainframes, on the other hand, can be plugged into a wall and into communications connections and be ready to use. The high quality of the mainframe components means that they naturally stay up without adjustment for long periods. This is the opposite of server farms, where lower-quality but far less expensive components are replaced as a matter of routine. The reliability of mainframes is evidenced by companies putting their most essential workloads on them. Standard practice in many companies is to have just two mainframes, each at a different location, with the second serving as the backup.

Overall, because of their design, mainframes have a mean time between failures measured in decades. Yet their low maintenance, their high availability, and the examples of mainframes doing their jobs for 50 years are a bit unstimulating for modern IT sensibilities, which look for far sexier items on which to focus attention.

Input/Output (I/O)

Servers use the CPU to manage I/O, which means they apply a general-purpose processor to the task. Mainframes have specific hardware that focuses entirely on I/O (called peripheral processors, channel processors, or I/O subsystems) and removes the I/O load from the CPU. This is like a computer with a dedicated video card versus one that handles video processing on the mainboard. Mainframes are therefore more specialized and provide dedicated processors for different tasks. This is a significant reason that CPU utilization on mainframes is so much higher than on servers. I/O turns out to be the biggest bottleneck on modern servers, limiting their utilization, which is something mainframe designers knew decades ago when they designed their mainframes. IBM’s I/O Control Program is dedicated only to managing the I/O for the mainframe.
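The idea of handing I/O to a dedicated processor can be illustrated, very loosely, in software. The sketch below is only an analogy: a worker thread stands in for a channel processor, and the main thread stands in for the CPU, which hands off each request and immediately carries on with application work. All names (`channel_processor`, `run_workload`, the request labels) are hypothetical, and nothing here reflects how mainframe channel hardware is actually implemented.

```python
import queue
import threading


def channel_processor(requests, completed):
    """Stand-in for a dedicated I/O processor: drains requests on its own thread."""
    while True:
        request = requests.get()
        if request is None:  # sentinel value: no more work is coming
            break
        # Pretend to perform the I/O; real hardware would move data to or from a device.
        completed.append(f"done:{request}")


def run_workload(io_requests):
    """Hand each I/O request to the worker while the caller keeps computing."""
    requests = queue.Queue()
    completed = []
    worker = threading.Thread(target=channel_processor, args=(requests, completed))
    worker.start()

    total = 0
    for i, request in enumerate(io_requests):
        requests.put(request)  # hand the I/O off and return immediately
        total += i * i         # the "CPU" continues with application work
    requests.put(None)
    worker.join()              # wait until every handed-off request has finished
    return total, completed
```

Calling `run_workload(["read-a", "write-b", "read-c"])` returns the computed total together with the completed requests in the order they were submitted, since a single worker drains a first-in, first-out queue.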

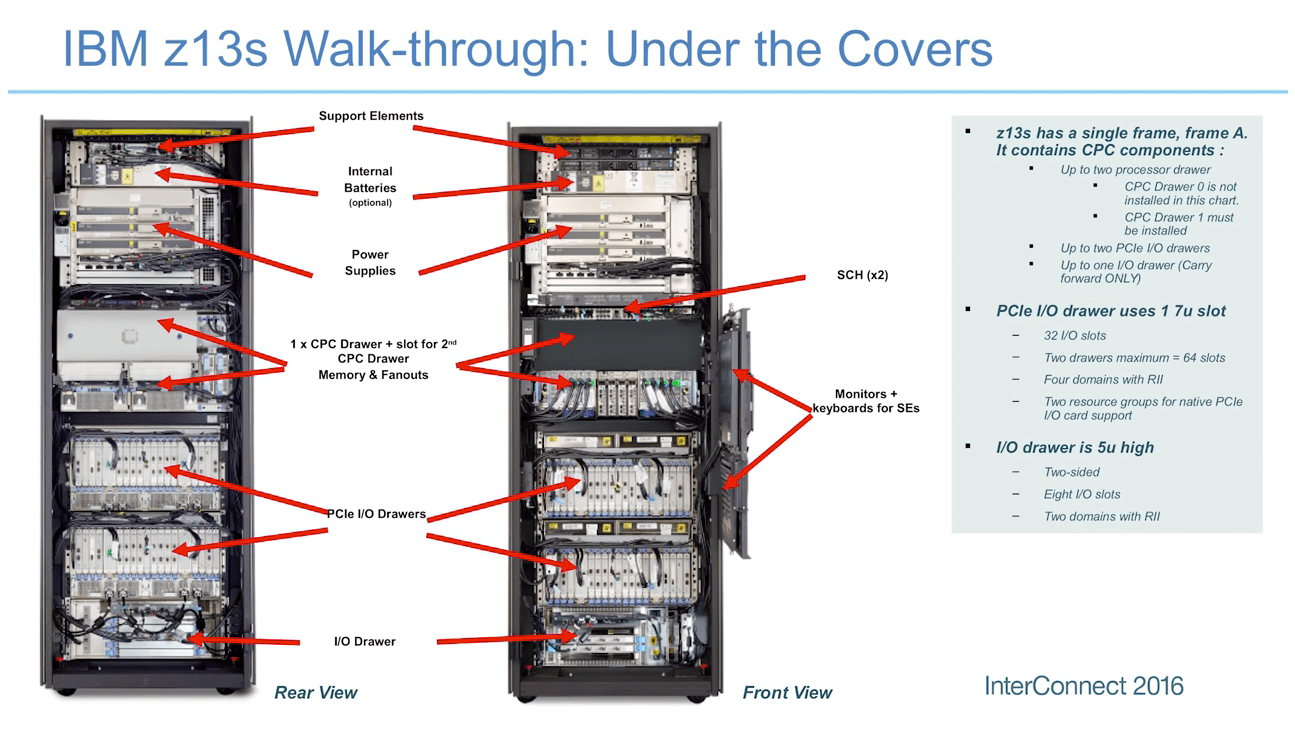

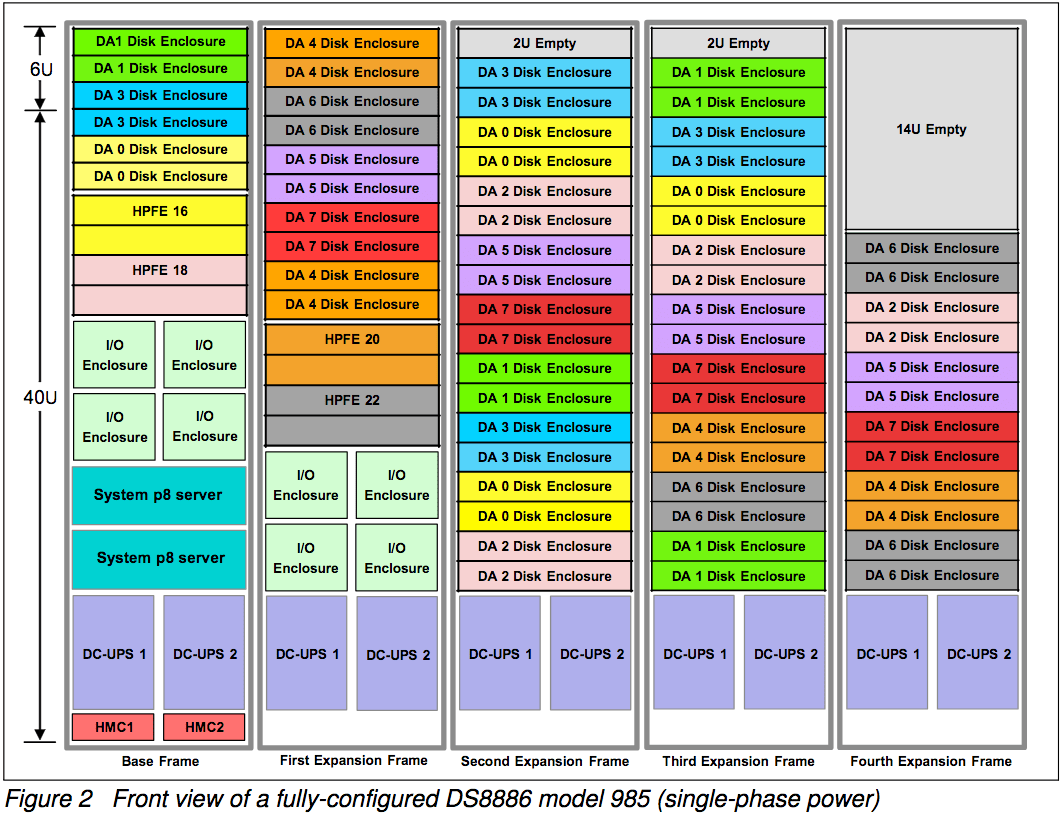

To emphasize the point about I/O, notice how much of this mainframe is dedicated to I/O drawers. Judging by how the cabinet is filled, at least roughly 45% of the components in this mainframe are for I/O processing.

The Hardware Utilization Benefits of Mainframes

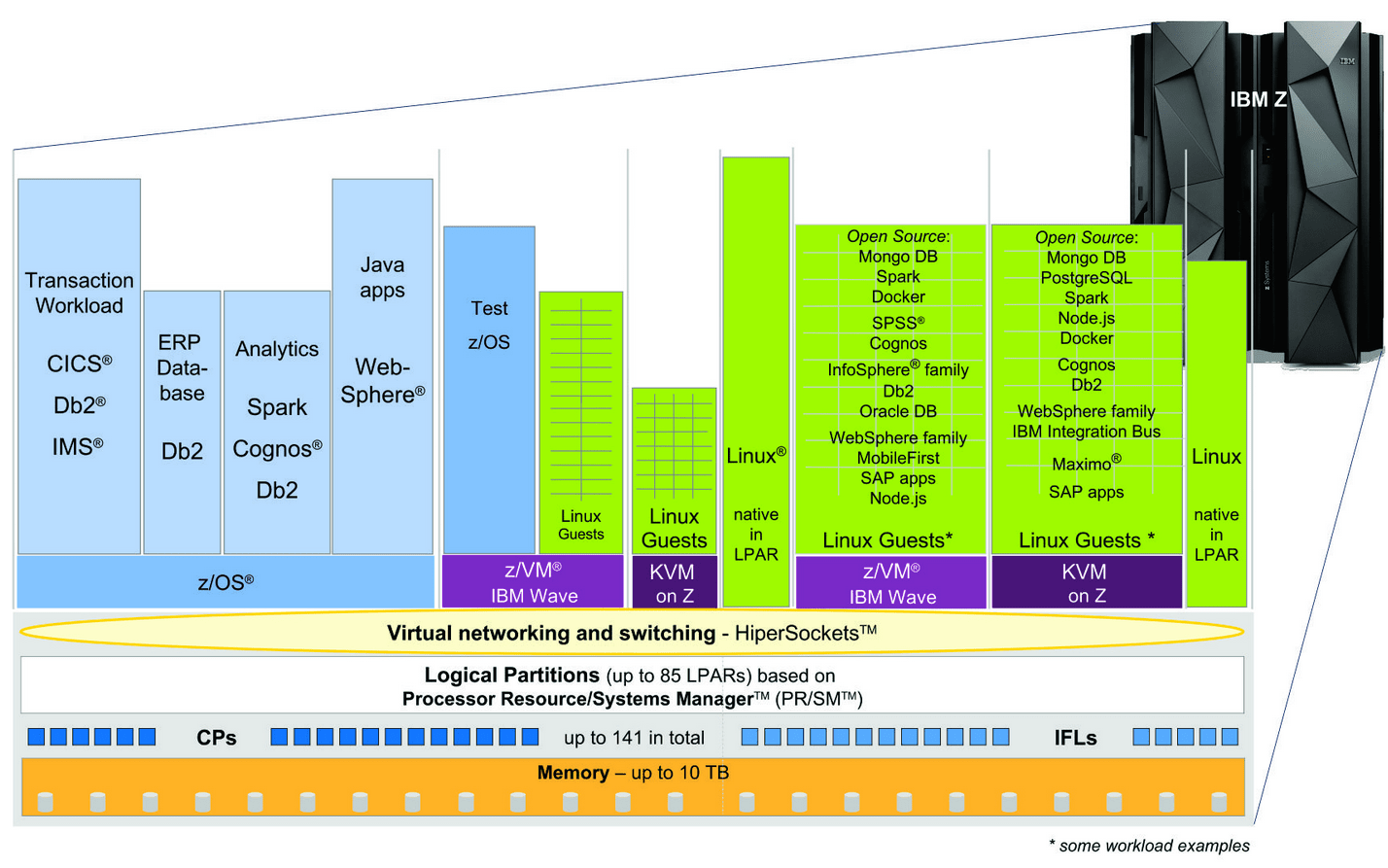

Because mainframes have so much forethought built into them, they do a much better job of hardware utilization than servers. This is why mainframes can run at 100% utilization for years and maintain peak performance up to 90% load, while servers lose efficiency at loads greater than 20%. Mainframes are designed explicitly for particular workloads, but those workloads are not marginal or unusual. On the contrary, they are very common. Transaction processing, for example, is a very common workload, and no better hardware has been designed for it than the mainframe.

Rather than requiring extensive virtualization, mainframes rely upon logical partitions (LPARs), each of which runs its own operating system. The system processors and their memory are flexibly assigned to the LPARs, and resource allocation algorithms switch the processors and the memory between the LPARs depending on the load. This means that while one LPAR is running virtualization for various specialized, non-mainframe workloads, the others can skip virtualization altogether when running mainframe workloads. Within the LPAR that runs virtualization, the VMs are very lightweight, so IBM Z systems can run many of them. The IBM z13 can have up to 85 LPARs.

This diagram shows how LPARs are managed on a z Series mainframe. Notice the combination of LPARs with virtual machines (VMs).
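To make the idea of load-dependent allocation between LPARs more concrete, here is a minimal sketch of a weighted-share scheme: each partition has a weight and a current demand, processors are offered in proportion to weight, and capacity a partition does not need is redistributed to the others. This is our own simplified illustration, not IBM’s actual algorithm; the function name, the partition names, and the weights are all hypothetical, and weights are assumed to be positive.

```python
def share_processors(total_processors, lpars):
    """Split processor capacity across partitions by weight, capped by demand.

    lpars maps a partition name to a (weight, demand) pair, where demand is
    the number of processors the partition could use right now.
    """
    allocation = {name: 0.0 for name in lpars}
    remaining = float(total_processors)
    active = {name for name, (_, demand) in lpars.items() if demand > 0}

    while remaining > 1e-9 and active:
        total_weight = sum(lpars[name][0] for name in active)
        satisfied = set()
        distributed = 0.0
        for name in active:
            weight, demand = lpars[name]
            offer = remaining * weight / total_weight    # weighted share of what is left
            take = min(offer, demand - allocation[name])  # never exceed current demand
            allocation[name] += take
            distributed += take
            if allocation[name] >= demand - 1e-9:
                satisfied.add(name)
        remaining -= distributed
        active -= satisfied
        if not satisfied:  # everyone took their full offer, so nothing is left over
            break
    return allocation


example = share_processors(
    10,
    {"prod": (70, 8), "test": (20, 1), "linux": (10, 4)},
)
print(example)
```

In this example the lightly loaded `test` partition needs only one processor, so the capacity it leaves unused flows to `prod` and `linux` in proportion to their weights. If the load on `test` rose, rerunning the function with the new demand would shift capacity back.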

Backward Compatible

This is one of the lesser-emphasized areas of mainframes, but backward compatibility means that old programs don’t need to be refactored. As we have described, one of the fantastic features of a mainframe is its ability to run programs for many years with so little maintenance. When a new mainframe is purchased, backward compatibility is built in.

Different CPU/Processing Unit Architectures of Mainframes Versus Servers

Mainframes use different CPUs than servers. IBM makes its own mainframe processors, and these processors are customized for mainframe workloads.

This Dell server contains a single 14-core processor. Mainframes include not a single multi-core processor but many processors designed for specialized tasks. Servers try to meet a variety of needs with software rather than hardware. There is constant price pressure on servers, which results in low prices and in functionality that could be handled by hardware being pushed into software. With such a simple layout and overall design, servers can be made rapidly in large numbers. The result is a “unicrop” of servers, and this is where software comes in to overcome the limitations of the hardware design in addressing different workloads.

The IBM Z Series contains the following processors. The number of processors differs per configuration, so we added an (s) to each type.

- The CPU(s): Used for the OS and the applications.

- System Assistance Processor(s) (SAP): Supports the I/O subsystems.

- Integrated Facility for Linux(s) (IFL): Used only for Linux workloads; it does not run z/OS.

- System z Application Assist Processor(s) (zAAP): For executing Java code.

- System z9 Integrated Information Processor(s) (zIIP): For processing database workloads. It helps reduce software costs.

- Integrated Coupling Facility(s) (ICF): For running licensed internal code; it is not visible to normal operating systems or applications.

- Spare(s): A spare processor that can take over for any of the other processor types listed above.

While it is unnecessary to understand each of the processors above in detail, the primary takeaway is that mainframes do not work by replicating the same CPU many times over. They offer a series of specialized processors. This is one reason they handle many workloads far more capably than any other hardware design.
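The division of labor between processor types can be pictured as a simple lookup from the kind of work to the kind of processor. The sketch below is purely illustrative: the workload labels and the function name are hypothetical, and a real mainframe decides where work runs in its firmware and operating system, not through a table like this one.

```python
# Hypothetical workload labels mapped to the processor types named in this section.
SPECIALTY_PROCESSORS = {
    "io": "SAP",         # I/O subsystem support
    "linux": "IFL",      # Linux workloads
    "java": "zAAP",      # Java execution
    "database": "zIIP",  # database workloads
    "coupling": "ICF",   # coupling facility code
}


def pick_processor(workload_kind):
    """Return the specialty processor for a workload, or the general CPU otherwise."""
    return SPECIALTY_PROCESSORS.get(workload_kind, "CPU")


for kind in ("java", "database", "batch"):
    print(kind, "->", pick_processor(kind))
```

The contrast with a commodity server is that every entry in such a table would map to the same general-purpose CPU.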

Mainframes as Specialized In Their Design and Configured to Order

The hardware design of mainframes is thought out to an exceptional level of detail. A process has been refined for matching workloads to components and for determining how those components interact within the overall mainframe design. Because so few mainframes are purchased (rather than being bought in bulk, as servers are), the configuration process is far more involved than with servers.

Mainframes like the IBM zSeries are entirely configured to order. There is no “single price.” Depending on the configuration, a zSeries can cost from hundreds of thousands to multiple millions. However, because of this configuration, the customer gets a mainframe tuned for their requirements.

The mainframe manufacturers have templates that meet different workloads. Therefore, the mainframe manufacturer does the design work to match the workload. This differs from on-premises servers, where the responsibility is far more on the IT department.

The larger the mainframe configuration, the more components become involved. The DS8886 storage system’s internal setup would connect to a z series mainframe for extra storage.

Servers require an enormous scale before they can compete with mainframes in the total cost of ownership.

The IBM LinuxOne is, as its name implies, a mainframe that runs Linux, which is the primary operating system that runs the cloud. Those that don’t run Linux directly usually run IBM’s virtualization software, z/VM, directly on the hardware. A single mainframe can support thousands of virtual machines. However, mainframes do not have to be virtualized to obtain high utilization, a fundamental difference from servers.

Mainframe Workloads

Mainframes are most often associated with relational databases, as both relational databases and mainframes are designed for transaction processing. Still, mainframes can run all the different types of databases presently available, although not at the same efficiency level. Moreover, many people who work in IT have heard the term transaction processing, but because it is discussed less than in the past and has largely become a background item, it is easy to forget precisely what transaction processing is.

The following quotation helps illuminate the topic.

“Making reservations for a trip requires many related bookings and ticket purchases from airlines, hotels, rental car companies, and so on. From the perspective of the customer, the whole trip package is one purchase. From the perspective of the multiple systems involved, many transactions are executed: One per airline reservation (at least), one for each hotel reservation, one for each car rental, one for each ticket to be printed, on for setting up the bill, etc. Along the way, each inquiry that may not have resulted in a reservation is a transaction, too.”
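The point that one trip is really many transactions can be shown with a minimal sketch using Python’s built-in `sqlite3` module. Each booking is written inside its own transaction, so a failed booking is rolled back without affecting the others. The table layout, the function names, and the sample bookings are all hypothetical and chosen only for illustration.

```python
import sqlite3


def make_db():
    """Create an in-memory database with a simple, hypothetical bookings table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE bookings (kind TEXT, detail TEXT, price REAL)")
    return conn


def book_trip(conn, bookings):
    """Record each booking as its own transaction; return how many committed."""
    committed = 0
    for kind, detail, price in bookings:
        try:
            with conn:  # commits on success, rolls back if an exception is raised
                conn.execute(
                    "INSERT INTO bookings (kind, detail, price) VALUES (?, ?, ?)",
                    (kind, detail, price),
                )
                if price < 0:
                    raise ValueError("price must not be negative")
            committed += 1
        except ValueError:
            pass  # this booking was rolled back; the others are unaffected
    return committed


conn = make_db()
trip = [
    ("flight", "outbound", 420.0),
    ("hotel", "two nights", -1.0),  # invalid on purpose, to trigger a rollback
    ("car", "compact", 90.0),
]
print(book_trip(conn, trip), "of", len(trip), "bookings committed")
```

From the customer’s perspective this is one purchase; from the system’s perspective it is three separate transactions, one of which failed and left no trace in the table.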

When IT media covers Big Data, IoT data, and unstructured data from social networks, websites, mobile, and other sources, it often leaves out that this data was accumulated from transactions being processed. These are the new “hot” technologies, right? Why is the term transaction processing not used in most articles covering these hot topics? If transaction processing is tedious, why is it foundational to some of the most discussed IT areas?

ERP systems are frequently discussed in terms of functionality, without emphasizing that most of what they do is transaction processing. ERP systems perform other types of processing as well. For example, they run mathematical procedures like MRP and DRP. Some analytics are performed within the ERP system, although in most cases, analytics are performed outside of ERP systems. However, the bulk of what ERP systems do is transaction processing, not running procedures or processing analytical queries.

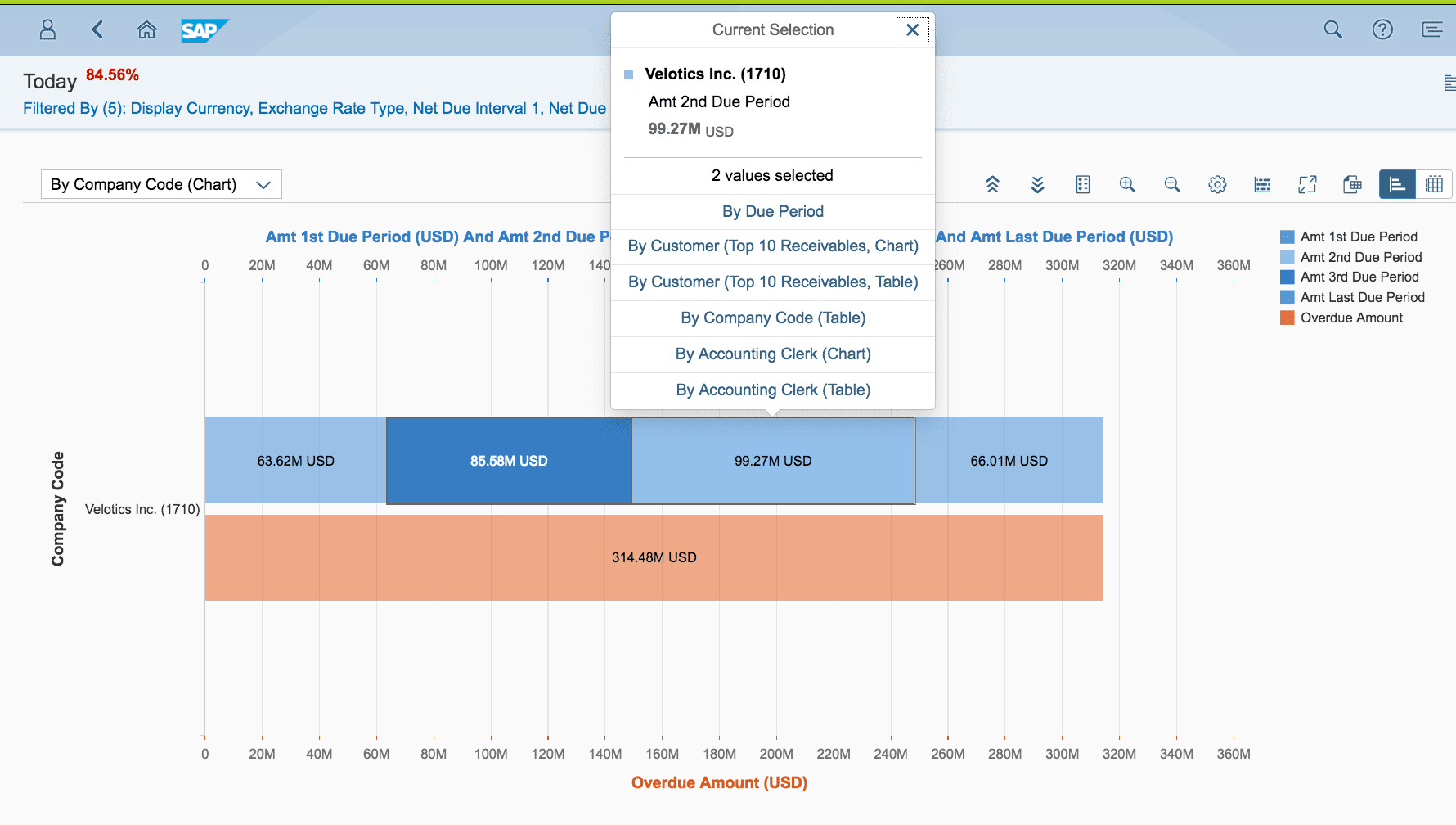

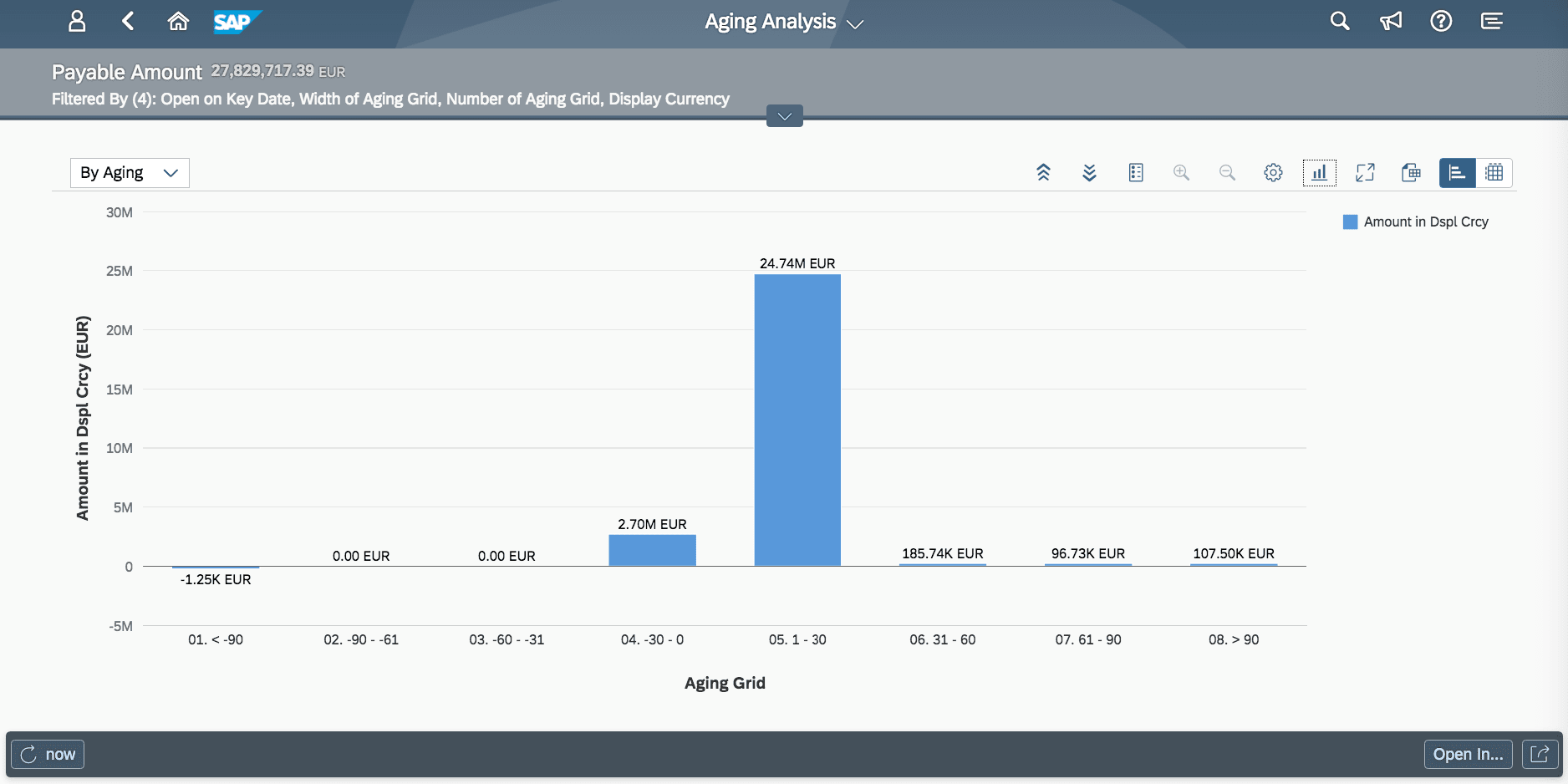

SAP’s new S/4HANA ERP system is the first SAP ERP to be highly focused on analytics, unlike SAP ECC, which had virtually no analytics.

However, nothing in S/4HANA’s analytics screens requires any particular analytic-specific processing. The reports are canned and precompiled before they are viewed. This does not stop SAP from claiming that the in-memory HANA database is critical for analytics.

Gartner declares some areas of technology as “hot” and others as “not,” and it does not have very much interest in mainframes or transaction processing. However, systems work as an integrated whole, so this US Magazine/Instagram approach to technical analysis is not particularly helpful to Gartner customers. Most of them have no idea that Gartner does not follow rules of research and is riddled with multidimensional conflicts of interest, as we cover in the article The Problem with How Money Influences the Gartner Magic Quadrant. We have previously questioned whether Gartner is running a technology advisory firm or a fashion magazine, as we covered in the article How to Understand Gartner’s Similarity to the Devil Wears Prada.

While there are more and better analytics solutions than in the past, the analytics is ultimately based upon aggregated transactions, which would be very disappointing for Gartner to learn. And while transaction processing seems dull, it is only because transaction processing has become so reliable that it has become boring. When transactions fail to get processed, “excitement” quickly ensues.

Mainframes Versus Server Farms

Server farms are only possible because the software ties them into a cohesive whole, allowing processing to be virtualized onto generally uniform hardware. Without virtualization, servers have a significant problem supporting their assigned workloads. However, while mainframes can virtualize, they rely less on virtualization and instead rely upon specialized purpose-built hardware and the specific configuration of the mainframe to meet the demands of the workload.

The similarities between the mainframe and the server farm are further illuminated in the following quotation from Nikola Zlatanov.

“The mainframe is still massive in the industry. Despite the predictions and rumors, it is really not going away. While some of the smaller customers have dropped off, all of the bigger customers are growing their workloads (emphasis added) on the mainframe.

There are two sides to why the mainframe market is still going strong. One is lock-in—an old business-critical application running on a mainframe, with many years of business logic built into it, costs a fortune to migrate elsewhere. The other is the mainframe’s relevance in a modern hybrid cloud architecture, running VMs on-premise and storing petabytes of data.

The mainframe has evolved a lot over the years. You can run Linux on the mainframe. A lot of what these big providers are doing is really trying to recreate the mainframe by hashing together a lot of servers. A private cloud made from many commodity boxes—one that can provide, say, 5,000 virtual machines—has things in common with a mainframe.

Both contain huge pools of CPU and memory for virtual machines, and massive amounts of storage for objects and images.

Both run an OS that virtualizes workloads to maximize efficiency.

Both can deal with high volumes of transactions. Many of the scaling problems being encountered in the cloud were solved long ago by mainframe technicians.”