Is AI a Term That Only Applies to Science Fiction Instead of Software?

Executive Summary

- Industry and media are so busy promoting AI that there is virtually no effort to question whether the concept is accurate or even valid.

Introduction

Under the constant barrage of AI hype, it has become common to skip over understanding what AI actually is and whether it can deliver the benefits routinely promised for it.

We came across an article that did something rare: it called into question the entire use of the term AI as applied to software.

Our References for This Article

If you want to see our references for this article and other related Brightwork articles, see this link.

The Limited Scope of What is Covered by AI

Machine learning actually does work by defining a kind of spectrum, but only for an extremely limited sort of trajectory (emphasis added) – only for tasks that have labeled data (emphasis added), such as identifying objects in images. With labeled data, you can compare and rank various attempts to solve the problem. The computer uses the data to measure how well it does. Like, one neural network might correctly identify 90% of the trucks in the images and then a variation after some improvements might get 95%.

Getting better and better at a specific task like that obviously doesn’t lead to general common sense reasoning capabilities (emphasis added). We’re not on that trajectory, so the fears should be allayed. The machine isn’t going to get to a human-like level where it then figures out how to propel itself into superintelligence. No, it’s just gonna keep getting better at identifying objects, that’s all. – The Dr. Data Show

We have highlighted two areas in these quotations:

- The use of specific labeled data.

- Improvement in specific (narrow) tasks.

Every case that is called AI is incredibly narrow. We generally don’t consider such narrow capabilities to be “intelligent” unless they are performed by software or, more commonly, a computer.

Labeled data is input paired with context (the label), and large amounts of it allow the “AI” software to learn a pattern from historical data and then apply that pattern recognition in real time.
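To make the role of labeled data concrete, here is a minimal Python sketch of the kind of scoring the Dr. Data Show quotation describes. The labels, the two model outputs, and the helper name `recall` are hypothetical; the point is only that once a human has labeled which images contain trucks, each variant can be scored and ranked mechanically (here, 18 of 20 trucks found versus 19 of 20).

```python
"""Ranking two model variants against labeled data (illustrative sketch).

The labels and predictions are hypothetical stand-ins for "truck" / "other"
image classifications; no real model or dataset is involved.
"""
from typing import Sequence


def recall(labels: Sequence[str], predictions: Sequence[str], positive: str = "truck") -> float:
    """Share of images labeled `positive` that the model also predicted as `positive`."""
    if len(labels) != len(predictions):
        raise ValueError("labels and predictions must be the same length")
    hits = sum(1 for y, p in zip(labels, predictions) if y == positive and p == positive)
    total = sum(1 for y in labels if y == positive)
    return hits / total


# Twenty-five hypothetical labeled images: 20 contain trucks, 5 do not.
LABELS = ["truck"] * 20 + ["other"] * 5

# Hypothetical outputs of an original model and an "improved" variant.
MODEL_A = ["truck"] * 18 + ["other"] * 2 + ["other"] * 5  # misses 2 trucks
MODEL_B = ["truck"] * 19 + ["other"] * 1 + ["other"] * 5  # misses 1 truck

if __name__ == "__main__":
    scores = {"A": recall(LABELS, MODEL_A), "B": recall(LABELS, MODEL_B)}
    # Rank the variants from best to worst on this one narrow task.
    for name, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
        print(f"model {name}: {score:.0%} of labeled trucks identified")
```

Nothing in this scoring moves the software toward general reasoning; it only tells us which variant is better at this one narrow, labeled task.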

2001: A Space Odyssey was made in 1968 and projected computing capabilities out to 2001, which is now 19 years ago. The AI demonstrated by HAL is referred to as “strong AI,” generalized intelligence, or artificial general intelligence. By 1981, researchers had moved away from developing generalized AI, as it proved too challenging and they were unable to show progress.

More than fifty years later, there is still nothing even close to this. In a related area, we can now skip typing questions into Google and instead ask them of Alexa or Google Home. But once again, these are narrow tasks. The software behind Alexa and Google Home is simply a combination of natural language processing and a search and retrieval mechanism. A big part of AI marketing deliberately uses advances in what is called weak AI to forecast the arrival of strong AI.

To reiterate, AI is simply the automation of narrow pattern recognition or of following a series of steps. Further improvement in a narrow task leads to... better narrow task accomplishment, not generalized intelligence, as the following quotation explains.

No advancements in machine learning to date have provided any hint or inkling of what kind of secret sauce could get computers to gain “general common sense reasoning.” Dreaming that such abilities could emerge is just wishful thinking and rogue imagination, no different now, after the last several decades of innovations, than it was back in 1950, when Alan Turing, the father of computer science, first tried to define how the word “intelligence” might apply to computers. – The Dr. Data Show

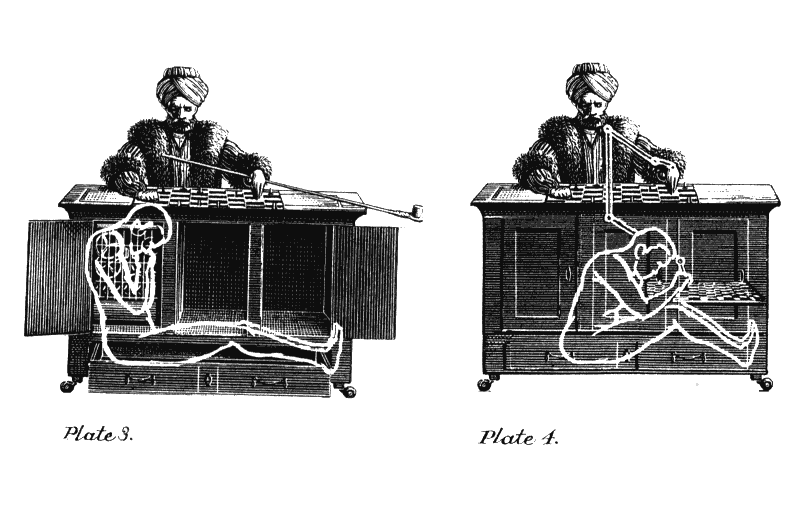

Eric Siegel of The Dr. Data Show proposes that AI is essentially a re-instantiation of the Mechanical Turk, a chess-playing machine that concealed a human chess player inside a cabinet. The hidden human followed the game on a duplicate chessboard and controlled the movements of a mechanical figure in full view, making the audience believe that a robot was moving the chess pieces.

The Generalized Usage of the Term AI

AI discussions are based far more on what AI will do in the future than on what it has accomplished, as we cover in the article When Will People Notice the Gap Between the Promise and Reality of AI? From its beginnings in the late 1950s, AI has a long history of making claims it has not delivered on. Both human brains and computers running software perform processing, but the term AI blurs the difference between what these very different things are designed to do.

For this reason, Eric Siegel proposes that the use of the term AI be terminated.

It’s time for the term “AI” to be “terminated.” Mean what you say and say what you mean. If you’re talking about machine learning, call it machine learning. The buzzword “AI” is doing more harm than good. It may sometimes help with publicity, but to at least the same degree, it misleads. AI isn’t a thing. It’s vaporware. Don’t sell it and don’t buy it.

There is a complete lack of specificity in the term AI. It lets companies seeking funding, or consulting firms wishing to sell projects, claim something leading-edge without having to specify the method they are using. Nearly all claims around AI fall into this category. A Forbes article on how movie studios might use AI to improve their box office returns, which we examine below, is a specific example.

Dr. R. M. Needham, writing in the 1973 Lighthill report on AI in the UK, also argued that the term AI was a poor one.

Artificial Intelligence is a rather pernicious label to attach to a very mixed bunch of activities, and one could argue that the sooner we forget it the better.

It would be disastrous to conclude that AI was a Bad Thing and should not be supported, and it would be disastrous to conclude that it was a Good Thing and should have privileged access to the money tap. The former would tend to penalise well-based efforts to make computers do complicated things which had not been programmed before, and the latter would be a great waste of resources. AI does not refer to anything definite enough to have a coherent policy about in this way.

AI for Successful Movie Prediction?

(Warner Bros.) ... recently struck a deal with Cinelytic, which has built an AI-infused project management system. It is focused on the green-lighting process, such as by helping to predict the potential profits on new films. Now this is not to imply that Cinelytic has essentially cloned the genius of Steven Spielberg or James Cameron.

Rather, the technology helps with the myriad of smaller tasks, such as analyzing the impact on territories, the bidding process for talent and so on. This should free up time for filmmakers to work on more important tasks. There are other startups gunning for this market opportunity, like Pex. The company has an AI-driven platform that analyzes movie trailers to determine the potential revenue generation. This is done by crunching data like views, shares, likes and other forms of online engagement. – Forbes

Let us look at a few of the factors listed that AI could use:

- Impact on Territories

- Bidding Process on Talent

- Views

- Shares

- Likes

Does any of this really require a substantial “AI” project or investment?

One can run a regression analysis on inputs like views, shares, and likes (combined with other data, such as how similar previous films performed in each territory) and then apply the fitted model to upcoming movies to forecast the impact on territories. Of course, linear regression is a type of machine learning, which is in turn classified as a type of AI. But as someone who has built several models like this and has run ML algorithms, I don’t see which ML algorithm would be more effective here than a regression and a model built from it. None of what I am describing is new, and none of it requires anything more than an everyday laptop or any specialized software.
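As an illustration of how little is required, the following is a minimal ordinary least squares sketch using only the Python standard library. The films, engagement figures, and revenue numbers are invented, and the function names are hypothetical; a real model would also need the territory-level and comparable-film data described above.

```python
"""Minimal ordinary least squares (OLS) sketch for box-office forecasting.

Assumptions (hypothetical, for illustration only): each film is described by
online-engagement counts (views, shares, likes) and a revenue figure. The
numbers below are invented and do not come from any real studio or vendor.
"""
from typing import List, Sequence


def solve_linear_system(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b with Gaussian elimination and partial pivoting."""
    n = len(b)
    # Augmented matrix so row operations apply to a and b together.
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        # Pick the row with the largest pivot to limit rounding error.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("singular system: features are collinear")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back-substitution from the last row upward.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        total = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - total) / m[r][r]
    return x


def fit_ols(features: Sequence[Sequence[float]], target: Sequence[float]) -> List[float]:
    """Return [intercept, coef_1, ..., coef_k] minimizing squared error."""
    # Prepend a column of ones so the first coefficient is the intercept.
    x = [[1.0, *map(float, row)] for row in features]
    k = len(x[0])
    # Normal equations: (X^T X) beta = X^T y.
    xtx = [[sum(row[i] * row[j] for row in x) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(x, target)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def predict(coefs: Sequence[float], row: Sequence[float]) -> float:
    """Apply a fitted model to a new film's engagement figures."""
    return coefs[0] + sum(c * v for c, v in zip(coefs[1:], row))


# Hypothetical history: (views, shares, likes) per film, in millions.
HISTORY = [
    (10, 2, 30),
    (20, 3, 50),
    (15, 5, 40),
    (30, 4, 80),
    (25, 6, 60),
    (12, 1, 20),
]
# Hypothetical revenue per film, in millions of dollars.
REVENUE = [34.0, 57.0, 55.5, 82.0, 78.5, 31.0]

if __name__ == "__main__":
    coefs = fit_ols(HISTORY, REVENUE)
    print("intercept and coefficients:", [round(c, 3) for c in coefs])
    # Forecast a hypothetical upcoming film from its trailer engagement.
    print("forecast:", round(predict(coefs, (18, 3, 45)), 2))
```

The invented revenue figures were chosen to follow an exact linear rule (5 + 1.5 × views + 4 × shares + 0.2 × likes), so the fit recovers those coefficients and forecasts 53 for the hypothetical upcoming film. With real data the fit would be noisy, and the model would need to be checked against held-out films before anyone relied on it.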

However, I should clarify that companies tend to have many conflicting interests, and it isn’t easy to get the overall company to accept a purely quantitative model, even a highly accurate one. For example, product forecasts are routinely adjusted with manual inputs from sales and marketing to fit organizational incentives. (We cover this topic in the article A Frank Analysis of Deliberate Sales Forecast Bias.) Why don’t companies stop this from happening? Because the sales function is too powerful politically.

Second, most companies are not able to measure their forecast error effectively. For instance, only a minority of companies weight their forecast error, which means that a product with monthly sales of 100 receives the same weight as a product with monthly sales of 10,000. (We cover this in the article Knowing the Improvement from AI Without Knowing the Forecast Error?) Do AI promoters divulge this type of information? You probably already know the answer, but let us review the quotes from the same Forbes article.
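The weighting point about forecast error can be made concrete with a small Python sketch comparing an unweighted mean absolute percentage error (MAPE) with one common volume-weighted variant (WMAPE: total absolute error divided by total actual sales). The two products and their forecasts are hypothetical, and other weighting schemes exist.

```python
"""Unweighted vs. volume-weighted forecast error (illustrative sketch).

The two products and their figures are hypothetical. MAPE averages each
item's percentage error equally; WMAPE (sum of absolute errors divided by
sum of actuals) lets high-volume items count in proportion to their sales.
"""
from typing import Sequence


def mape(actuals: Sequence[float], forecasts: Sequence[float]) -> float:
    """Mean absolute percentage error: every product gets equal weight."""
    errors = [abs(a - f) / a for a, f in zip(actuals, forecasts)]
    return sum(errors) / len(errors)


def wmape(actuals: Sequence[float], forecasts: Sequence[float]) -> float:
    """Volume-weighted MAPE: errors are weighted by each product's actual sales."""
    total_abs_error = sum(abs(a - f) for a, f in zip(actuals, forecasts))
    return total_abs_error / sum(actuals)


# Hypothetical monthly sales: a slow mover (100) and a fast mover (10,000).
ACTUALS = [100.0, 10_000.0]
FORECASTS = [150.0, 9_000.0]  # 50% error on the slow mover, 10% on the fast mover

if __name__ == "__main__":
    print(f"MAPE:  {mape(ACTUALS, FORECASTS):.1%}")
    print(f"WMAPE: {wmape(ACTUALS, FORECASTS):.1%}")
```

Here the unweighted MAPE is 30%, dominated by the 50% miss on the product selling 100 a month, while the volume-weighted figure is about 10.4%. A company that reports only the unweighted number cannot tell which picture reflects its business, which makes any claimed “AI improvement” hard to verify.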

The Forbes article then goes on to quote people who sell AI services.

As a result, a studio can change a trailer to make it more impactful. Bret Greenstein, who is the Global Vice President and Head of AI at Cognizant: “AI can absolutely help Hollywood reduce risk, in the same way it is reducing risk in other industries.”

Ganesh Sankaralingam, the Director of Data Science and Machine Learning at Latent View Analytics: “Today there’s a wealth of actionable data available that movie studios and streaming entertainment companies can use to manage risk. Using AI and advanced analytics can not only help mitigate against box office flops, but also identify potential hits.”

Curiously, the Forbes article does not mention that each of these individuals has a financial incentive to sell these types of services. Cognizant is a firm that displaces US workers by defrauding the H-1B program with lower-paid Indian workers. I have never read a single thing written by Cognizant that was anything but mindless marketing drivel. Why anyone would listen to a company like Cognizant is a mystery to us. AI falsity from consulting companies has reached such a fever pitch that when I showed a Capgemini AI promotional video at a recent forecasting conference, the audience burst out laughing.

The unreliable Cognizant source goes on to say:

“AI is a tool,” said Greenstein. “Judgement of people is essential. Judgement informed by AI will make big money in the box office.”

Notice that none of them offered even an inkling of what method they might use to obtain these benefits. AI is not a method of accomplishing anything; it is merely the broadest possible category for applying some mathematics to a process. The term is ideal for people who want to overpromise, because it lets them avoid getting into specifics.

Following Pied Pipers on AI

Eric Siegel also raises a point that can’t be disputed: most of the people publicizing AI are not themselves particularly knowledgeable about AI.

AI is nothing but a brand. A powerful brand, but an empty promise. The concept of “intelligence” is entirely subjective and intrinsically human. Those who espouse the limitless wonders of AI and warn of its dangers – including the likes of Bill Gates and Elon Musk – all make the same false presumption: that intelligence is a one-dimensional spectrum and that technological advancements propel us along that spectrum, down a path that leads toward human-level capabilities.(emphasis added) Nuh uh. The advancements only happen with labeled data. We are advancing quickly, but in a different direction and only across a very particular, restricted microcosm of capabilities.

The term artificial intelligence has no place in science or engineering. “AI” is valid only for philosophy and science fiction – and, by the way, I totally love the exploration of AI in those areas. – Eric Siegel

Elon Musk, much like Bill Gates, has a long-established pattern of talking about topics in which he has no training or insight, and the media treats what he says on virtually any topic as likely to be valid. Meanwhile, university professors who study the issues Musk discusses, who have real backgrounds in these fields and are acknowledged experts, go uninterviewed. This shows that the media does not know who the reliable sources of information are, on AI and on many other topics.

Conclusion

Eric Siegel is correct, and his observation in this area is quite insightful. There is no situation in which using the term AI helps us understand what is being discussed. AI is a term either for science fiction or for offering a nonspecific explanation in place of the actual method that is to be used to obtain the intended benefit.