Moderated Versus Unmoderated Remote UX Research Testing

Executive Summary

- Moderated UX testing has specific advantages and disadvantages versus unmoderated.

- We get into the major factors.

Introduction

Moderated UX testing is when a researcher takes part in the session alongside the participant. Unmoderated testing is when a testing platform runs and records the session without a researcher present, which makes it a form of testing automation. In this article, we will cover each.

Our References for This Article

See this link for our references for this article and other related Brightwork articles.

The Various UX Testing Platforms

There are several remote UX testing platforms or tools. We have tested a number of them and used several in our own UX testing, but we can’t recommend one over another, as each tends to do different things a little better than the other options in the market. For example, some UX testing software is designed for unmoderated UX testing (which is generally much more expensive). Unmoderated UX testing is not something we do, but many companies do. If you do both types, then in most cases you will need two testing platforms.

In a future article, we may delve into a comparison of these tools.

The Most Common Preference for Research UX Companies

The following quote describes the preference for most research UX companies in terms of moderated versus unmoderated UX research.

Researchers often prefer unmoderated testing because they feel it will save them time — no need to run the sessions. But remember, particularly in qualitative testing, you should still be watching those recordings to analyze them. You might find that whatever time you’ve saved in conducting the session will be shifted into the analysis phase of the project. – NNGroup

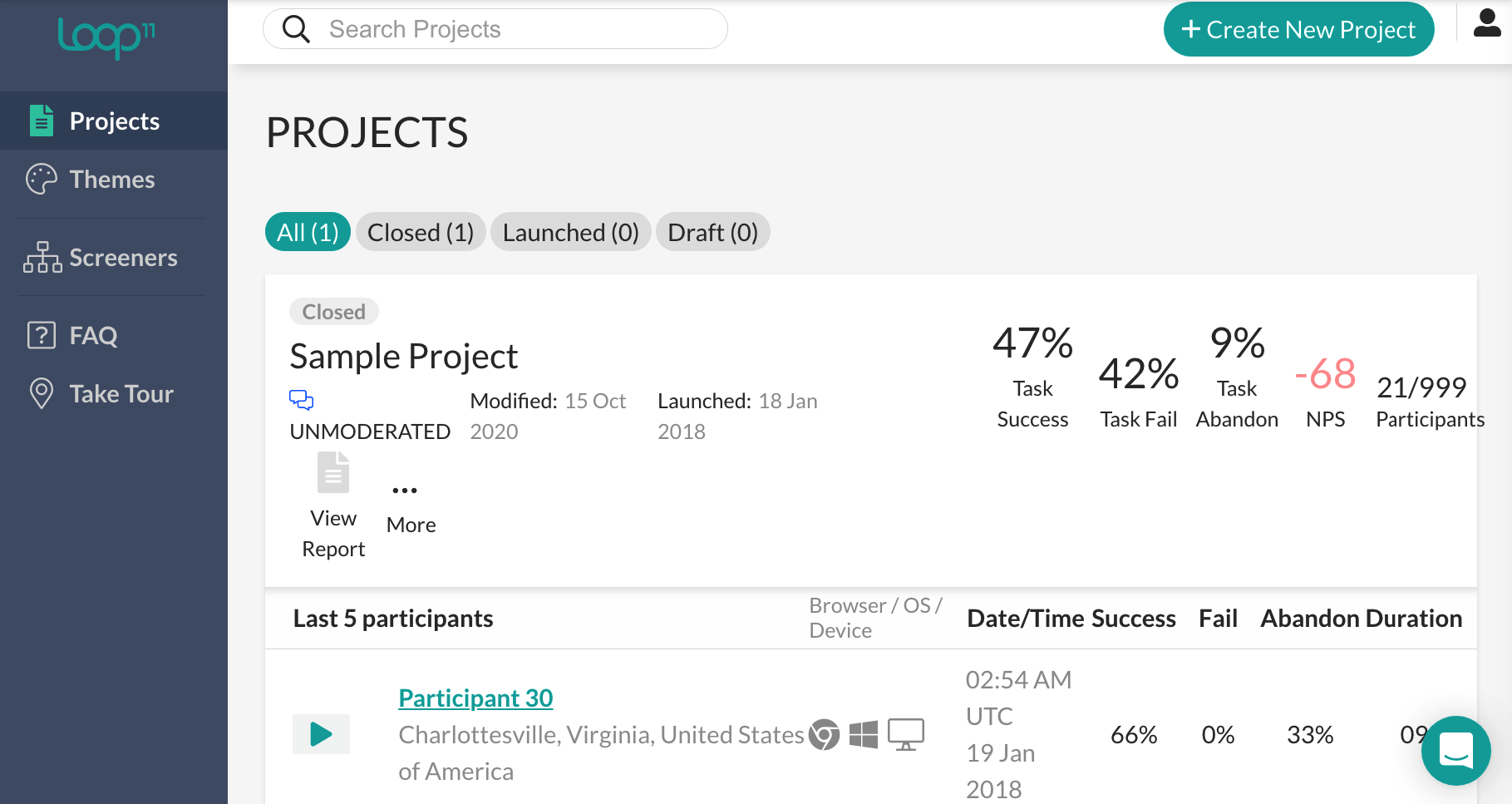

This is the calculation performed by a testing tool. Notice the high number of participants (999). Obviously, to get such high numbers of participants, one must run automated or unmoderated testing.

The problem with this approach is laid out in the previous quotation: the work of reviewing the sessions still has to be done. There is really no way around it. If the recordings are not reviewed, you end up with shallower and less realistic results. Although more quantitative information is built up from unmoderated UI testing, that information is far less accurate than when the session is moderated. The statistics can look so convincing that they give the client a false sense of accuracy. This is expounded on by Kathryn Whitenton of NNg.

Metrics do not reveal causes of behavior; low participant motivation or inaccurate self-reported data can cause misleading metrics.
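The time argument in the NNGroup quotation can be made concrete with some rough arithmetic. The sketch below is only an illustration: the `StudyPlan` fields, the session lengths, and the one-to-one review ratio are all hypothetical inputs we made up, not figures from NNg or from any testing tool.

```python
from dataclasses import dataclass


@dataclass
class StudyPlan:
    """Hypothetical inputs for estimating researcher time on one study."""
    participants: int
    session_minutes: float      # length of each session or recording
    moderated: bool             # True if a researcher runs each session live
    review_ratio: float = 1.0   # assumed minutes of analysis per minute recorded


def researcher_hours(plan: StudyPlan) -> float:
    """Total researcher hours: live moderation (if any) plus recording review."""
    recorded_minutes = plan.participants * plan.session_minutes
    live_minutes = recorded_minutes if plan.moderated else 0.0
    review_minutes = recorded_minutes * plan.review_ratio
    return (live_minutes + review_minutes) / 60.0


if __name__ == "__main__":
    # Both plans use invented numbers purely for comparison.
    moderated = StudyPlan(participants=5, session_minutes=60, moderated=True)
    unmoderated = StudyPlan(participants=999, session_minutes=15, moderated=False)
    print(f"Moderated, 5 users:     {researcher_hours(moderated):.2f} hours")
    print(f"Unmoderated, 999 users: {researcher_hours(unmoderated):.2f} hours")
```

Under these assumed inputs, properly reviewing 999 short recordings costs far more researcher time than moderating and reviewing five full sessions. The time saved by not moderating only materializes if the recordings are not actually watched, which is where the shallow results come from.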

How Many Test Subjects?

The last section got into the important topic of how many test subjects are necessary. The number of test subjects for a reliable conclusion naturally relates to whether a test should be moderated or unmoderated. If, for example, 300 test subjects were necessary, then this is too many to reasonably do in the moderated format.

However, the guidance from NNg is that the number of test subjects for reliable UX conclusions is surprisingly small.

This is explained in the following quotation.

Elaborate usability tests are a waste of resources. The best results come from testing no more than 5 users and running as many small tests as you can afford. Testing with 5 people lets you find almost as many usability problems as you’d find using many more test participants.

Doesn’t matter whether you test websites, intranets, PC applications, or mobile apps. With 5 users, you almost always get close to user testing’s maximum benefit-cost ratio.

The sample size a test needs depends on how much the people being sampled vary: the lower the variance in the population, the smaller the sample that can represent it. What NNg illustrates is that, for usability problems, this variance is normally low.
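As we understand it, the five-user guidance rests on a simple problem-discovery model, usually written as 1 - (1 - L)^n, where L is the chance that a single test user exposes any given usability problem and n is the number of users. The sketch below applies that formula. The function name is ours, and the default of 31% is the average rate we recall NNg reporting across its projects, so treat it as an assumption and substitute a rate from your own studies.

```python
def proportion_found(n_users: int, per_user_rate: float = 0.31) -> float:
    """Expected share of usability problems found by n_users test users.

    Applies 1 - (1 - L)^n, where L (per_user_rate) is the chance that one
    user exposes any given problem. The 0.31 default is an assumed value.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= per_user_rate <= 1.0:
        raise ValueError("per_user_rate must be between 0 and 1")
    return 1.0 - (1.0 - per_user_rate) ** n_users


if __name__ == "__main__":
    for n in (1, 3, 5, 10, 15):
        print(f"{n:>2} users: {proportion_found(n):.1%} of problems found")
```

With the assumed 31% rate, five users come out at roughly 84% of the problems, and tripling the panel to fifteen adds only the remaining fraction. The model treats every problem as equally easy to find, which real products do not guarantee, so the curve is a rule of thumb and not a prediction.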

The pushback on this number is explained in the following quotation.

The main argument for small tests is simply return on investment: testing costs increase with each additional study participant, yet the number of findings quickly reaches the point of diminishing returns.

Sadly, most companies insist on running bigger tests.

“A big website has hundreds of features.” This is an argument for running several different tests — each focusing on a smaller set of features — not for having more users in each test. You can’t ask any individual to test more than a handful of tasks before the poor user is tired out.

This fits with our research into many areas far afield of UX research: in the majority of cases, the generally followed practice does not match the research conclusion.

Furthermore, this illustrates how few entities that do UX research actually publish valuable information on UX training. It is amazing to us how often we refer to Jakob Nielsen’s NNg website, but they stand out that far from the pack. This might be a good time to also point out that we have no relationship with NNg.

Testing And Dialing In the Unmoderated Test Script

One major, and seemingly little-discussed, disadvantage of unmoderated UX testing is that the test script itself must be adjusted, as it is often not accurate the first time it is created. This means that explanations must be altered, and this burn-in process may take 15 to 20 test subjects before there is comfort with the usefulness of the test. This increases the incentive to push off the testing in order to perfect the unmoderated test, which is exactly what we recommend against doing.

And let us back up a moment before going too far down this road. Recall from the previous section the number of test subjects that are actually needed. According to NNg, it is no more than 5 per test.

Why would we go to the work of setting up an automated test if we can moderate 5 remote tests? This is stated on the NNg website.

The vast majority of your user research should be qualitative — that is, aimed at collecting insights to drive your design, not numbers to impress people in PowerPoint.

Right. How are we supposed to gain qualitative insights from automated tests?

This is the philosophical problem we have with unmoderated testing that uses high numbers. There is also a chicken-or-egg aspect to this discussion. Software vendors must push for automation to justify their applications, and that means being able to run many test subjects through a test. This is a case of the tail wagging the dog: the testing tool vendor sets the parameters of the test to serve its software sales, rather than the testing method driving what software is used.

Small Tests, Iterative Testing

Our approach is to receive feedback early in the process from a smaller number of test subjects (what Jakob Nielsen refers to as iterative testing) in a fully moderated way. It is difficult to see how, with the same resources, the same thing can be accomplished with an unmoderated testing approach.

Our Approach

We combine lower common denominator testing with moderated sessions. With high-quality moderated sessions, it is not necessary to have roughly 1000 test subjects, as in the example above.

Secondly, like Jakob Nielsen, we propose testing very early in the development process. One cannot run 1000 automated unmoderated tests for the multiple stages that development goes through.

Conclusion

The primary benefits of unmoderated UI testing are lower cost and speed. There can be circumstances where unmoderated UI testing can make sense, but our approach is to not use it.

We feel that, in the majority of cases, the data compiled by automated unmoderated testing tools is not sufficiently reliable and provides a false sense of insight. This is expressed by Kathryn Whitenton of NNg in the following quotation.

Unmoderated studies can quickly accumulate a LOT of data, so you’ll need an organized, analytical approach to turn this data into actionable insights about your design.

And this goes back to the central rule of thumb: the number of test subjects necessary for each test is normally so low that it undermines the argument for automated tools.