How Parallel Processing for the SNP Cost Optimizer Does not Work

Executive Summary

- The SNP optimizer parallelization functionality has never worked.

- We cover the OSS notes on the fake parallelization functionality.

- It is important never to commingle the concepts of parallelization with decomposition.

Introduction to Problem Decomposition

On a recent project, I was reading through documentation that stated that the SNP optimizer’s parallelization functionality did not work. This surprised me, as I have worked on some SNP Optimizer accounts where parallel processing was enabled – but the critical point is that I was never asked to troubleshoot the parallel processing setting to see if it worked. I have, however, tested the CTM parallel processing functionality along with another consultant and found that it worked quite well and exactly as expected.

Dealing with Broken Functionality in SNP

Both the fact that it does not work and the number of OSS notes on this topic floored me, because this is such elementary and necessary functionality. I cannot think of a single best-of-breed vendor whose parallel processing functionality does not work.

Secondly, SAP is going around selling something called the COPT 10 parameters, which are marketed in part as speeding up the optimizer. However, the most elementary way of improving the optimizer’s speed in SAP’s base functionality, which is to enable it to address all of the processors on the server instead of just one, does not even work!

OSS Notes on the Topic of Optimizer Parallelization

Quotations from OSS notes on this topic and the year they were logged are listed below:

“Note: 1359613 (2009) When running the SNP Optimizer or Deployment Optimizer and using the parallel processing, it seems that the parallel processing is not creating enough parallel processes or is slow in general.

Note: 1558875 (2012): You are running the SNP planning and are using a parallel profile. The planning run fails with MESSAGE_TYPE_X short dump when trying to write back the results to LC. The termination occurred in the ABAP program “/SAPAPO/SAPLDM_PEGAREA” – in “CONFLICT_PARALLEL_TRANSACTION”. The message raised is /SAPAPO/PEGAREA 003, “Conflict with a parallel transaction on creation of pegging area”.

Note: 1476001 (2010): When running SNP Optimizer and using the parallel processing profile, you notice that the bucket offset setup in the optimizer profile is ignored.

Note: 958520 (2006): Parallel processing with the Deployment optimizer fails with error message: ‘Optimizer: Error reading optimizer profile XXXXXXX’ where XXXXXX is the name of the deployment optimizer profile.”

Reacting to These Notes

It is amazing that SAP has kept this setting available on the optimizer profile. The parallelization functionality has either never worked correctly or stopped working correctly at some point around 2003; at least, the OSS notes go back to 2003. Secondly, since I am still running into these documented problems in 2013, SAP has not fixed the problem in a decade. I wonder if parallelization has been removed from SAP’s sales presentations on the SNP optimizer.

I can’t say for sure, but I feel that SAP salesmen have not been telling potential customers that parallel processing is broken.

The Importance of Not Commingling the Concepts of Parallelization with Decomposition

I recently also ran into an interesting decision, which was in response to the SNP optimizer’s problems with parallelization. A company created a very customized decomposition front end for the optimizer. The decomposition program was built around the resources – allowing each variant of the decomposition program to include specific resources as finitely scheduled.

Before I get to my viewpoint on this program’s logic, it’s necessary to explain the twin concepts of parallelization and decomposition.

Two Critical Features of Optimization

Two of the most essential features of the SNP optimizer, and of optimization generally, are parallelization and decomposition. They are related but different technical aspects of how optimization is managed. I define both below:

- Parallelization: This is how the problem is divided before being sent to the server’s processors. Parallelization has no “business” output implication. It is purely a characteristic of how the problem is sent to one or multiple processors or multiple servers, and it has everything to do with processing duration. Parallelization has become increasingly important because microprocessors have reached the limits of how much faster a single core can be made: the channels that the electrons traverse cannot be made much narrower, and narrowing them was the main driving force behind improvements in processor speeds for decades (Moore’s Law). Moore’s Law, so often quoted in business magazines as a universal rule, no longer applies to the future and has not applied to processor speeds for at least five years. This is why recent Intel microprocessors for your laptop or desktop have been “dual” core, that is, two processor cores on one chip. Similarly, most servers that run APO have four processors or quad-core processors, which sounds great, but the software must be designed to divide the problem appropriately. That is tricky, because the problem must be divided so that the interdependencies are accounted for.

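- Decomposition: This is how the overall problem is logically divided into subproblems that the optimizer works through. Unlike parallelization, it is a business-driven construct. Decomposition is covered in more detail in this article. Almost all supply and production planning methods have some decomposition. The only method I am aware of that does not (and this is with a caveat, which I will discuss momentarily) is inventory optimization and multi-echelon planning (MEIO).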
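The distinction between the two definitions above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the subproblems, the `solve` stand-in, and the worker count are all hypothetical, and Python threads merely stand in for the server's processors. It says nothing about how the SNP optimizer is implemented internally. The point it shows is that the same decomposed subproblems give the same answers whether they are dispatched one at a time or to several workers at once.

```python
from concurrent.futures import ThreadPoolExecutor

# Decomposition: the overall problem has already been split into
# subproblems (hypothetical data: demand quantities per product).
subproblems = {"A": [10, 20, 30], "B": [5, 15], "C": [40]}


def solve(demands):
    """Hypothetical stand-in for an optimizer call on one subproblem."""
    return sum(demands)


def run_sequential(problems):
    """Solve the subproblems one after another on a single worker."""
    return {name: solve(demands) for name, demands in problems.items()}


def run_parallel(problems, workers=4):
    """Dispatch the same subproblems to several workers at once."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = {name: pool.submit(solve, d) for name, d in problems.items()}
        return {name: future.result() for name, future in futures.items()}


sequential_result = run_sequential(subproblems)
parallel_result = run_parallel(subproblems)
```

Changing `subproblems` would be a change to the decomposition; changing `workers` would only be a change to the parallelization, which is why it has no effect on the results.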

How Inventory Optimization & Multi-echelon Planning Works

MEIO looks at the entire supply network at every iteration, decides to create a recommendation, and then moves on to the next iteration with the inclusion of the recommendation from the last iteration. Therefore MEIO’s decomposition methodology can be considered time-based (although it is an entirely different type of time decomposition than the time decomposition that I am just about to discuss). After millions of these “snapshots,” MEIO arrives at an end state that is the supply plan.

However, the SNP cost optimizer does use decomposition. The decomposition types are listed on the optimizer profile that is in the screenshot above. One can use time decomposition, which segments the planning horizon and processes the segments in sequence, with the nearer-term periods being processed first.

Other ways of performing decomposition are resource and product decomposition. Either can be used along with time decomposition, but the two may not be used together. Each decomposition method divides the problem according to that master data element. So with product decomposition, the problem looks like the following:
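Time decomposition as described here can be sketched as follows. This is a minimal illustration, not SAP's algorithm: the eight-week horizon, the window size of three, and the function name `time_decompose` are assumptions made for the example.

```python
def time_decompose(buckets, window):
    """Split an ordered list of planning buckets into consecutive windows."""
    return [buckets[i:i + window] for i in range(0, len(buckets), window)]


# Hypothetical 8-week planning horizon, nearest week first.
horizon = [f"week-{n}" for n in range(1, 9)]
windows = time_decompose(horizon, window=3)

# Process the windows in sequence, nearest periods first. The value
# stored is simply the order in which each bucket was processed; a real
# solver would plan the window using the results of earlier windows.
processing_order = {}
for win in windows:
    for bucket in win:
        processing_order[bucket] = len(processing_order)
```

With these assumed inputs the horizon falls into three windows, the last one shorter than the others because eight weeks do not divide evenly by three.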

Overall Supply Network

San Diego, San Francisco, Las Vegas, Portland, Los Angeles, Bakersfield, Seattle, Phoenix

- Sub-Problem 1 Product: A Locations: San Diego, Los Angeles

- Sub-Problem 2 Product: B Locations: Portland, Seattle, Phoenix

Each subproblem is the sub-network of that product.

This is an example taken from my book Supply Planning with MRP, DRP, and APS Software.
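The product decomposition above amounts to grouping the network by one master data element. A minimal sketch, assuming the product-to-location assignments from the example and a hypothetical helper named `decompose_by`:

```python
from collections import defaultdict

# (product, location) pairs taken from the example above.
product_locations = [
    ("A", "San Diego"), ("A", "Los Angeles"),
    ("B", "Portland"), ("B", "Seattle"), ("B", "Phoenix"),
]


def decompose_by(records, key_index, value_index):
    """Group records into subproblems keyed on one master data element."""
    groups = defaultdict(list)
    for record in records:
        groups[record[key_index]].append(record[value_index])
    return dict(groups)


# Key on the product (index 0) and collect its locations (index 1).
sub_problems = decompose_by(product_locations, key_index=0, value_index=1)
```

Each entry in `sub_problems` is the sub-network of one product. Locations that carry neither product, such as Las Vegas in the list above, fall into no subproblem in this sketch.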

On the other hand, resource decomposition would look like the following:

Overall Resource Network

San Diego Bottling Resource, San Diego Liquid Resource, Napa Valley Bottling Resource, Napa Valley Liquid Resource

- Sub-Problem 1 Product: A Resources: San Diego Bottling Resource, San Diego Liquid Resource

- Sub-Problem 2 Product: B Resources: Napa Valley Bottling Resource, Napa Valley Liquid Resource

As should be evident, one cannot decompose on both resources and products at once. Resource decomposition is generally employed when resource utilization is more important than the interaction among the company’s locations, and vice versa.

The Max Runtime

Now that we have covered the difference between parallelization and decomposition, we can move to the next stage of the analysis. Firstly, the SNP cost optimizer allows the setting of a maximum runtime. This is needed because, given present server technology and the size and complexity of the problems the optimizer is required to solve, the optimizer would keep running far beyond the point at which the business needs the planning results. Therefore employing a maximum runtime is always necessary. The optimizer takes this time, divides it by the number of subproblems, and provides the resulting amount of time to each subproblem. The only exception is when it solves a subproblem optimally before its allotted time is up, in which case it moves on to the next subproblem.

The SNP cost optimizer is unsophisticated in that it does not allot different amounts of time to differently sized subproblems. A subproblem with one location for a product receives the same amount of time as one with ten locations.
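The arithmetic can be made concrete with a small sketch. The `equal_split` function mirrors the behavior described above (maximum runtime divided evenly across subproblems). The `size_weighted_split` function is purely a hypothetical alternative, shown for contrast; it is not something the SNP optimizer offers. The 110-minute runtime and the location counts are assumed values.

```python
def equal_split(max_runtime, sizes):
    """Give every subproblem the same share of the maximum runtime."""
    share = max_runtime / len(sizes)
    return {name: share for name in sizes}


def size_weighted_split(max_runtime, sizes):
    """Hypothetical alternative: share runtime in proportion to size."""
    total = sum(sizes.values())
    return {name: max_runtime * size / total for name, size in sizes.items()}


# Assumed example: number of locations in each product's subproblem.
sizes = {"product A": 1, "product B": 10}
max_runtime = 110  # minutes, assumed

equal = equal_split(max_runtime, sizes)
weighted = size_weighted_split(max_runtime, sizes)
```

Under the equal split, the one-location subproblem and the ten-location subproblem each get 55 minutes. Under the hypothetical weighted split, they would get 10 and 100 minutes respectively.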

The Implication of What Has Been Covered Up To This Point

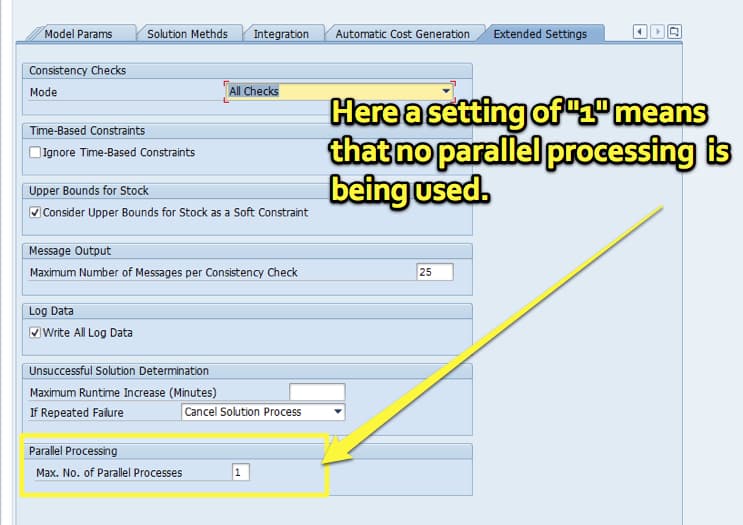

In summary, any custom decomposition method can be used as one sees fit, but it has nothing to do with parallelization. While both technical settings relate to runtime, no decomposition method will address the fact that the optimizer can still only address one of the server’s microprocessors. The other microprocessors sit idle.
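A rough elapsed-time model illustrates the point. It rests on simplifying assumptions that are mine, not SAP's: subproblems are independent, the total work stays the same however it is decomposed, and each subproblem goes to whichever processor frees up first. The runtimes are invented for the example.

```python
import heapq


def wall_clock(runtimes, processors):
    """Estimate elapsed time when subproblems are handed, in order,
    to whichever processor becomes free first."""
    finish_times = [0.0] * processors
    heapq.heapify(finish_times)
    for runtime in runtimes:
        earliest = heapq.heappop(finish_times)
        heapq.heappush(finish_times, earliest + runtime)
    return max(finish_times)


coarse = [30, 30, 30, 30]  # four subproblems, minutes each (assumed)
fine = [15] * 8            # same total work, decomposed more finely

one_core_coarse = wall_clock(coarse, processors=1)
one_core_fine = wall_clock(fine, processors=1)
four_cores = wall_clock(coarse, processors=4)
```

On one processor, both decompositions take 120 minutes in this model. Only when all four processors are usable does the elapsed time drop, here to 30 minutes.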

Conclusion

The evidence indicates that basic functionality of the SNP cost optimizer does not work and has not worked for at least a decade, possibly more. SAP has known that parallelization is not functioning and has done nothing to inform its customers of this fact. Even though there have been multiple APO releases since 2003, SAP has not removed the setting from the optimizer profile. Instead, it sits there, tempting companies to use it, and each of these companies has to go through the cycle of learning that it is not operational.

The second point of this article is that decomposition is not a substitute for parallelization. Decomposition does not increase processing capacity as parallelization does. Instead, it is a business-driven construct that allows the optimizer to logically divide the problem into subproblems, something that it must do to perform its work.

SNP already has decomposition types based on time, resources, and product, which all do, in fact, work. There is nothing to say that a more customized decomposition front end cannot be placed onto the optimizer. But it can never address the issue of non-functional parallelization in the SNP optimizer.