The Complicity of the Media in Pushing The AI Bubble

Executive Summary

- The IT media has helped push the AI bubble to feverish heights by applying almost no critical thinking to its AI coverage.

- We have assembled several quotations that illustrate the problem.

Introduction

These quotes provide insight into how the IT media actually covers AI.

Our References for This Article

If you want to see our references for this article and other related Brightwork articles, see this link.

The Quotes

“In recent years broader interest in topics like “machine learning” and “deep learning” has led to a deluge of this type of opportunistic journalism, which misrepresents research for the purpose of generating retweets and clicks – he calls it the “AI misinformation epidemic”. A growing number of researchers working in the field share Lipton’s frustration, and worry that the inaccurate and speculative stories about AI, like the Facebook story, will create unrealistic expectations for the field, which could ultimately threaten future progress and the responsible application of new technologies.” – The Guardian

And this one:

“In February 1946, when the school bus-sized, cumbersome Electronic Numerical Integrator and Computer (Eniac) was presented to the media at a press conference, journalists described it as an “electronic brain”, a “mathematical Frankenstein”, a “predictor and controller of weather” and a “wizard”. In an attempt to tamp down some of the hype around the new machine, renowned British physicist DR Hartree published an article in Nature describing how the Eniac worked in a straightforward and unsensational way.

Much to his dismay, the London Times published a story that drew heavily on his research titled An Electronic Brain: Solving Abstruse Problems; Valves with a Memory. Hartree immediately responded with a letter to the editor, saying that the term “electronic brain” was misleading and that the machine was “no substitute for human thought”, but the damage was done – the Eniac was forever known by the press as the “brain machine”. – The Guardian

And this one:

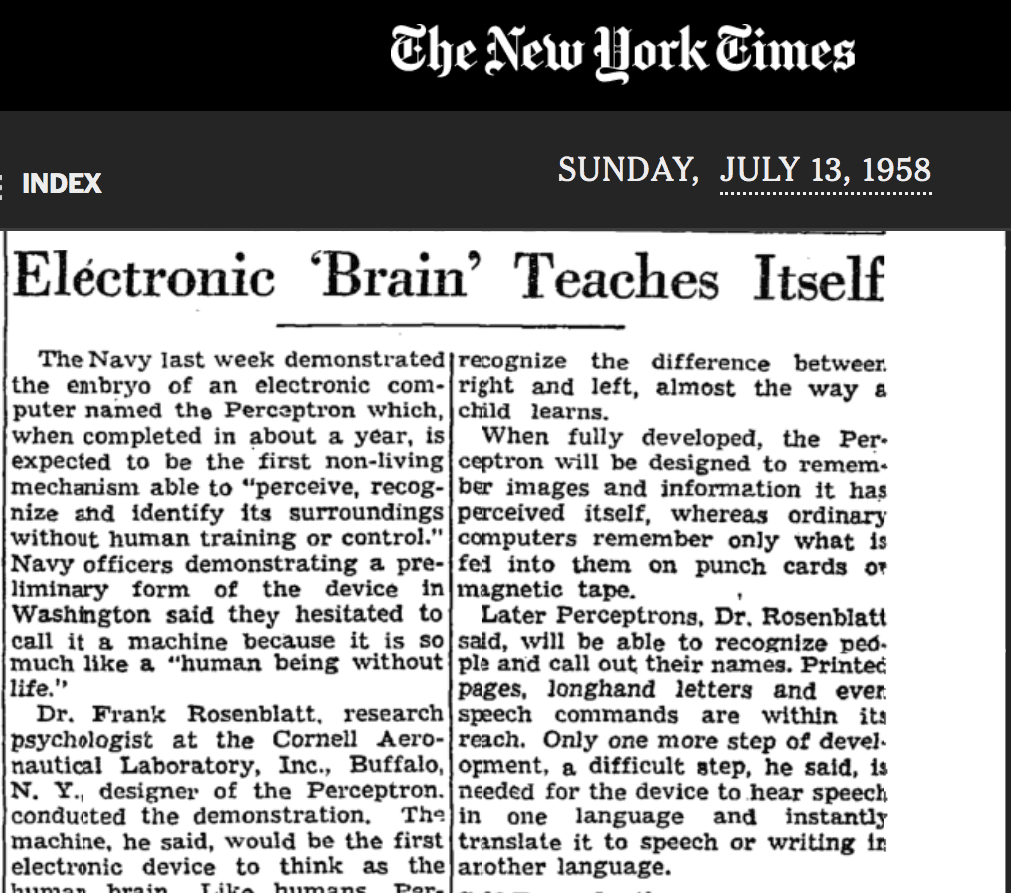

“It was a similar story in the United States after Frank Rosenblatt, an engineer at Cornell Aeronautical Laboratory, presented a rudimentary machine-learning algorithm called the “perceptron” to the press in 1958. While the “perceptron” could only be trained to recognize a limited range of patterns, the New York Times published an article claiming that the algorithm was an “electronic brain” that could “teach itself”, and would one day soon “be able to walk, talk, see, write, reproduce itself and be conscious of its own existence”. – The Guardian

This is the original passage from the New York Times:

“The Navy revealed the embryo of an electronic computer today that it expects will be able to walk, talk, see, write, reproduce itself and be conscious of its existence.

The embryo — the Weather Bureau’s $2,000,000 “704” computer– learned to differentiate between right and left after fifty attempts in the Navy’s demonstration for newsmen. The service said it would use this principle to build the first of its Perceptron thinking machines that will be able to read and write. It is expected to be finished in about a year at a cost of $100,000. Dr. Rosenblatt a research psychologist at the Cornell Aeronautical Laboratory, Buffalo, said Perceptrons might be fired to the planets as mechanical space explorers. Later Perceptrons will be able to recognize people and call out their name and instantly translate speech in one language to speech or writing in another language, it was predicted. Mr. Rosenblatt said in principle it would be possible to build brains that could reproduce themselves on an assembly line and which would be conscious of their existence.” – New York Times, 1958

On IBM Watson

“Watson’s Jeopardy triumph was a publicity bonanza that was worth millions. After its stunning victory, IBM announced that Watson’s question answering skills would be used for more important things…IBM has been deploying Watson in health care, banking, tech support, and other fields where massive data bases can be used to provide specific answers to specific questions.

However, I avoided using the word read because Watson does not know what words and phrases mean, like World War II and Toronto, nor does it understand words in context, like “its second largest.” Watson’s prowess is wildly exaggerated. Like many computer programs, Watson’s seeming intelligence is just an illusion. Watson’s performance is in many ways a deception designed to make a very narrowly defined set of skills seem superhuman. Imagine a massive library with 200 million pages of English words and phrases and a human who does not understand English, but has an infinite amount of time to browse through this library looking for matching words and phrases. Would we say that person is smart? Even Dave Ferrucci, the head of IBM’s Watson team, admitted, “Did we sit down when we built Watson and try to model human cognition? Absolutely not. We just tried to create a machine that could win at Jeopardy.” …unfortunately Watson is not nearly as generally powerful as it might seem. Almost 95 percent of Jeopardy! answers, as it turns out, are titles of Wikipedia pages. Winning at Jeopardy! is often just a matter of finding the right article.” – The AI Delusion

This raises the question of why IBM chose Jeopardy! to make a splash with Watson. It seems quite likely that IBM picked the best-known game show whose narrow range of answers fit the limits of its technology, and then used that success to make exaggerated claims about Watson’s suitability for other applications that are far more difficult to master than Jeopardy! A system that returns the title of a Wikipedia page has few applications outside of…well, playing Jeopardy!

“Thus far, IBM hasn’t even turned Watson into a robust virtual assistant. When we looked recently at IBM’s web page for such a thing, all we could find was a dated demo of Watson Assistant that was focused narrowly on simulating cars, in no way on par with the more versatile offerings from Apple, Google, Microsoft, or Amazon.” – The AI Delusion

Technological Illiteracy Among IT Journalists

“What Lipton finds most troubling, though, is not technical illiteracy among journalists, but how social media has allowed self-proclaimed “AI influencers” who do nothing more than paraphrase Elon Musk on their Medium blogs to cash in on this hype with low-quality, TED-style puff pieces. “Making real progress in AI requires a public discourse that is sober and informed,” Lipton says. “Right now, the discourse is so completely unhinged it’s impossible to tell what’s important and what’s not.”

“She adds that while many researchers stand to benefit from hype, as a writer who wants to critically examine these technologies, she only suffers from it. “There are few outlets interested in publishing nuanced pieces and few editors who have the expertise to edit them,” she says. “If AI researchers really care about being covered thoughtfully and critically, they should come together and fund a publication where writers can be suitably paid for the time that it takes to really dig in.” – The Guardian

Conclusion

Media coverage of AI is quite inaccurate. First, IT media outlets tend to repeat claims made by the companies they cover. Second, they have financial entanglements with many of the industry sources that promote AI. As a result, the IT media has been critical to inflating the AI bubble.