The Data Implications of AI and ML Projects

Executive Summary

- AI and ML projects are typically initiated without a proper analysis of the data preparedness for such projects.

- In these videos, we cover what to consider about the data side of the equation.

Introduction

Our References for This Article

If you want to see our references for this article and other related Brightwork articles, see this link.

Cap Gemini on AI

See how much emphasis Capgemini places on explaining data limitations and how much AI is presented as virtually limitless in its potential.

Part 2 of the Data Implications of AI and ML

Many AI projects have either totally failed or been greatly undermined by problems from the data side.

About IBM’s Presentation of Watson

Watson AI’s for medical analysis has been widely panned when actually used. IBM has been widely accused of making false claims of what Watson was able to do. Furthermore, IBM’s problems with Watson are even at the data level. That is, IBM has not even demonstrated much in the way of the capability of building integrated data sets to feed any AI that Watson may have.

AI Failures Due to a Lack of Data

This issue is covered in the article How Many AI and Machine Learning Projects Will Fail Due to a Lack of Data.

Issues with Data Labeling

Data labeling is one of the first steps necessary to make sense of the data. The lack of data labeling holding back AI projects is described in the following quotation.

The problem normally is not in the data itself but in the absence of labels for such data. Behind every modern achievement in AI field enormous efforts in labeling. For instance description of ImageNet (http://image-net.org/about-overview) includes the following quotation “Images of each concept are quality-controlled and human-annotated.”. This ImageNet consists of 14 million unique images. Even if the labeling of every photo took 1 minute, it means 250+ men-years of effort. This is a hidden price of AI success. When somebody spouts about AI in legal, finance, logistics, maintenance, it usually means years of involvements for domain experts or just another false claims. Basically about anybody can recognize a lemon on a photo, but what level of qualification will be required for a “cash gap” labeling? – Denis Myagkov

The amount of effort in data labeling is described as follows:

According to a report by analyst firm Cognilytica, about 80 percent of AI project time is spent on aggregating, cleaning, labeling, and augmenting data to be used in ML models. Just 20 percent of AI project time is spent on algorithm development, model training and tuning, and ML operationalization. – VentureBeat

This effort is highly manual, as is explained in the following quotation.

Banavar is seeing the growth of these types of jobs at IBM. “We are hiring people that we were not hiring even five years ago,” he said. “People who just sit down and label data.”

Why is this important? It’s for the machines to learn.

“Without labeling, you cannot train a machine with a new task,” he said. “Let’s say you want to train a machine to recognize planes, and you have a million pictures, some of which have planes, some of which don’t have planes. You need somebody to first teach the computer which pictures have planes and which pictures don’t have planes.” So IBM hires labelers, or outsources the work.

“This is a somewhat depressing job for the future,” said Walsh.

“All the impressive advances we see with deep learning have come about using what is called ‘supervised learning’ where the data is labelled ‘good’ or ‘bad,’ or ‘Bob’ and ‘Carol,'” said Walsh.

It’s a necessary part of machine learning. “We can’t do unsupervised learning as well if the data is unlabelled,” said Walsh. “The human brain is excellent at this task. And deep learning needs lots and lots of labelled data. It’s likely though a very repetitive and undemanding task.” – TechRepublic

The Software Category for Data Labeling

Naturally, companies are also trying to automate data labeling, which is explained in this quotation.

5) Automated data labeling is key. Solutions leveraging ML to automate labeling will be best-positioned to win because they won’t need to build a large workforce, train labelers, and deal with quality control to the same degree. Their solution will also offer a better margin structure for the customer and accelerated time to value.

The data labeling market was $1.5B in CY18 and is expected to grow to $5B in CY23, a 33% CAGR. The market for third-party data labeling solutions is $150M in CY18 growing to over $1B by CY23. According to Cognilytica Research, for every 1x dollar spent on third-party data labeling, 5x dollars are spent on internal data labeling efforts, over $750M in CY18, growing to over $2B by end of CY23. – Medium

How to Think of Data Labeling

The following quote explains how to think of data labeling.

A simple way to think about data labeling is to liken it to the process of converting crude oil to refined fuel. While data is the new currency in AI/ ML, quantity and availability of data is only part of the story. Supervised ML models cannot run on data that lacks targets, in the way a vehicle cannot run on raw petroleum. In fact, the lack of ability to scale labeled data or training data is often the biggest blocker to accelerated ML model development. – Alegion

This is also explained in the following quotation.

A massive elephant lives in the digitization room, and it’s one which frankly most data ingestion, AI and RPA solution providers completely ignore. This elephant represents unstructured data. – Antworks

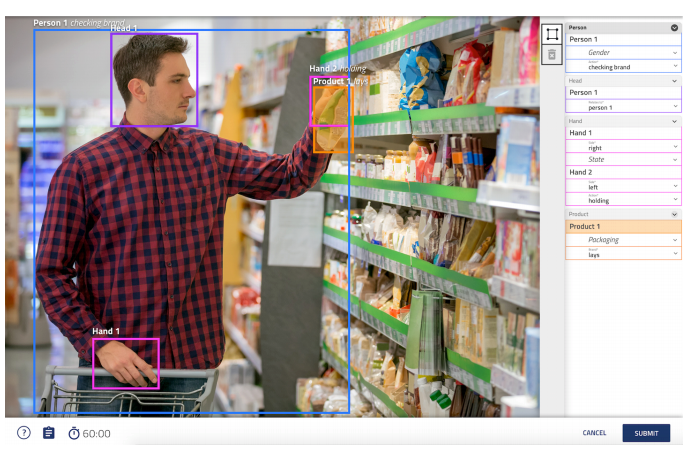

Data labeling of an image in software from Alegion. This image must be labeled to be used in any mathematical sense for analysis.

Supervised and Unsupervised Learning and Labeled Data

The power of unsupervised methods is widely touted recently, but the term unsupervised has become overloaded. The preferred term for using ML to harness the power of the vast amounts of data without requiring external data labeling is self-supervised learning2. This approach can be powerful, given a set of data containing meaningful associations or segmentations.

When the business needs the answers to these specific questions to derive value, teams can label the data after-the-fact, in order to embed the targets into the available data. The labeled data set enables data science teams to take the supervised approach and broaden their business applications. – Alegion

Conclusion

There is a lot of complex work involved in creating the data sets necessary to run AI and machine learning. Unfortunately, in the white-hot area of AI/ML marketing, there is little tolerance for communicating the reality around these topics.

But these are some clear results from the lack of emphasis on data before initiating AI/ML projects.

- AI/ML projects are being initiated without sufficient analysis of the data availability.

- This guarantees waste.

- There is little published on the limitations of data for AI/ML.

- Data labeling is set to become the next major area of discussion around AI/ML as this process comes before running algorithms.