The Statistical Falseness of Much AI, Data Science, and Big Data Results

Executive Summary

- AI, data science, and Big Data are frequently based upon false statistical relationships.

- These false relationships are only set to increase as the pressure mounts to obtain benefits from massive AI investments.

Introduction

AI, Big Data, and data science are promoting statistical relationships that are badly false. Google Flu, an attempt to use AI to predict the incidence of flu, illustrates one dimension of the problem.

We begin this article with the following quotation.

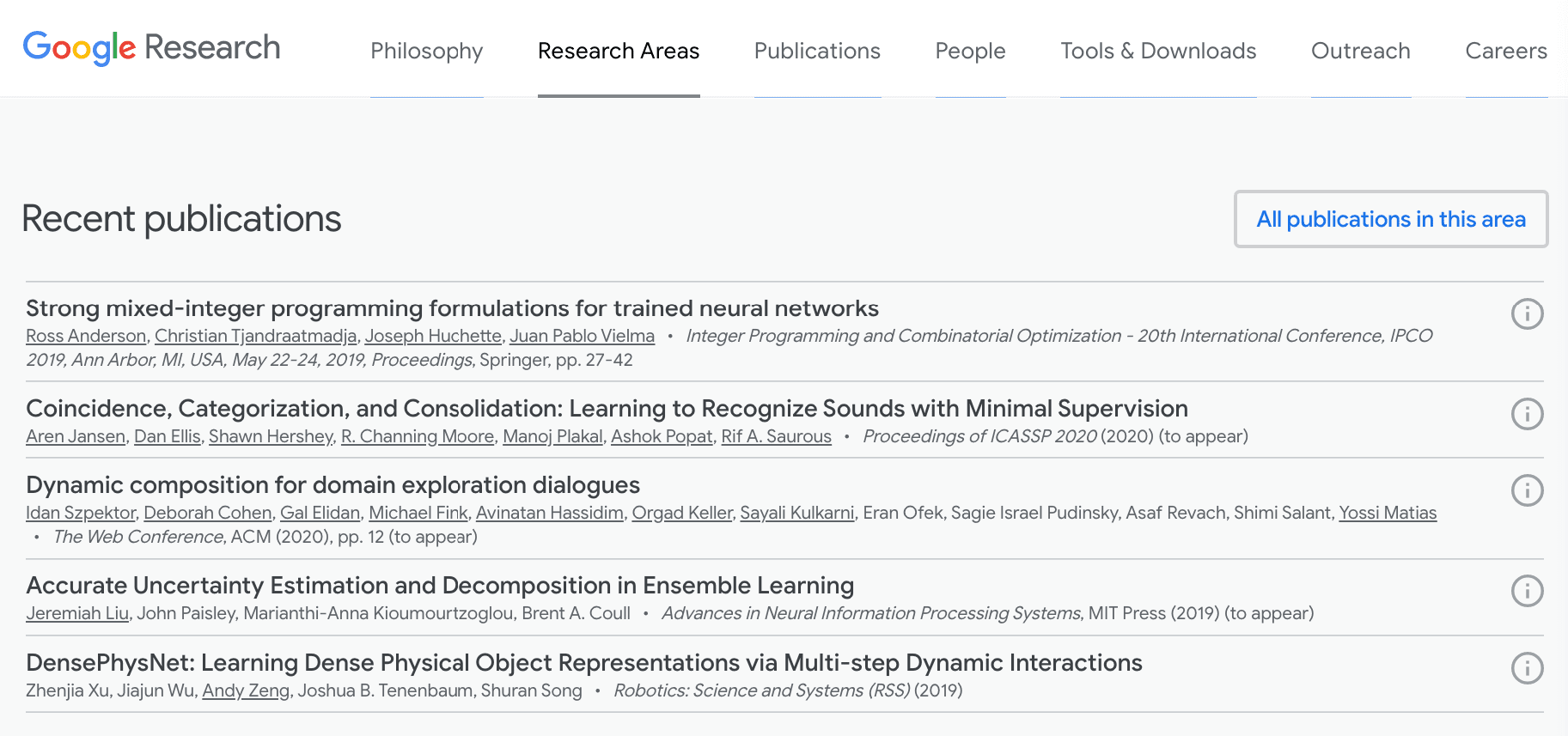

“In 2011, Google reported that its researchers had created an AI program called Google Flu that used Google search queries to predict flu outbreaks up to ten days before the Centers for Disease Control and Prevention (CDC). They boasted that, “We can accurately estimate the current level of weekly influenza activity in each region of the United States, with a reporting lag of about one day.” Their boast was premature.

Google’s data mining program looked at 50 million search queries and found the 45 key words that best fit 1,152 observations on the incidence of flu, using IP addresses to identify the states the searches were coming from.

In its 2011 report, Google said that its model was 97.5 percent accurate, meaning that the correlation between the model’s predictions and actual CDC data was .975 during the in-sample period. An MIT professor praised the model: “This seems like a really clever way of using data that is created unintentionally by the users of Google to see patterns in the world that would otherwise be invisible. I think we are just scratching the surface of what’s possible with collective intelligence.”

However, after issuing this report, Google Flu over-estimated the number of flu cases by 100 out of 108 weeks, by an average of nearly 100 percent. Out of sample, Google Flu was far less accurate than a simple model that predicted that the number of flu cases in the coming weeks will be the same as the number last week, two weeks ago, or even three weeks ago.” – The AI Delusion
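The gap between in-sample and out-of-sample accuracy described above can be reproduced with pure noise. The following is a minimal sketch, not Google's method: the function names, the number of candidate series, and the way the kept series are averaged are all assumptions made for illustration. It generates a target that is random by construction, searches a few thousand equally random candidate series for the ones that best fit the first half of the data, and then scores the resulting predictor on the second half.

```python
import math
import random


def pearson(a, b):
    """Pearson correlation of two equal-length sequences."""
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def mine_noise(n_candidates=2000, n_keep=10, n_train=50, n_test=50, seed=0):
    """Pick the best-fitting noise series in-sample, then score out of sample.

    All sizes are hypothetical defaults chosen so the sketch runs quickly.
    """
    rng = random.Random(seed)
    n = n_train + n_test
    # The target is pure noise, so no candidate has real predictive power.
    target = [rng.gauss(0, 1) for _ in range(n)]
    candidates = [[rng.gauss(0, 1) for _ in range(n)]
                  for _ in range(n_candidates)]

    # Rank candidates by absolute correlation on the training rows only.
    scored = [(pearson(c[:n_train], target[:n_train]), c) for c in candidates]
    scored.sort(key=lambda pair: abs(pair[0]), reverse=True)
    kept = scored[:n_keep]

    # Average the kept series, flipping each one so that it correlates
    # positively with the target in-sample.
    prediction = [
        sum((1 if r > 0 else -1) * c[i] for r, c in kept) / n_keep
        for i in range(n)
    ]
    in_sample = pearson(prediction[:n_train], target[:n_train])
    out_of_sample = pearson(prediction[n_train:], target[n_train:])
    return in_sample, out_of_sample
```

Calling `mine_noise()` returns the in-sample and out-of-sample correlations. Because every series is random, any in-sample fit is spurious, and the out-of-sample correlation should hover around zero regardless of how good the in-sample number looks.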

A natural question arises.

How did Google publicly report the high predictive power of its AI algorithm when it could have waited to know for sure what its predictive power was? Google is a highly respected technical entity. However, how trustworthy are they if they are lying about their projects?

It is all “sugar and spice and everything nice” at the Google AI website.

Google lists an enormous number of research areas related to its AI. However, is Google a research entity? Not to say they don’t publish research, but is Google dedicated to telling the truth about its research outcomes? Does Google lack a bias regarding the outcome of its AI research?

In Google We Trust?

The answer is that it isn’t, and it doesn’t. Google has already been caught rigging results against progressive political candidates like Tulsi Gabbard. Google prefers corrupt establishment candidates, which it supports with funds. Google does not want true progressive candidates who might attempt to do something like, say, regulate Google.

Gabbard’s lawsuit against Google was thrown out, substantially on the presumption that Google has no public responsibility to allow free speech on its “platform.” Google is regulated by essentially no one and can do what it likes.

Is this the company we want performing research, or posing as a research entity?

Google is a publicly traded company, and publicly traded companies do not have a good record of reporting accurately on their own research.

Google has an enormous number of publications, many of which are presented at conferences. Google is an immensely rich company and is known for getting prominent individuals to come to Google to speak for free. How will publications that want to be associated with Google’s powerful brand resist the influence of Google? History, such as the influence of pharmaceutical companies on research integrity, already tells us what the answer is.

Google Flu was also a public project, so its accuracy could be measured.

Most AI, data science, and Big Data projects are internal, and their claims cannot be verified. Media entities also show far more interest in publishing claims about AI (which is easy, as all one needs to do is record statements from the company’s marketing department) than in verifying those claims, which for private projects is impossible in any case. We cover in several articles how there has been very little post mortem of the false claims that led up to the first two AI bubbles. Why would there be any introspection or analysis of the false claims around the third AI bubble?

False Statistical Relationships

The problem with the interpretation and presentation of the relationship between machine learning and statistics is expressed in the following quotation.

“In 2017 Greg Ip, the chief economics commentator for the Wall Street Journal, interviewed the co-founder of a company that develops AI applications for businesses. Ip paraphrases the co-founder’s argument:

If you took statistics in college, you learned how to use inputs to predict an output, such as predicting mortality based on body mass, cholesterol and smoking. You added or removed inputs to improve the “fit” of the model.

Machine learning is statistics on steroids: It uses powerful algorithms and computers to analyze far more inputs, such as the millions of pixels in a digital picture, and not just numbers but images and sounds. It turns combinations of variables into yet more variables, until it maximizes its success on questions such as “is this a picture of a dog” or at tasks such as “persuade the viewer to click on this link.”

No! What statistics students should learn in college is that it is perilous to add and remove inputs simply to improve the fit.

The same is true of machine learning. Rummaging through numbers, images, and sounds for the best fit is mindless data mining — and the more inputs considered, the more likely it is that the selected variables will be spurious. The fundamental problem with data mining is that it is very good at finding models that fit the data, but totally useless in gauging whether the models are ludicrous. Statistical correlations are a poor substitute for expertise.

In addition to overfitting the data by sifting through a kitchen sink of explanatory variables, data mining algorithms can overfit the data by trying a wide variety of nonlinear models.” – The AI Delusion
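The claim that more inputs make spurious selections more likely can be put in numbers. The sketch below assumes the candidate inputs are tested independently at a fixed significance level, which is a simplification; the function name `prob_spurious` is hypothetical. Under that assumption, the chance that at least one of `m` irrelevant inputs looks significant is one minus the chance that none of them does.

```python
def prob_spurious(m, alpha=0.05):
    """Chance that at least one of m irrelevant inputs passes a test at level alpha.

    Assumes the m tests are independent, which real inputs rarely are.
    """
    return 1 - (1 - alpha) ** m


if __name__ == "__main__":
    for m in (1, 10, 100):
        print(m, round(prob_spurious(m), 3))
```

With ten irrelevant inputs the chance of at least one false hit is already about 40 percent, and with one hundred it exceeds 99 percent.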

This is further explained by the following quotation.

“When I applied a stepwise regression procedure, I found that, if 100 variables were considered, 5 true and 95 fake, the variables selected by the stepwise procedure were more likely to be fake variables than real variables. When I increased the number of variables being considered to 200 and then 250, the probability that an included variable was a true variable fell to 15 percent and 7 percent, respectively. Most of the variables that ended up being included in the stepwise model were there because of spurious correlations which are of no help and may hinder predictions using fresh data.

Stepwise regression is intended to help researchers deal with Big Data but, ironically, the bigger the data, the more likely stepwise regression is to be misleading. Big Data is the problem, and stepwise regression is not the solution.” – The AI Delusion
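The experiment described in the quotation can be approximated with a small simulation. This is a sketch under stated assumptions, not the procedure used in the quoted study: it uses forward selection only, admits a variable when its t-statistic exceeds 2 (roughly the 5 percent level), and the sample size, coefficients, and noise level are illustrative choices. All function and parameter names are hypothetical. Forward selection is implemented by orthogonalizing the remaining candidates against each chosen variable, which picks the same variable as refitting a least-squares model at each step.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def center(v):
    mean = sum(v) / len(v)
    return [x - mean for x in v]


def remove_component(v, basis):
    """Return v minus its least-squares projection onto basis."""
    scale = dot(v, basis) / dot(basis, basis)
    return [x - scale * b for x, b in zip(v, basis)]


def forward_stepwise(columns, y, t_threshold=2.0):
    """Forward selection: repeatedly add the candidate with the largest |t|."""
    n = len(y)
    resid_y = center(y)
    resid_x = {j: center(col) for j, col in enumerate(columns)}
    selected = []
    while resid_x:
        df = n - len(selected) - 2  # residual degrees of freedom after adding one
        if df < 1:
            break
        yy = dot(resid_y, resid_y)
        best_j, best_t = None, 0.0
        for j, x in resid_x.items():
            norm = math.sqrt(dot(x, x) * yy)
            if norm == 0.0:
                continue
            # Partial correlation with y, clamped to avoid division by zero.
            r = max(-0.999999, min(0.999999, dot(x, resid_y) / norm))
            t = abs(r) * math.sqrt(df / (1 - r * r))
            if t > best_t:
                best_j, best_t = j, t
        if best_t < t_threshold:
            break
        basis = resid_x.pop(best_j)
        selected.append(best_j)
        resid_y = remove_component(resid_y, basis)
        resid_x = {j: remove_component(x, basis) for j, x in resid_x.items()}
    return selected


def simulate(n_obs=100, n_true=5, n_fake=95, trials=5, seed=1, noise_sd=3.0):
    """Count how many true and fake variables forward selection admits."""
    rng = random.Random(seed)
    true_hits = fake_hits = 0
    for _ in range(trials):
        columns = [[rng.gauss(0, 1) for _ in range(n_obs)]
                   for _ in range(n_true + n_fake)]
        # Only the first n_true columns influence y; the rest are pure noise.
        y = [sum(columns[j][i] for j in range(n_true)) + rng.gauss(0, noise_sd)
             for i in range(n_obs)]
        chosen = forward_stepwise(columns, y)
        true_hits += sum(1 for j in chosen if j < n_true)
        fake_hits += sum(1 for j in chosen if j >= n_true)
    return true_hits, fake_hits
```

Calling `simulate()` returns the number of true and fake variables selected across all trials. The exact counts depend on the assumed noise level and seed, but raising `n_fake` gives the procedure more noise variables to mistake for signal, which is the effect the quotation describes.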

The Problem with Proxies

The following describes the problems with the indicators or proxies that are selected on data modeling projects.

“When modelers can’t measure something directly, they use proxies — in fact, it’s virtually always true that the model uses proxies. We can’t measure someone’s interest in a website, but we can measure how many pages they went to and how long they spent on each page, for example. That’s usually a pretty good proxy for their interest, but of course there are exceptions. Note that we wield an enormous amount of power when choosing our proxies; this is when we decide what is and isn’t counted as “relevant data.” Everything that isn’t counted as relevant is then marginalized and rendered invisible to our models. In general, the proxies vary in strength, they can be quite weak. Sometimes this is unintentional or circumstantial — doing the best with what you have — and other times it’s intentional — a part of a larger political model. Let’s take on a third example. When credit rating agencies give AAA ratings to crappy mortgage derivatives, they were using extremely weak proxies. Specifically, when new kinds of mortgages like the no interest, no job “NINJA” mortgages were being pushed onto people, packaged, and sold, there was of course no historical data on their default rates. The modelers used, as a proxy, historical data on higher quality mortgages instead. The models failed miserably.

There’s lots at stake here: a data team will often spend weeks if not months working on something that is optimized on a certain definition of accuracy when in fact the goal is to stop losing money. Framing the question well is, in my opinion, the hardest part about being a good data scientist.” – On Being a Data Skeptic

Companies Classified as AI That Are Not Using AI

Many startups classified as AI companies show no evidence of actually using AI, as the following quotation explains.

MMC studied some 2,830 AI startups in 13 EU countries to come to its conclusion, reviewing the “activities, focus, and funding” of each firm.

“In 40 percent of cases we could find no mention of evidence of AI,” MMC head of research David Kelnar, who compiled the report, told Forbes. Kelnar says that this means “companies that people assume and think are AI companies are probably not.”

As noted by Forbes, the claim that these startups are “AI companies” does not necessarily come from the firms themselves. Third-party analytic sites are often responsible for the classification, and it’s not clear from MMC’s report what percent of fake AI startups it identified were actively misleading their customers.

But the report’s findings show that there are clear incentives for companies not to speak up if they’re misclassified. The term “artificial intelligence” is evidently catnip to investors, and MMC found that startups that claim to work in AI attract between 15 and 50 percent more funding compared to other companies. – The Verge

Conclusion

There is an enormous number of “AI” projects ongoing. Many individuals and companies are more focused on trying to deliver what has been claimed for AI than on verifying whether those claims are true. Statistically false relationships are easy to find in big data sets and can help justify projects.

AI projects are getting long in the tooth now and are under pressure to produce results.

Some false statistical relationships are simply errors, a product of the overstated statistical knowledge found in so many “data science” resources. Others, however, are presented as valid relationships when they are known to be untrue. The primary motivation is to meet the extraordinarily exaggerated claims that salespeople made to help sell AI projects. Consider how many companies have sold AI projects.

The amount of false information contained in the marketing literature of consulting firms like Capgemini is extensive. Does anyone think that Capgemini, IBM, Accenture, Wipro, etc. would hesitate for a moment to lie to their clients and to the general market about statistical relationships that they found from AI projects?