What Happened to the Second AI Bubble’s Expert Systems?

Executive Summary

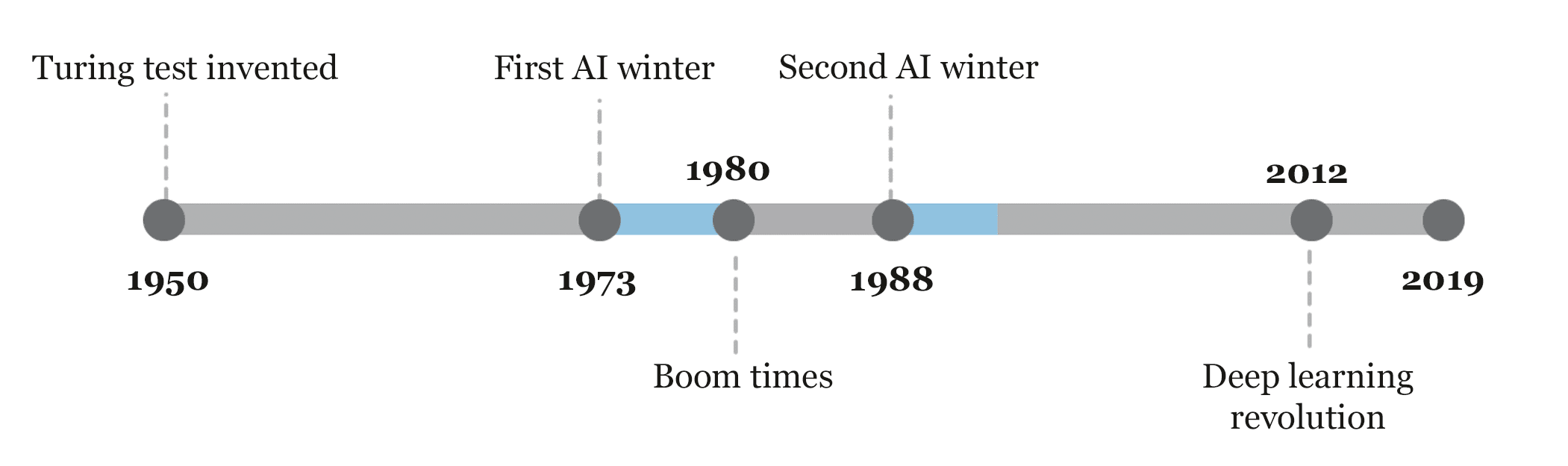

- Most people who work with or read about AI know of the first and second AI bubbles and the winters that followed them.

- The second AI bubble was centered on expert systems. So what happened to them?

Introduction

The first AI bubble was based upon highly inaccurate projections, made by proponents like Marvin Minsky, of what could be accomplished in generalized intelligence or Strong AI. The second AI bubble was about AI research switching away from generalized intelligence and towards something called expert systems, an area that is, for some reason, barely discussed in the present day, at the time of the third AI bubble.

Our References for This Article

If you want to see our references for this article and other related Brightwork articles, see this link.

Graphic from Sebastian Schuchmann.

The third AI bubble (the one we are presently in) is driven not by generalized intelligence or expert systems but by neural networks/deep learning.

What is an Expert System?

Wikipedia describes an expert system as follows.

In artificial intelligence, an expert system is a computer system that emulates the decision-making ability of a human expert. Expert systems are designed to solve complex problems by reasoning through bodies of knowledge, represented mainly as if–then rules rather than through conventional procedural code.

The first expert systems were created in the 1970s and then proliferated in the 1980s. Expert systems were among the first truly successful forms of artificial intelligence (AI) software. An expert system is divided into two subsystems: the inference engine and the knowledge base. The knowledge base represents facts and rules. The inference engine applies the rules to the known facts to deduce new facts. Inference engines can also include explanation and debugging abilities.
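The two subsystems named in the quotation can be sketched in a few lines. The following is a minimal illustration, not a reconstruction of any historical product: the `Rule` type, the `forward_chain` function, and the car-diagnosis rules are all hypothetical names and data invented for this example. It assumes the simplest style of inference engine, forward chaining, in which rules are applied repeatedly until no new facts appear.

```python
from typing import FrozenSet, List, NamedTuple, Set


class Rule(NamedTuple):
    """An if-then rule: if every condition is a known fact, add the conclusion."""
    conditions: FrozenSet[str]
    conclusion: str


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Inference engine: apply the rules to the known facts until nothing new is deduced."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            # A rule fires when all of its conditions are already known.
            if rule.conditions <= known and rule.conclusion not in known:
                known.add(rule.conclusion)
                changed = True
    return known


# Knowledge base: hypothetical rules, written by hand for illustration only.
RULES = [
    Rule(frozenset({"engine_cranks", "engine_does_not_start"}), "suspect_fuel_or_spark"),
    Rule(frozenset({"suspect_fuel_or_spark", "fuel_gauge_empty"}), "diagnosis_out_of_fuel"),
]

# Hypothetical starting facts supplied by the user of the system.
INITIAL_FACTS = {"engine_cranks", "engine_does_not_start", "fuel_gauge_empty"}

derived = forward_chain(INITIAL_FACTS, RULES)
print(sorted(derived - INITIAL_FACTS))
```

In this sketch, every conclusion the system can reach was typed in ahead of time by a person. Nothing is learned from data, and a fact that matches no rule simply produces no result.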

Upon reading the description, it almost appears as if expert systems were not AI at all. And expert systems were successful?

How is that the case?

In decades of IT consulting, I never witnessed an expert system. The term expert system went entirely out of vogue after the 2nd AI bubble popped. Examples of expert systems that I researched were little more than domain expertise databases.

Something else left out is why the focus moved to expert systems — which were “baby systems” compared to what was promised during the first AI bubble. Marvin Minsky and other AI experts promised full generalized intelligence sometime around the 1980s. All that had to be done was for computers to model the human brain — which, according to the major proponents of the first AI bubble, worked exactly like a computer.

Are SAP, Siebel, and Oracle Expert Systems?

The following quote is also from Wikipedia. It isn’t easy to find much substance written on expert systems, and in reviewing the web pages on expert systems, we found the most substantial content on Wikipedia. Still, there is a problem with this quotation.

In the 1990s and beyond, the term expert system and the idea of a standalone AI system mostly dropped from the IT lexicon. There are two interpretations of this. One is that “expert systems failed”: the IT world moved on because expert systems did not deliver on their over hyped promise. The other is the mirror opposite, that expert systems were simply victims of their success: as IT professionals grasped concepts such as rule engines, such tools migrated from being standalone tools for developing special purpose expert systems, to being one of many standard tools. Many of the leading major business application suite vendors (such as SAP, Siebel, and Oracle) integrated expert system abilities into their suite of products as a way of specifying business logic – rule engines are no longer simply for defining the rules an expert would use but for any type of complex, volatile, and critical business logic; they often go hand in hand with business process automation and integration environments.(Emphasis added)

Yes, the first interpretation is accurate, and the second is not.

Again, expert systems or anything like expert systems have not been a topic of conversation. And SAP and Siebel are programmed functionality. How is running MRP, recording an accounts receivable transaction, or displaying the sales forecast an expert system?

It isn’t.

But the person who made this entry into Wikipedia appears to have an incentive to try to define “expert system” so broadly as to be meaningless. This goes along with a long-term pattern, not just in AI, of justifying failed investments. SAP ECC and Siebel CRM are designed to record transactions. Their only “decision support,” if it can be called that, is the ability to view reports. But is a report an expert system? If so, why don’t visualization vendors like Tableau or Power BI use the term expert system in their marketing collateral?

AI is when the software can respond to changes. It is not when it is explicitly programmed to provide functionality for data input. In the article Why Train Neural Networks When One Can Perform Preset Programming, we cover the distinction between preset programming and machine learning.
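The distinction can be shown with a toy contrast. This is only a sketch under stated assumptions: the function names, the order values, and the fixed cutoff of 1000 are all hypothetical, and the "learning" step is deliberately the simplest one possible (placing a cutoff midway between the averages of two labeled groups), not a neural network.

```python
from statistics import mean
from typing import List, Tuple


def preset_flag(order_value: float) -> bool:
    """Preset programming: the cutoff is fixed by the programmer and never changes."""
    return order_value > 1000.0  # hypothetical hard-coded cutoff


def learn_threshold(examples: List[Tuple[float, bool]]) -> float:
    """Toy learning step: place the cutoff midway between the two group averages."""
    flagged = [value for value, label in examples if label]
    normal = [value for value, label in examples if not label]
    return (mean(flagged) + mean(normal)) / 2


# Hypothetical labeled examples: (order value, whether it was flagged for review).
old_data = [(200.0, False), (400.0, False), (1600.0, True), (1800.0, True)]
new_data = [(200.0, False), (400.0, False), (800.0, True), (1000.0, True)]

old_cutoff = learn_threshold(old_data)
new_cutoff = learn_threshold(new_data)

# The learned cutoff moves when the data changes; the preset rule does not.
print(old_cutoff, new_cutoff)
print(preset_flag(900.0), 900.0 > new_cutoff)
```

When the labeled examples shift, the learned cutoff shifts with them, while `preset_flag` keeps giving the same answer until someone edits the code.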

If applications like those from SAP, Oracle, or Salesforce count as expert systems (Salesforce, for example, has Einstein, which is some gussied-up AI, but that is not what this article refers to), then the term expert system does not have any real meaning.

Conclusion

Expert systems appear to be a type of programmed domain expertise. They were a huge step back from the first AI bubble’s claims that AI would lead to a virtually conscious intelligence by the 1980s.

What is curious is that while the second AI bubble is well known, there is little discussion of the fact that the second AI bubble’s primary artifact appears to have not been AI at all. We have reviewed some comments suggesting that perhaps expert systems were subsumed into other systems or became a competitive advantage within companies, and that companies don’t discuss their competitive advantages. That explanation is hard to accept: try to keep a vendor or consulting firm quiet about their successes with anything within their customers.

What is also curious is that we found no commentary suggesting that perhaps expert systems were nothing more than marketing fluff. In our research into the first AI bubble, we found many false claims, with expert systems being just another in a long line of false information provided by those who worked in the AI industry, including some of the most prestigious academics like Marvin Minsky.

Now, when we turn our attention to the second AI bubble, we again find vaporware and, as with the first AI bubble, a general disinterest in discussing what happened to the promises made for these systems. In nearly all the coverage of expert systems, those who cover the topic mention that expert systems were the second phase of AI, but there is almost no coverage of the fact that the claims made by proponents of expert systems were not true. The 2nd AI bubble popped because what was delivered by expert systems was very far from what was promised. Ever since the origin of AI in the 1950s, there appears to have been a constant orientation to cover up AI’s false claims.

The major problem is that journalists working for significant establishment media don’t know anything about AI/ML and tend to gullibly repeat whatever they are told by the AI expert they are pointed to. These AI experts tend to have significant financial biases in favor of AI. Note how McDonald’s received positive coverage by claiming they were adding AI to their menus by acquiring an AI company, as we cover in the article How Awful Was the Coverage of the McDonald’s AI Acquisition?

We are already predicting a pop of the third AI bubble, which is based on neural networks and was first triggered by IBM’s win at Jeopardy!

When this third bubble pops, will there be as little interest in researching the claims made by those who helped promote the bubble? Will there be a fourth AI bubble, where, just as with the 2nd and 3rd AI bubbles, the history of false claims of the previous bubbles is swept under the carpet? History says yes. As we cover in the article Why Couldn’t Gorbachev Figure Out The Strategic Defense Initiative or Star Wars, even outside of IT, there is a long-term pattern of not accurately critiquing failure.