What is the Definition of Forecast Accuracy?

Executive Summary

- Forecast accuracy has a simple definition but many important details.

- We cover the definition of forecast accuracy and its implications.


Introduction

Understanding and measuring forecast error is critical to improving forecast accuracy, but few people actually understand how measuring forecast accuracy or forecast error works. In this article, we explain some of the most important and least discussed areas of forecast accuracy and forecast error measurement.


Why Understand The Definition of Forecast Accuracy?

It is crucial to comprehend forecast error because it provides the feedback needed to improve forecast accuracy.

Forecast error only appears easy to understand. Most people who work with forecast error can be caught off guard by the details of the very measurement they rely upon.

The following realities apply to forecast error or forecast accuracy measurement.

Inconvenient Fact #1: Forecast Accuracy or Error Measurement is Far More Than What is Generally Explained

The phrase “the devil is in the details” certainly applies to forecast accuracy. Forecast error or accuracy is deceptive: it seems easy to understand but isn’t. Most books that cover forecast accuracy measurement are satisfied to stop at the forecast error math. In fact, most of the commonly used forecast error calculation methods (MAPE, MAD, RMSE, etc.) are not very effective at measuring error. Moreover, the forecast error calculation method is only one of a number of dimensions of forecast accuracy that must be understood.

Inconvenient Fact #2: Most People That Discuss Forecast Accuracy Do Not Understand the Topic

In our experience, most people lack the attention to detail needed to understand forecast accuracy in its full form, and no matter how many times it is explained to them, they will never actually understand it. This includes the majority of the general population and a sizable percentage of those who work in forecasting.

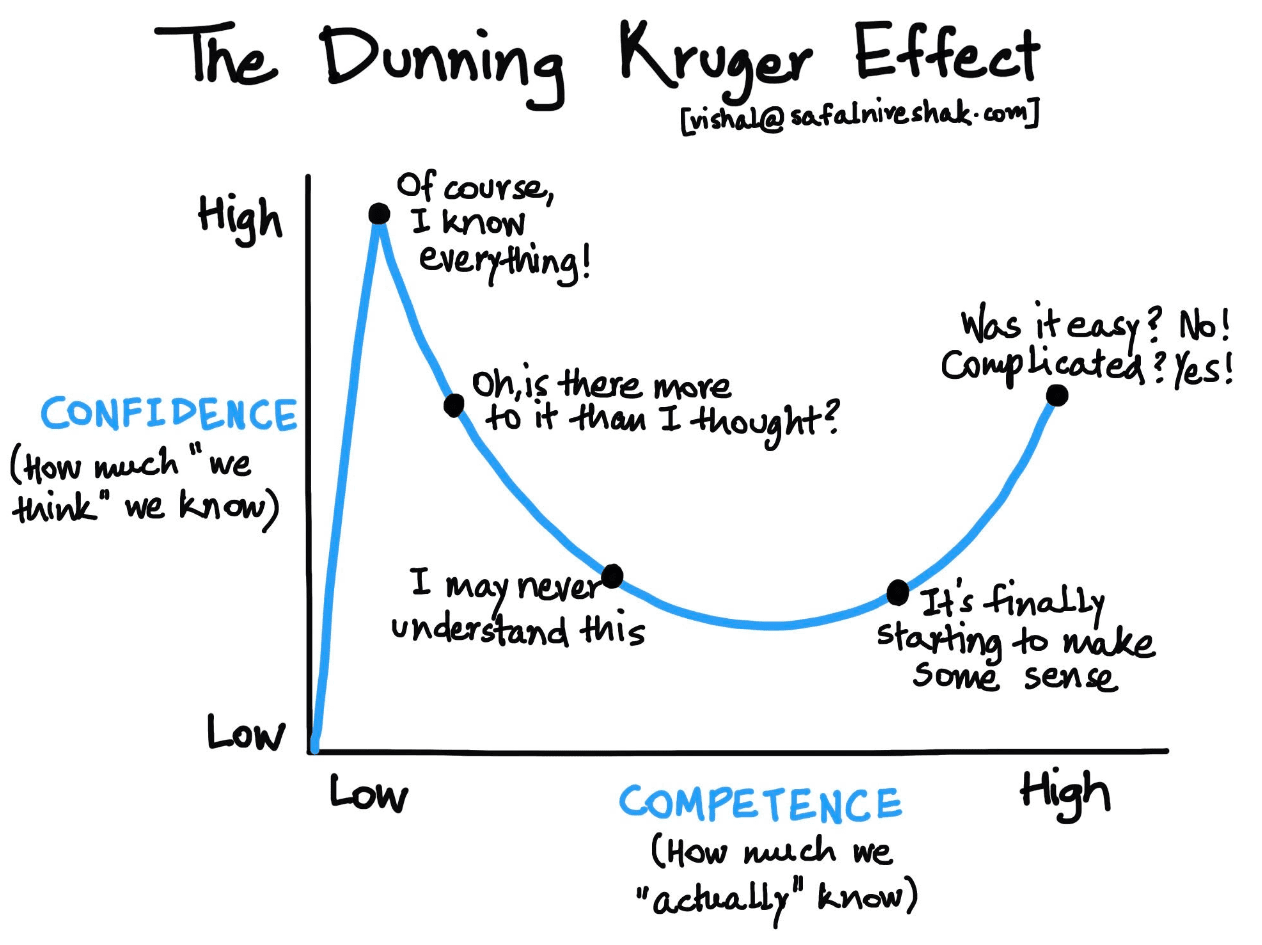

Because executives work at such a high level and are so unaccustomed to getting into details, extremely few of them ever understand forecast accuracy. The problem is that executives fund forecast improvement projects, but the improvement is measured using forecast accuracy measurements that they do not understand. This is a bit like science research funding, where research is funded by individuals who don’t understand science and can’t differentiate between good and bad science. Both executives and the top administrators at science funding entities suffer from what is known as the Dunning-Kruger effect: knowing so little about a topic that one does not understand how little one knows.

Executives sit in the first position here. The vast majority of executives have never worked in forecasting and have never calculated a forecast error. If you send this article to them, they will say they have read it to show you they are smart, but in fact they will not read it.

An Effective Definition of Forecast Accuracy

At a high level, forecast accuracy is the difference between the forecast and what actually happened. However, let us elaborate on this general definition.

Item #1: The Difference Between The Forecasted Values and Actual Values

Forecast accuracy is the degree of difference between the forecasted and the actual values in the agreed-upon forecasting bucket (weekly, monthly, quarterly, etc.). The size of the measurement bucket completely changes the forecast error. A forecast error measured on a weekly bucket will generally be higher than the error of the same forecast measured on a monthly bucket, because over-forecasts and under-forecasts in individual weeks partly offset one another when they are summed into a month.
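A minimal sketch of the bucket effect, using invented weekly numbers for a single product. The helper names `weighted_ape` and `rebucket` are hypothetical, and the error formula (total absolute error divided by total actuals) is only one of several that could be used. In this data, weekly over-forecasts and under-forecasts partly offset when the weeks are summed into four-week months, so the same forecast shows a different error on each bucket.

```python
def weighted_ape(forecasts, actuals):
    """Total absolute error divided by total actuals (a volume-weighted MAPE)."""
    total_abs_error = sum(abs(f - a) for f, a in zip(forecasts, actuals))
    return total_abs_error / sum(actuals)


def rebucket(values, size):
    """Sum consecutive groups of `size` values into larger buckets."""
    return [sum(values[i:i + size]) for i in range(0, len(values), size)]


# Invented data: 8 weeks, treated here as two 4-week "months".
weekly_forecast = [100, 100, 100, 100, 100, 100, 100, 100]
weekly_actual = [80, 120, 90, 120, 130, 70, 105, 85]

weekly_error = weighted_ape(weekly_forecast, weekly_actual)
monthly_error = weighted_ape(rebucket(weekly_forecast, 4),
                             rebucket(weekly_actual, 4))

print(f"weekly bucket error:  {weekly_error:.2%}")
print(f"monthly bucket error: {monthly_error:.2%}")
```

Neither number is wrong. They are measurements on different buckets, which is why the bucket has to be stated along with the error.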

Item #2: Can Only Be Known Historically

Forecast accuracy is never known until the event has passed. This is why all forecast accuracy measurement is historical.

Item #3: Future Error Probabilities

Future forecast accuracy can only be described in terms of accuracy probability. This accuracy probability is based upon historical accuracy.
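One simple way to express this, sketched under the assumption that the future behaves like the measured past: count how often the historical error stayed within a tolerance and treat that share as a rough probability. The error values and the `share_within` helper below are invented for illustration.

```python
# Invented history: absolute percentage error for the last 10 monthly buckets.
historical_abs_pct_errors = [0.10, 0.25, 0.05, 0.40, 0.15,
                             0.30, 0.20, 0.12, 0.08, 0.35]


def share_within(errors, tolerance):
    """Fraction of historical periods whose error was at or below `tolerance`."""
    return sum(1 for e in errors if e <= tolerance) / len(errors)


# Rough empirical probability that the error stays within 20%.
p_within_20 = share_within(historical_abs_pct_errors, 0.20)
print(f"share of periods with error <= 20%: {p_within_20:.0%}")
```

The result is only as good as the history behind it. With ten observations, it is a rough indication rather than a precise probability.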

Weighted Forecast Accuracy 1

| Product | Forecast | Actual | 6 Month Average Forecast | Unweighted Accuracy | Weighted Accuracy |
|---|---|---|---|---|---|
| Product B | 20 | 5 | 20 | 25% | .045 |
| Product A | 200 | 150 | 200 | 75% | 1.36 |
| Average | | | | 50% | 70% |

Here is a sample forecast error/accuracy measurement. Notice that the measurement bucket is declared, and that it is monthly. The measurement bucket is nearly always the planning bucket.
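The figures in the table above can be reproduced with a short sketch. Two assumptions are ours rather than stated in the table: accuracy is taken as 1 − |forecast − actual| / forecast, and each product's weight is its 6-month average forecast divided by the mean 6-month average forecast across products (110 here). Both assumptions match the table's numbers, but other definitions could as well.

```python
rows = [
    # (product, forecast, actual, six_month_avg_forecast), taken from the table
    ("Product B", 20, 5, 20),
    ("Product A", 200, 150, 200),
]


def accuracy(forecast, actual):
    """Assumed definition: 1 minus absolute error as a share of the forecast."""
    return 1 - abs(forecast - actual) / forecast


# Mean of the 6-month average forecasts, used to scale each product's weight.
mean_volume = sum(volume for _, _, _, volume in rows) / len(rows)

unweighted = [accuracy(f, a) for _, f, a, _ in rows]
weighted = [acc * volume / mean_volume
            for acc, (_, _, _, volume) in zip(unweighted, rows)]

unweighted_average = sum(unweighted) / len(unweighted)
weighted_average = sum(weighted) / len(weighted)

print(f"unweighted average accuracy: {unweighted_average:.0%}")
print(f"weighted average accuracy:   {weighted_average:.0%}")
```

The weighted figure is pulled toward Product A because it carries ten times the volume of Product B, which is the point of weighting.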

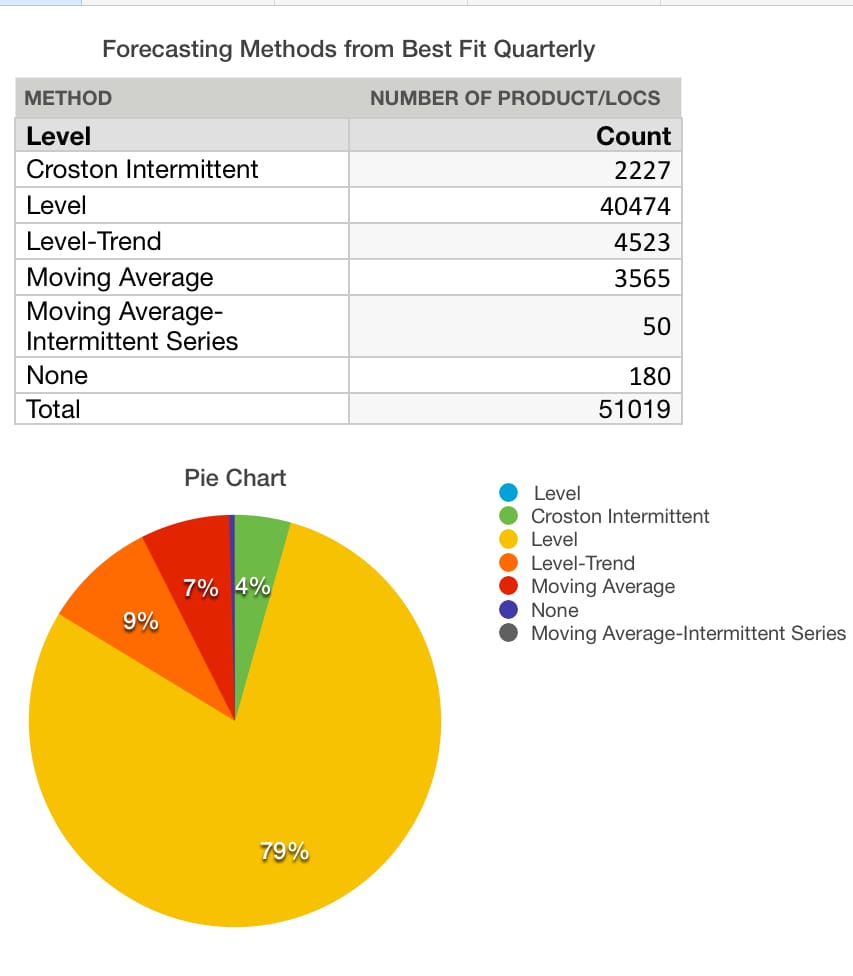

Results of An Analysis of The Demand History at One Company

Here is an example of an analysis (best fit) performed as a test outside of the company’s planning bucket. The planning bucket at this company was monthly. However, we wanted to test what would happen to model selection if the planning bucket was switched to quarterly. Changing the planning bucket is valuable for analysis. However, normally the error bucket is the planning bucket.

And this gets into another question.

What is the Correct Planning Bucket for Error Measurement?

The correct error measurement bucket or interval is the lead time of the product location combination. The error should be the “forecast over lead time.”

This is because the forecast is used to commit to stock a product at a particular location over the product’s lead time at that location.

This topic is rarely discussed because it is on the esoteric side, but it is true. However, it is too complicated to implement in practice because a product database contains a wide variety of lead times. Therefore, in most cases, the forecast lead time is not discussed, and the forecast error is measured over an arbitrary unified interval, which is normally a month.
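To make "forecast over lead time" concrete, here is a hedged sketch in which each product-location carries its own lead time, expressed in planning buckets. The `ProductLocation` class, the `lead_time_error` function, and all numbers are hypothetical. The error compares cumulative forecast against cumulative actuals over the lead time window, rather than bucket by bucket.

```python
from dataclasses import dataclass


@dataclass
class ProductLocation:
    name: str
    lead_time_periods: int  # lead time expressed in planning buckets
    forecast: list          # forecast per bucket, as made at time zero
    actual: list            # actual demand per bucket


def lead_time_error(pl):
    """Absolute percentage error of cumulative demand over the lead time."""
    horizon = pl.lead_time_periods
    forecast_total = sum(pl.forecast[:horizon])
    actual_total = sum(pl.actual[:horizon])
    return abs(forecast_total - actual_total) / actual_total


# Same forecast and actuals, different lead times (invented values).
items = [
    ProductLocation("Product A at Location 1", 1, [100, 100, 100], [130, 80, 95]),
    ProductLocation("Product B at Location 2", 3, [100, 100, 100], [130, 80, 95]),
]

errors = {pl.name: lead_time_error(pl) for pl in items}
for name, err in errors.items():
    print(f"{name}: {err:.1%} error over lead time")
```

The two items share identical demand and forecast, yet their errors differ because the window that matters for the stocking commitment differs.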

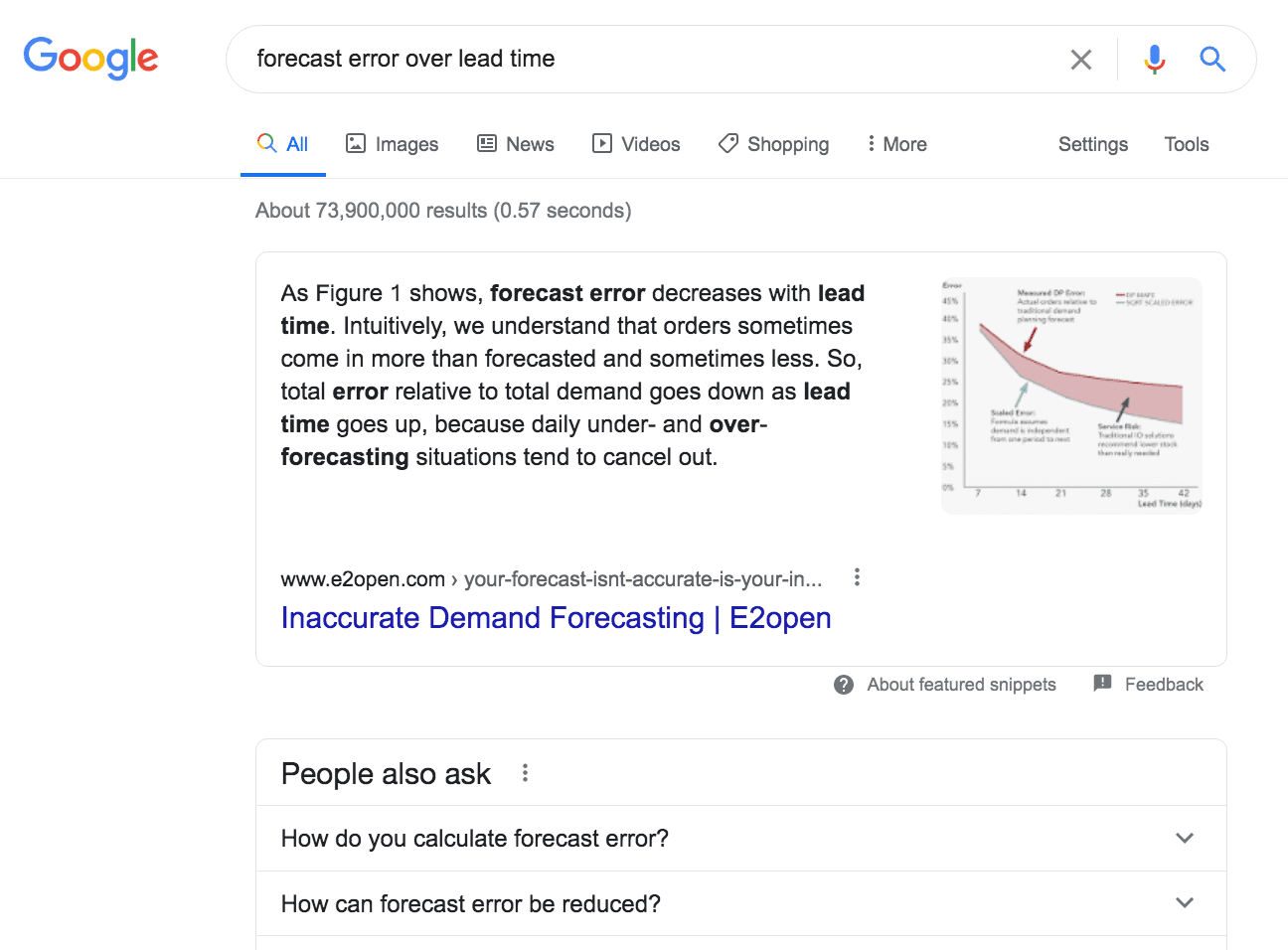

When one does a search in Google for “forecast error over lead time,” it is curious how few results come back related to the question. This topic is off the radar of most supply chain planning forecasting departments. However, it is important as the safety stock driven from the forecast (hypothetically, as dynamic safety stock is not very widely implemented) is supposed to be for the lead time of that product location combination.

Let us move to another area of forecast accuracy measurement.

Moving Past the Easy Part of Forecast Error Measurement

- Defining forecast accuracy is the easy part.

- The difficult part is getting into details of forecast accuracy.

The Importance of Listing the Multiple Dimensions of Forecast Accuracy or Forecast Error Measurement

Forecast accuracy has many dimensions.

Any forecast error or accuracy must have a complete listing of its dimensions, or else the forecast error or accuracy does not have any meaning. It cannot be compared to other forecast errors or accuracy calculations. It does not mean anything to declare a forecast accuracy of 75%, without listing the dimensions of the forecast accuracy measurement that were used. This is a topic we cover in the article How is Forecast Error Measured in All of the Dimensions in Reality?
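One way to keep the dimensions attached to the number is to store them together. The sketch below is illustrative only: the `AccuracyMeasurement` class and its field names are hypothetical, and the fields cover only the dimensions mentioned in this article (error method, bucket, weighting, which forecast was used, and which actuals were used), not a complete list.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AccuracyMeasurement:
    value: float            # the reported accuracy, e.g. 0.75
    error_method: str       # e.g. "MAPE", "MAD", "RMSE"
    bucket: str             # e.g. "weekly", "monthly", "quarterly"
    weighting: str          # e.g. "unweighted", "weighted by volume"
    forecast_snapshot: str  # which version of the forecast was measured
    actuals_basis: str      # which definition of the actuals was used

    def describe(self):
        """Render the value together with every dimension it depends on."""
        dims = asdict(self)
        value = dims.pop("value")
        details = ", ".join(f"{key}={val}" for key, val in dims.items())
        return f"{value:.0%} accuracy ({details})"


measurement = AccuracyMeasurement(
    value=0.75,
    error_method="MAPE",
    bucket="monthly",
    weighting="unweighted",
    forecast_snapshot="frozen before lead time",
    actuals_basis="constrained (units sold)",
)
print(measurement.describe())
```

Two measurements are comparable only when every field other than `value` matches.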

Why the Complexity in Forecast Accuracy?

The reason for the complexity is that multiple dimensions of forecast accuracy must be defined before a reported forecast accuracy can be understood. When companies discuss forecast accuracy, there is a strong tendency to select a forecast error measurement and then move on to observing the measurement output. However, the dimensions will, in most cases, be vastly underexplored.

Even beyond the topics raised in the article just referenced, there are also important distinctions to be understood regarding what “is” the forecast and what “is” the actual.

Let us address the definition of both of these values used to calculate forecast error or accuracy.

Distinction #1: Defining the Forecast

Was this the forecast before lead time, or were changes made within lead time doing something like demand sensing?

For a forecast accuracy measurement to be useful, it must not be altered after the time to respond to the forecast has passed. Demand sensing alters the forecast within lead time, which is a type of forecast accuracy cheating. I cover this in detail in the article How to Best Understand Demand Sensing and Demand Shaping.
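A sketch of what "not altered after the time to respond has passed" could look like in practice. It assumes that every forecast revision is stored with the period in which it was created; the `forecast_to_measure` function, the data layout, and the numbers are all hypothetical. Only revisions made at least one lead time before the target period are eligible for measurement.

```python
def forecast_to_measure(versions, target_period, lead_time):
    """Return the latest forecast for `target_period` that was created no later
    than `target_period - lead_time`. Later revisions are ignored."""
    cutoff = target_period - lead_time
    eligible = {
        created: quantity
        for (created, target), quantity in versions.items()
        if target == target_period and created <= cutoff
    }
    if not eligible:
        return None
    return eligible[max(eligible)]


# Keys are (period created, period forecasted); values are forecast quantities.
versions = {
    (1, 6): 100,
    (3, 6): 110,
    (5, 6): 140,  # revised inside the lead time, e.g. by demand sensing
}
actual = 145

frozen = forecast_to_measure(versions, target_period=6, lead_time=2)
latest = versions[(5, 6)]

frozen_error = abs(frozen - actual) / actual
latest_error = abs(latest - actual) / actual

print(f"error of forecast frozen at lead time: {frozen_error:.1%}")
print(f"error of forecast revised in lead time: {latest_error:.1%}")
```

The revised forecast looks far more accurate, but the supply chain could not have responded to it, so the frozen forecast is the one that reflects the error the business actually experienced.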

The other part of the forecast accuracy measurement is the actuals. However, here also, we run into issues with obtaining an accurate set of values.

Distinction #2: Defining the Actuals

For the actuals, it is normally one of two values. One is the number that was sold. This is called actual demand. The second is the number that could have been sold if capacity had been available. This is called authentic demand. However, authentic demand is not what companies have in their databases. Instead, they normally have a record of actual demand, which is constrained demand. Authentic demand is what was demanded, which is not the same as what was sold. If a company stocks out of an item, the unmet demand is not recorded as a sales order and is therefore lost to the demand history. When the statistical forecast is generated, it uses the constrained demand history, not the authentic demand history. We cover this topic in the article How to Best Understand Measuring the Unconstrained Forecast.
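The difference between the two definitions of actuals can be illustrated with invented numbers. Note the artificial assumption: authentic demand is given here, whereas in practice it usually is not recorded, which is exactly the problem described above. Recorded sales are capped by the stock that was available in each period.

```python
# Invented per-period values for one product.
authentic_demand = [50, 60, 55, 70]   # what customers wanted (rarely recorded)
available_stock = [80, 40, 55, 30]    # what could be shipped in each period
forecast = [55, 55, 55, 55]

# Sales cannot exceed available stock, so the recorded history is constrained.
recorded_sales = [min(d, s) for d, s in zip(authentic_demand, available_stock)]
lost_demand = [d - sold for d, sold in zip(authentic_demand, recorded_sales)]


def weighted_ape(forecasts, actuals):
    """Total absolute error divided by total actuals."""
    return sum(abs(f - a) for f, a in zip(forecasts, actuals)) / sum(actuals)


error_vs_recorded = weighted_ape(forecast, recorded_sales)
error_vs_authentic = weighted_ape(forecast, authentic_demand)

print("recorded sales:", recorded_sales)
print("lost demand:   ", lost_demand)
print(f"error against recorded sales:   {error_vs_recorded:.1%}")
print(f"error against authentic demand: {error_vs_authentic:.1%}")
```

The same forecast receives two different errors depending on which actuals it is compared against, so the definition of the actuals is one more dimension that must be declared.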

Conclusion

Forecast accuracy has a simple high-level definition. But this is deceptive.

The more one dives into the specifics of forecast accuracy, the more explanation is required for what is actually being measured. The vast majority of those who have some connection to forecasting completely underestimate what is involved in producing a correct forecast accuracy measurement.

All companies should have their forecast accuracy assumptions and settings documented so that those who work with forecast accuracy know what is being measured. It is truly amazing how rare it is to find companies that do this. When I ask executives, as well as people who work in or adjacent to the forecasting department, they most often do not know the dimensions of the forecast accuracy measurement. Yet these dimensions are what give the numbers that come out of the forecast error measurement their meaning.