When Will People Notice the Gap Between the Promise and Reality of AI?

Executive Summary

- We are in the throes of an enormous amount of exaggeration regarding AI.

- A look at historical and current AI promises shows that the gap between promise and reality is massive.

Introduction

Under the constant barrage of hype around AI, it is curious how little attention is paid to AI's consistent failure to deliver on its promises, or to how AI is currently failing to meet the hype.

Our References for This Article

If you want to see our references for this article and other related Brightwork articles, see this link.

The Constant Issue with AI

Anytime AI fails, a host of issues ends up being held to account. One is a lack of data, which we covered in the article How Many AI Projects Will Fail Due to a Lack of Data?

In other cases, a lack of skills is blamed, which continues to the present day, even though an enormous number of people are training or have trained to be data scientists. Universities have added large numbers of degree programs in data science. There is an unending number of books and training material on data science. Most of the foundations of data science are not even that new (for instance, the algorithms are mostly quite old, while a specific technology may be original).

But still, we are told that we need enormous numbers of people to be trained to work in AI. Vishal Sikka, who has an AI startup, claims that AI must begin to incorporate everyone in a company, as he states in the following quotation.

We can bring AI to everybody, we can build AI systems that are transparent, easy to explore, easy to understand, and explainable in the sense that the developer understands. We can dramatically simplify the footprint that these AI systems carry, and we can bring problem-finding into the mix, by bringing business people, the domain experts and IT people into the construction and evolution of intelligent systems, not only the data scientists and the AI engineers.

The Lack of Sufficient Context

A significant problem with AI is that it is said to lack context. This means that not enough dimensions of information have been added to the AI model.

Higher-level animal brains build up an understanding over decades, and the brain is designed to be very efficient because it relies upon heuristics and does not calculate every possibility. This is easily observed by merely asking Alexa a non-standard question, and finding it does not know how to react.

As a person who has run ML algorithms for forecasting, I see many technologies being overstated by calling them “AI.” In reality, they only work in an automated way for a short period until human intervention is required.

2001: A Space Odyssey was made back in 1968 and projected capabilities out to 2001, or 19 years ago. The AI demonstrated by HAL is referred to as either “strong AI” or generalized intelligence or pervasive artificial intelligence. By 1981, researchers had moved away from developing generalized intelligence AI, as it proved too challenging, and they were unable to show progress.

More than fifty years later, there is still nothing even like this. Related to this application, we are now at the point where we can skip typing questions into Google and instead ask them of Alexa or Google Home. We might recommend renaming AI to something more modest and closer to what “AI” can do in the foreseeable future. The term machine learning or ML is also questionable: techniques that are categorized as ML merely output metrics that a human interprets. So it seems that it might be called “computer-enabled human learning.” But then it is not sufficiently differentiated from any other use of the computer.

Alexa could not deny us entry through the bay doors even if she wanted to.

As this video explains, while 2001: A Space Odyssey is science fiction, director Stanley Kubrick used several AI advisors like Marvin Minsky to inform the script, and like his movie Dr. Strangelove, 2001 was based upon the thinking current at the time, in 1968. HAL, the AI, is essentially a fully autonomous intelligence that is shown as a “person in a box.”

However, despite repeatedly failing to meet its projections, in 2020, AI is considered one of the hottest fields, and its previous failures have been nearly erased from the collective consciousness.

AI and Flim Flam Men

Unethical business people are using AI as a mechanism to gain wealth. The AI field is filled with people lying about AI’s potential because venture capitalists begin writing checks when they hear the term “AI.”

Vishal Sikka raised $50 million, talking up the potential of AI. Vishal very clearly exaggerated both AI’s potential and his company’s ability to contribute to AI. And there is a lot of this going around. If one reads VentureBeat, as we cover in the article Is VentureBeat Independent Journalism?, it is a seemingly unending list of companies raising money based on this or that AI breakthrough.

Promoters have figured out that people are susceptible to exaggerated stories around AI. Notice that this video claims growth in AI capability was previously promised back in the 1960s but never occurred. This is a common feature of current AI forecasts; they entirely ignore and erase previous forecasts’ inaccuracies. If individuals can’t even (or more likely have lacked the interest to) measure forecast error, it seems that they aren’t in a good position to opine on the future of AI.

Like many AI technologies, self-driving cars have been postponed. Another question is why there is a shortage of drivers.

This is increasingly the reality of driving. Isn’t it great that someday these cars may be self-driving?

While funding is being directed to self-driving cars, traffic in the US and in countries around the world has massively increased in just the past several decades. The biggest problem getting around the US is not that you have to drive yourself, but that all large and midsize cities, and many smaller ones, have steadily worsening traffic conditions, and there is no solution in sight.

The emphasis on self-driving cars is distracting from real issues, which are congestion and an already unsustainable carbon footprint from internal combustion engines. The most that self-driving promises is a marginal increase in fuel efficiency; it does not address the primary issue.

Measuring The Real Life Impact of AI?

One measurement of the lack of progress on AI is merely observing how little it has changed the lives of the population and comparing it to inventions that have changed the lives of the population.

Advances like microprocessors or smartphones are readily apparent.

Virtually every person in the developed world owns multiple microprocessors (incorporated into various devices). Smartphones (which contain one or two microprocessors) are visible throughout society and have massively increased the accessibility of information. There is no debating the effect of these inventions on the world.

However, what is the contribution of AI to our daily lives? Voice recognition is one. This is explained in the following quotation as an example of narrow AI.

Narrow AI is something most of us interact with on a daily basis. Think of Google Assistant, Google Translate, Siri, Cortana, or Alexa. They are all machine intelligence that use Natural Language Processing (NLP). – Interesting Engineering

It is nice not to have to type a question using a keyboard, but this is such a minor benefit, the author barely uses the functionality on his phone.

Supervised Versus Unsupervised Learning

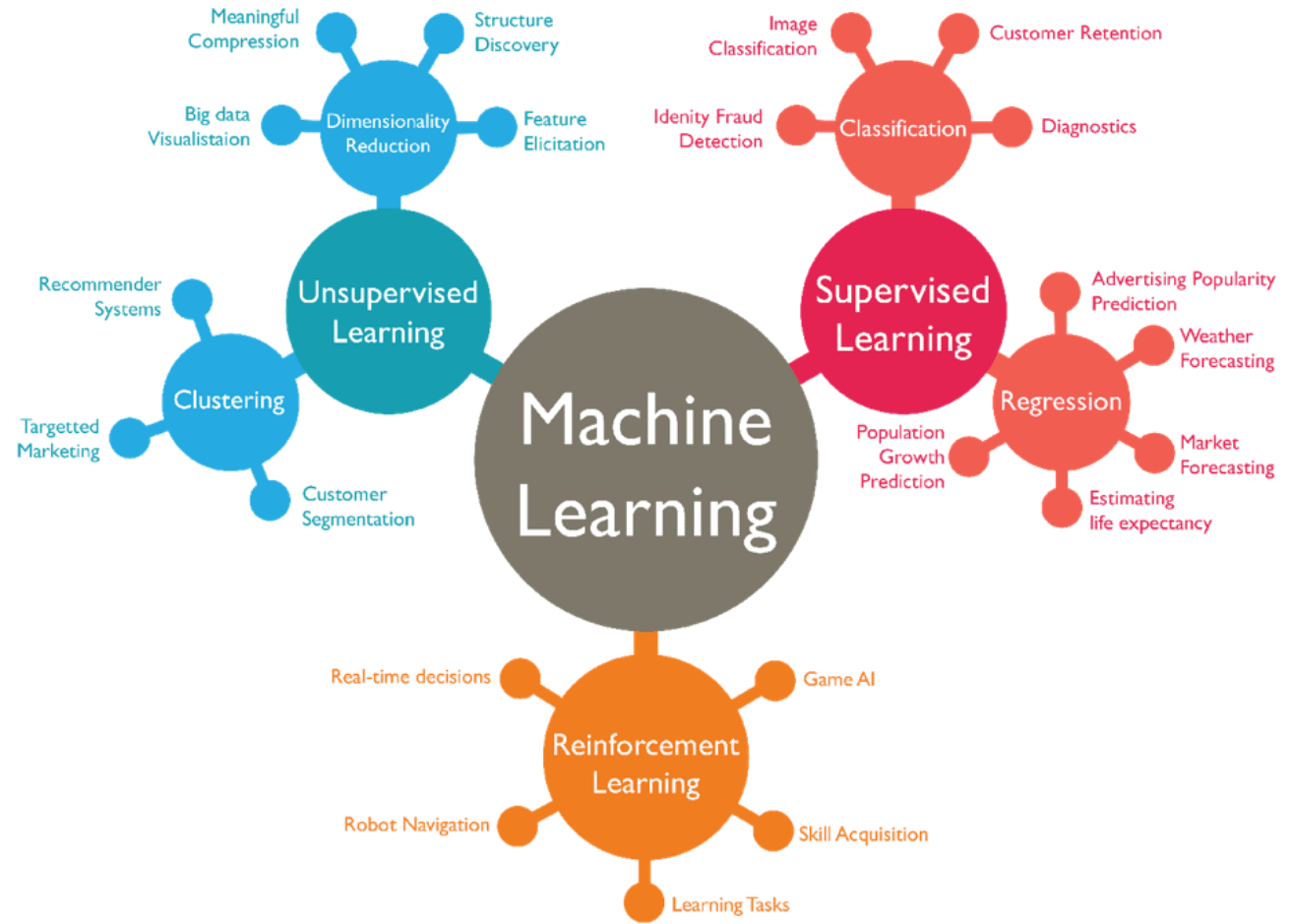

AI’s benefits to forecasting tend to be isolated and specific rather than generalized, and they are somewhat dubious. For one, the category of AI used for forecasting is called machine learning, and these are just short algorithms that can run on multivariate data. This is the category of AI called “weak AI,” which means that there is no thinking going on; it is just the application of a preconfigured routine.

The vast majority of forecasting is under the supervised learning category of machine learning. It is technically all under AI, but calling supervised learning “AI” is quite a reach.

Unsupervised learning is much closer to the description of AI and has proven to have far fewer applications. And the improvements have been occurring in supervised rather than unsupervised learning.

This is covered in the following quotation.

I gotta say, it wins the Awesomest Technology Ever award, forging advancements that make ya go, “Hooha!”. However, these advancements are almost entirely limited to supervised machine learning (emphasis added), which can only tackle problems for which there exist many labeled or historical examples in the data from which the computer can learn.

This inherently limits machine learning to only a very particular subset of what humans can do – plus also a limited range of things humans can’t do. – Eric Siegel

But here again, there is a definite limitation.

So our hopes and dreams of talking computers are dashed because, unfortunately, there’s no labeled data for “talking like a person.” You can get the right data for a very restricted, specific task, like handling TV quiz show questions, or answering the limited range of questions people might expect Siri to be able to answer. But the general notion of “talking like a human” is not a well-defined problem. Computers can only solve problems that are precisely defined. – Eric Siegel
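The distinction this section draws between supervised and unsupervised learning can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the toy data, the function names `predict_1nn` and `two_means`, and the choice of a nearest-neighbor rule and a two-group k-means. It is not drawn from any system discussed in this article. The point is only that the supervised routine needs labeled history to work from, while the unsupervised routine receives bare numbers and can do no more than group them.

```python
# Hypothetical toy data: each value comes with a human-supplied label.
labeled = [(1.0, "low"), (2.0, "low"), (8.0, "high"), (9.0, "high")]


def predict_1nn(history, x):
    """Supervised: return the label of the closest labeled example."""
    nearest = min(history, key=lambda pair: abs(pair[0] - x))
    return nearest[1]


def two_means(values, iterations=10):
    """Unsupervised: split unlabeled 1-D values into two groups (k-means, k=2)."""
    lo, hi = min(values), max(values)
    group_lo, group_hi = list(values), []
    for _ in range(iterations):
        # Assign each value to whichever center it is closer to.
        group_lo = [v for v in values if abs(v - lo) <= abs(v - hi)]
        group_hi = [v for v in values if abs(v - lo) > abs(v - hi)]
        if not group_lo or not group_hi:
            break  # all values fell into one group; nothing left to refine
        # Move each center to the mean of its group.
        lo = sum(group_lo) / len(group_lo)
        hi = sum(group_hi) / len(group_hi)
    return group_lo, group_hi


if __name__ == "__main__":
    print(predict_1nn(labeled, 7.5))
    print(two_means([v for v, _ in labeled]))
```

Note that `two_means` returns two groups of numbers but has no way of naming them "low" or "high"; that interpretation still has to come from a person.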

Good Old Fashioned Regression is Now ML

Any person who has worked in analysis and statistics has used regression analysis.

However, now it is referred to as a type of machine learning. If regression is machine learning, then if I create a custom forecasting algorithm and apply it to data sets and match the algorithm to the data sets for which it works, is that also machine learning?

If so, how? The machine does not at all appear to be learning. Jay Tuck’s definition of AI is that it is software that writes itself. This seems bizarre until one realizes that DNA evolved to do just this.

However, from a career perspective, it would be much better if I categorized the work as AI rather than saying I used my own pattern recognition to create the model. We are moving to a situation where we elevate things that are machine-driven versus human intelligence-driven.
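To make the regression point concrete, here is a minimal sketch of ordinary least squares on one variable, written in plain Python. The function name `fit_line` and the sales figures are hypothetical and exist only for illustration. The entire "learning" step is two closed-form formulas that have been in statistics textbooks for a very long time.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x (closed form)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared deviations of x, and of co-deviations of x and y.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical history: sales in periods 1 through 4.
periods = [1, 2, 3, 4]
sales = [12.0, 14.0, 16.0, 18.0]

slope, intercept = fit_line(periods, sales)
forecast_period_5 = intercept + slope * 5

if __name__ == "__main__":
    print(slope, intercept, forecast_period_5)
```

Whether this is called regression, supervised learning, or AI, the computation is identical; only the label changes.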

Dancing Robots

Do you find that dancing robots enhance your life? Well, NAO, the dancing robot, was considered one of the breakthroughs for AI. This robot appears to perform preprogrammed dances in response to the different songs that it hears.

A Loss of Privacy in Asia…and Soon in the West

This type of surveillance used to occur only in casinos. However, it now allows for a surveillance state. It solves some crimes. However, its potential for abuse is so extensive that it is not a matter of if, but when it will be abused. China has already abused it.

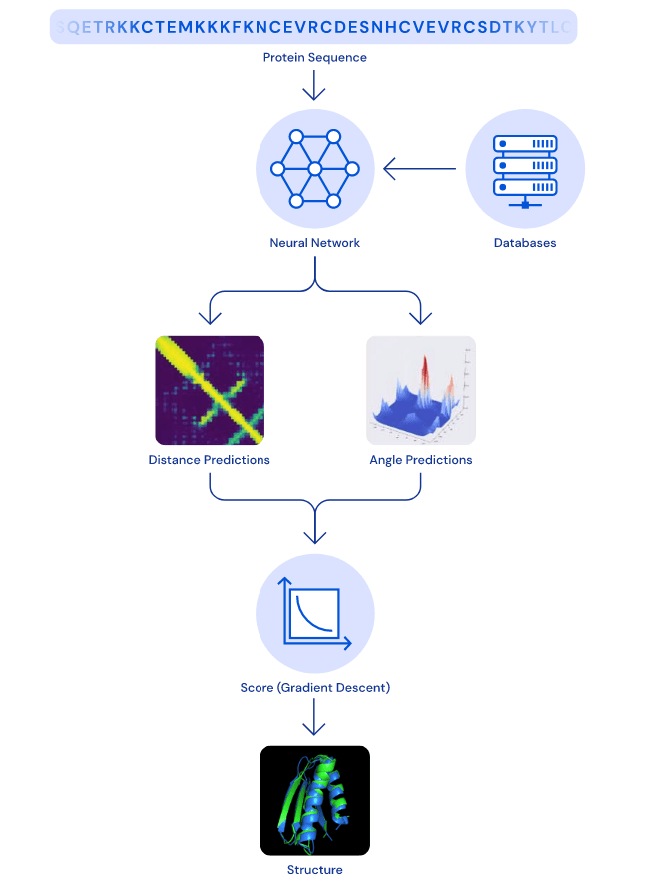

Neural Network for Modeling Protein Folding

DeepMind has claimed to have developed a neural network that models the highly complicated process of how some proteins fold, as explained by the following quotation.

Scientists have long been interested in determining the structures of proteins because a protein’s form is thought to dictate its function. Once a protein’s shape is understood, its role within the cell can be guessed at, and scientists can develop drugs that work with the protein’s unique shape.

We trained a neural network to predict a distribution of distances between every pair of residues in a protein (visualised in Figure 2). These probabilities were then combined into a score that estimates how accurate a proposed protein structure is. We also trained a separate neural network that uses all distances in aggregate to estimate how close the proposed structure is to the right answer.

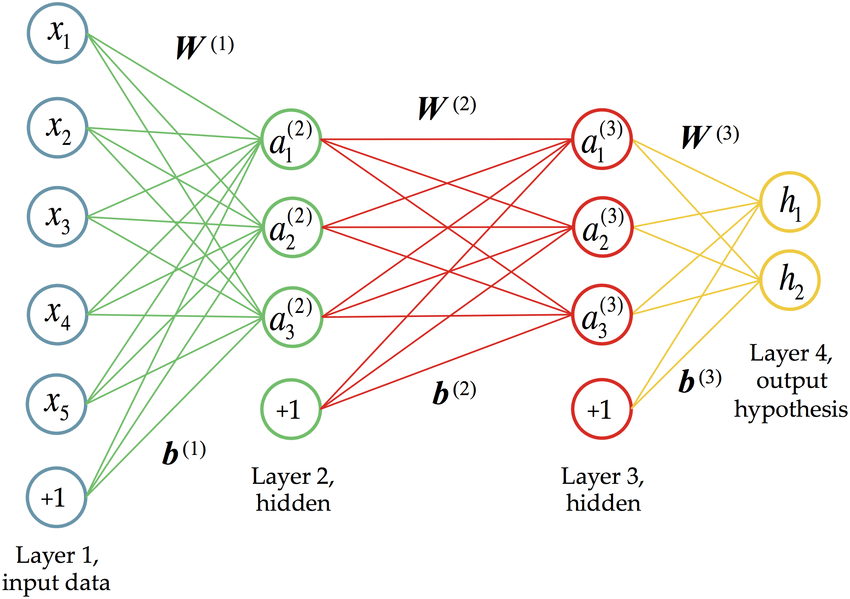

A neural network is just a set of weighted mathematical functions, fit to data, that performs pattern recognition.

Naturally, one would try to apply mathematics to model various phenomena. However, neural networks, which are one approach to performing modeling, are somehow attributed with extra cachet because they are categorized as AI.
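The scoring step described in the DeepMind quotation above, where predicted distance probabilities are "combined into a score," can be sketched as ordinary arithmetic. This is not DeepMind's implementation. The bin edges, the three-residue toy protein, the probabilities, and the use of a summed log-probability as the score are all assumptions chosen for illustration, and the names `bin_index` and `structure_score` are hypothetical.

```python
import math

# Hypothetical distance bins in angstroms: [0,4), [4,8), [8,12), [12, inf).
BIN_EDGES = [4.0, 8.0, 12.0]


def bin_index(distance):
    """Return the index of the bin that a distance falls into."""
    for i, edge in enumerate(BIN_EDGES):
        if distance < edge:
            return i
    return len(BIN_EDGES)


def structure_score(predicted, proposed):
    """Sum the log-probabilities the model assigns to the proposed distances.

    A higher (less negative) score means the proposed structure agrees
    more closely with the predicted distance distributions.
    """
    score = 0.0
    for pair, distance in proposed.items():
        probability = predicted[pair][bin_index(distance)]
        score += math.log(max(probability, 1e-9))  # guard against log(0)
    return score


# Made-up predicted distributions for each residue pair (one value per bin).
predicted = {
    (0, 1): [0.70, 0.20, 0.05, 0.05],
    (0, 2): [0.10, 0.60, 0.20, 0.10],
    (1, 2): [0.60, 0.30, 0.05, 0.05],
}

# Two made-up candidate structures, given as pairwise distances.
plausible = {(0, 1): 3.8, (0, 2): 6.1, (1, 2): 3.9}
implausible = {(0, 1): 13.0, (0, 2): 2.0, (1, 2): 10.0}

if __name__ == "__main__":
    print(structure_score(predicted, plausible))
    print(structure_score(predicted, implausible))
```

Under these assumptions the candidate whose distances fall in the high-probability bins scores higher, which is the sense in which the score "estimates how accurate a proposed protein structure is." The hard part, producing good distributions in the first place, is what the trained network does; the scoring itself is simple bookkeeping.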

AI for Automated Game Playing?

AI has mastered the game show Jeopardy and chess playing. There is now no human who can match the performance of a computer in Jeopardy and chess — although these are not particularly high value-added things in society.

Other Applications of AI

Navigation systems, such as Google Maps, are not AI. There are claimed benefits of AI for things like cancer identification, but these tend to be controlled studies produced by financially biased entities. And while it is difficult to find actual hard examples of the impact of AI on our lives, the AI industry continues to receive entirely outsized funding versus its contribution to making things more efficient or better.

AI to the Rescue for Everything?

There is a mindless application of AI to seemingly every issue, even issues that can’t be addressed through AI. Humans need to reduce their global population quickly. They need to reduce their carbon footprint, how much garbage they generate, and a host of other issues. These are things that require real sacrifices, but instead of facing stark trade-offs, profit-oriented, marketing-infused entities are pitching AI “solutions” that do not require any sacrifices. Most peculiarly, AI is being used to disarm our actual human intelligence by saying…

“It’s ok…….because AI will take care of it.”

Conclusion

The shortcomings or “reality gap” of AI have received little coverage from media entities and from profit-oriented companies trying to raise money using AI promises. It has similarly had little impact on many executives and decision-makers, as explained by the following quotation.

According to a Gartner Survey of over 3,000 CIOs, Artificial intelligence (AI) was by far the most mentioned technology and takes the spot as the top game-changer technology away from data and analytics, which is now occupying a second place.

AI is set to become the core of everything humans are going to be interacting with in the forthcoming years and beyond. – Interesting Engineering

I have worked with and met CIOs.

There is no way that CIOs are the correct group to ask if they think that AI will be a “game changer” for IT because most CIOs don’t have enough background in either math or AI to know how to interpret or fact check the topic. This survey was conducted by Gartner, an entity that makes its money catering to people like CIOs and presupposing that all knowledge is contained within the executive ranks of companies. This is an example of how laymen can be influenced by the marketing hype of vendors and consulting firms. Secondly, when Gartner’s polling of executives is mentioned, what is never, literally never mentioned, is that many AI vendors pay Gartner to promote the AI vendor market.

Curious about which software vendors paid Gartner to be included in their Magic Quadrant for AI? It is easy to find out. Most of the companies included in this AI MQ paid Gartner. What incentive does having so many companies pay Gartner create for Gartner to be objective on this or any other technological/software category?

What CIOs would have a much harder time doing is identifying the actual applications of AI. And that is the problem with AI.