Why Did AI Rise for a Third Time After the 1st and 2nd AI Winters?

Executive Summary

- The fact that AI has risen to be so prominent is undeniable.

- Less obvious is what led to the third rise of AI.

Introduction

It has been proposed that the third and current AI bubble started in the early 2010s. Two factors seem to get the majority of the credit. The first is expressed in the following quotation.


“The ice of AI’s first winter only fully retreated at the beginning of this decade after a new generation of researchers started publishing papers about successful applications of a technique called “deep learning”.” – The Guardian

As we cover in the following chapter, Minsky and Papert shut down neural network research with their book Perceptrons.

“In 2013, John Markoff wrote a feature in the New York Times about deep learning and neural networks with the headline Brainlike Computers, Learning From Experience.

Not only did the title recall the media hype of 60 years earlier, so did some of the article’s assertions about what was being made possible by the new technology. “In coming years,” Markoff wrote, “the approach will make possible a new generation of artificial intelligence systems that will perform some function that humans do with ease: see, speak, listen, navigate, manipulate and control.” – The Guardian

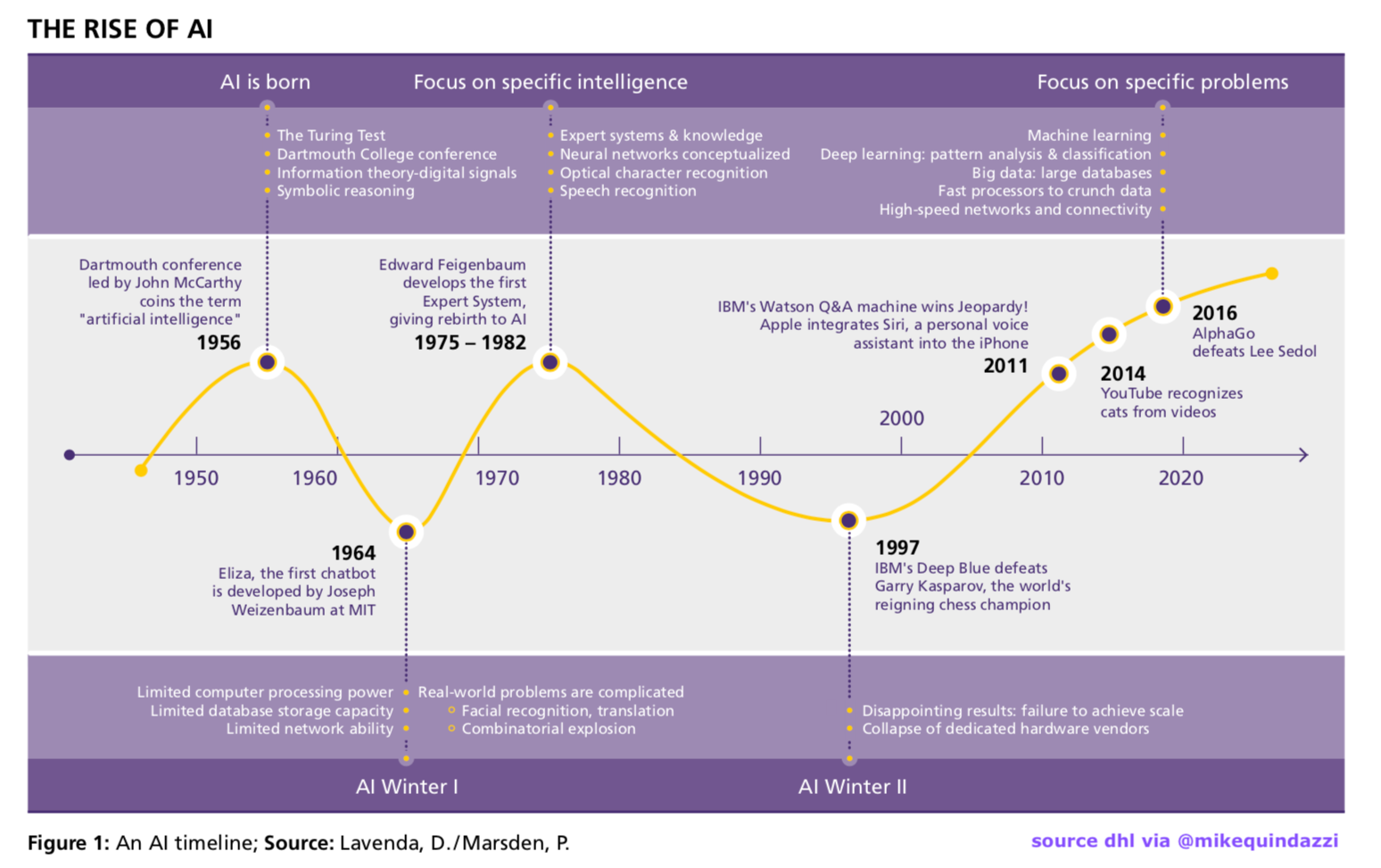

There is no perfect graphical representation of the history of AI. Every graphic will leave out some data points and include others that not everyone agrees deserve inclusion. The graphic above is educational, but it does not show how much larger the investment in the current AI bubble is than the investment in the first and second bubbles.

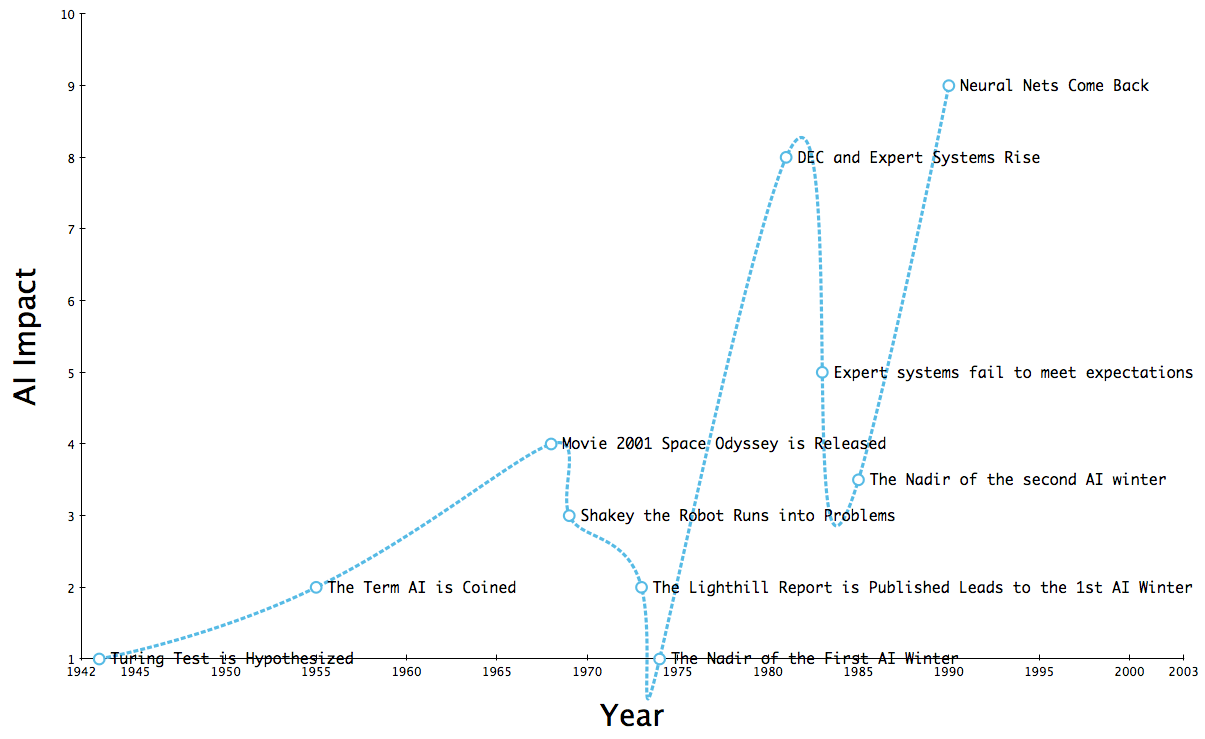

We created the following graphics to give a rough indication of how much larger the current AI bubble is than the previous bubbles.

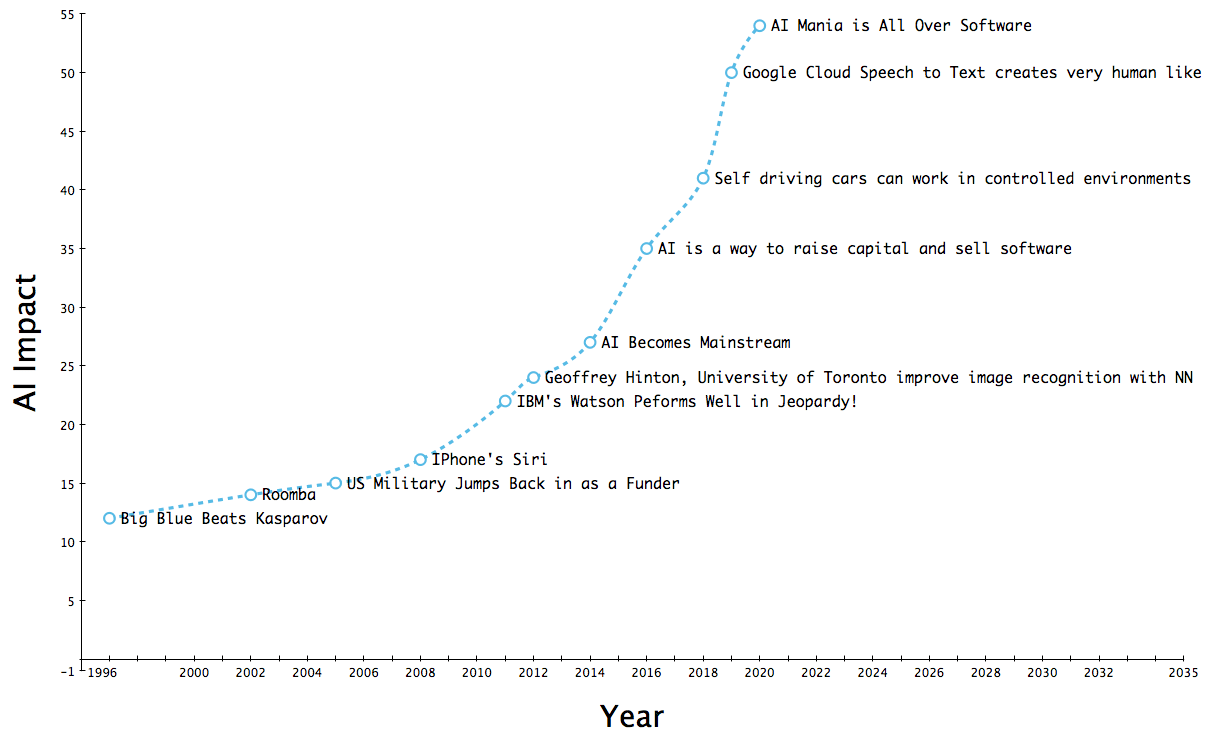

We could not fit the entire timeline into a single graphic that would display well on the site, so we split it into two graphics.

The second graphic is a continuation of the first. Notice that the vertical scale is different, showing the continual growth of AI since the late 1990s, and particularly after 2010.

One significant difference is that the first two AI bubbles were not only considerably smaller but also delivered very little in the way of usable applications.

The Repetition of AI Claims

What is curious is how the same claims keep being repeated, as explained in the following quotations from Sebastian Schuchmann at Towards Data Science.

Many public figures are voicing claims that are reminiscent of those of early AI researchers in the 1950s. By doing this, the former group creates excitement for future progress, or hype. Kurzweil, for instance, is famous not only for predicting that the singularity, a time when artificial superintelligence will be ubiquitous, will occur by 2045, but also that AI will exceed human intelligence by 2029. In a similar manner, Scott is predicting that “there is no reason and no way that a human mind can keep up with an artificial Intelligent machine by 2035.” Additionally, Ng views AI as the new electricity. Sentiment analysis in media articles shows that AI-related articles became 1.5 times more positive from 2016 to 2018. Especially in the period from January 2016 to July 2016, the sentiment shifted. This improvement could be correlated with the public release of AlphaGo in January 2016, and its victory against world champion Lee Sedol in March.

Further, a meta-study on AI predictions has found some evidence that most predictions of high-level machine intelligence are around 20 years in the future no matter when the prediction is made. In essence, this points to the unreliability of future predictions of AI. Moreover, every prediction of high-level machine intelligence has to be viewed with a grain of salt.

In this chapter, criticisms of deep learning are discussed. As demonstrated, deep learning is at the forefront of progress in the field of AI, which is the reason a skeptical attitude towards the potential of deep learning is also criticism of the prospects of AI in general. It is a similar situation to the 1980s, when expert systems dominated the field and their collapse led to a winter period. If deep learning methods face comparable technological obstacles as their historical counterpart, similar results can be expected.

In his book “Deep Learning with Python,” Chollet has a chapter dedicated to the limitations of deep learning, in which he writes: “It [deep learning] will not solve the more fundamental problem that deep learning models are very limited in what they can represent, and that most of the programs that one may wish to learn cannot be expressed as a continuous geometric morphing of a data manifold.”

As a thought experiment, he proposes a huge data set containing source code labeled with a description of the program. He argues that a deep learning system would never be able to learn to program in this way, even with unlimited data, because tasks like these require reasoning and there is no learnable mapping from description to source code. He further elaborates that adding more layers and data makes it seem like these limitations are vanishing, but only superficially.

He argues that practitioners can easily fall into the trap of believing that models understand the task they undertake. However, when the models are presented with data that differs from the data encountered during training, they can fail in unexpected ways. He argues that these models don’t have an embodied experience of reality, and so they can’t make sense of their input. This is similar to arguments made in the 1980s by Dreyfus, who argued for the need for embodiment in AI. Unfortunately, a clear understanding of the role of embodiment in AI has not yet been achieved. In a similar manner, this points to fundamental problems not yet solved with deep learning approaches, namely reasoning and common sense.

In short, Chollet warns deep learning practitioners about inflating the capabilities of deep learning, as fundamental problems remain.

Conclusion

The third wave of AI has been driven primarily by the promise of neural networks and deep learning. These areas of AI have been exaggerated in much the same way that AI was during the first two AI bubbles. One distinction is that neural networks have delivered narrow applications, whereas the first and second bubbles delivered very little in the way of practical applications.