How Can Forecast Error Be Reduced?

Executive Summary

- Most companies are dissatisfied with their forecast error but are not aware of how forecast error can be reduced.

- We cover some of the significant shortcomings and, therefore, areas of opportunity for forecast improvement.

Introduction

There is a lot of poor-quality advice on how to improve forecast accuracy. For example, software vendors tend to present a new forecasting application as the solution to improved forecast accuracy. Those who want to sell AI/machine learning say the answer is more machine learning. The unfortunate fact is that what is “hot” in forecasting, and what vendors and consulting firms push at a particular time, often has more to do with a strategy to sell software and services than with actually reducing forecast error. In this article, you will learn about some indisputable problems in forecasting departments where it makes sense to place improvement effort.

Top Issues At Companies that Forecast

There are major and undeniable issues that hold back forecast improvement and the reduction of forecast error. These are some of the top issues that we have seen at most, if not nearly all, of our forecasting clients.

Issue #1: Lack of Forecasting Bandwidth

Demand planning departments are typically understaffed relative to the number of forecasted items and the forecasting work that needs to be done. A company with billions in revenue might have no more than ten people in its forecasting unit (financial forecasting, marketing forecasting, and sales forecasting have their own people who are partially involved in forecasting). Typically, forecasting departments are staffed with a Director and a series of planners, each of whom is assigned to a grouping of products. However, that is the extent of the staffing. There is normally no one assigned to design the forecasting systems or process, or to perform testing.

When bandwidth is so limited, there is often not enough workforce to perform the testing required to improve forecast accuracy. When a software vendor or consultant comes into one of these companies, it is normally tough for the company to know if the vendor or consulting firm is telling the truth.

Issue #2: A Lack of Error Measurement of All the Forecasting Inputs

We cover in the article Forecast Error Myth #3: Sales And Marketing Have their Forecast Error Measured how it is infrequent for Sales and Marketing to have their forecast errors measured and, therefore, to be held accountable for their forecast inputs. In part, this is related to the first issue, which is a lack of bandwidth. However, the end result is that without measurement, Sales and Marketing continually reduce forecast accuracy and do not face the consequences. Neither department has a genuine interest in forecast accuracy, and in most cases, both provide deliberately inaccurate inputs.

Issue #3: Overemphasis on Complexity

Companies that try to improve their forecasting will often get taken advantage of by either a vendor or a consulting company. What typically happens is that the consulting company or vendor makes overstated promises that lean on complexity. This is very much based upon what is called asymmetrical knowledge: taking advantage of the fact that you know something someone else does not know, and lying to them. This is why the technique is used so often with things that are complex. Forecasting has a number of items that are made more complicated than they need to be. We cover one example in the article How to Make Sense of the Natural Confusion with Alpha, Beta, and Gamma.

A Current Example of the Overemphasis on Complexity in Forecasting

Currently, the most significant exaggerated claims in forecasting are around AI/machine learning, where the claims have grown so large that they have become farcical.

As we cover in the article The AI, Big Data and Data Science Bubble and the Madness of Crowds, we have been tracking some of these claims.

- Much of what companies like IBM or CapGemini are promising related to AI is not new.

- Many of these companies have little in the way of AI/machine learning success themselves. IBM famously failed with Watson, as we cover in the article How IBM is Distracting from the Watson Failure to Sell More AI.

Most of these claims will not come true, and clients who believed them will be forced to cover up their flawed investments, as we cover in the article The Next Big Thing in AI is to Excuse AI Failures.

When so much has been invested, with so little output, the results must be hidden.

Issue #4: An Inability to Address Forecast Bias

Companies will focus their efforts on a wide variety of forecast improvement options while leaving the topic of forecast bias on the sideline.

Bias is an uncomfortable area of discussion because it describes how people who produce forecasts can be irrational and have subconscious biases. It also relates to how people consciously bias their forecasts in response to incentives.

This discomfort is evident in many forecasting books that limit the discussion of bias to its purely technical measurement. No one likes to be accused of having a bias, which leads to bias being underemphasized. However uncomfortable it may be, bias is one of the most critical areas to focus on to improve forecast accuracy. Yet, it is the last area that most companies look to address.
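The purely technical measurement of bias is simple, which is part of why limiting the discussion to it misses the point. As a minimal sketch, assuming bias is expressed as total forecast minus total actual as a percentage of total actual (one common convention, not necessarily the one any given book or company uses), it could look like this in Python. The function name and the sample numbers are hypothetical.

```python
def forecast_bias_pct(forecasts, actuals):
    """Signed bias in percent: positive means over-forecasting, negative under-forecasting.

    Assumed convention: (sum of forecasts - sum of actuals) / sum of actuals.
    """
    if len(forecasts) != len(actuals):
        raise ValueError("forecasts and actuals must have the same length")
    total_actual = sum(actuals)
    if total_actual == 0:
        raise ValueError("total actual demand is zero; percentage bias is undefined")
    return 100.0 * (sum(forecasts) - total_actual) / total_actual


# Hypothetical six periods from a planner who forecasts high in every period.
planner_forecast = [120, 110, 130, 125, 115, 140]
actual_demand = [100, 105, 110, 100, 95, 120]

bias = forecast_bias_pct(planner_forecast, actual_demand)
print(f"Bias: {bias:+.1f}%")
```

A persistent sign across periods, rather than the size of any single error, is what suggests an incentive or habit behind the forecast and not random noise.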

Issue #5: Not Recognizing the Poor Quality of the Demand History of the Product Location Database

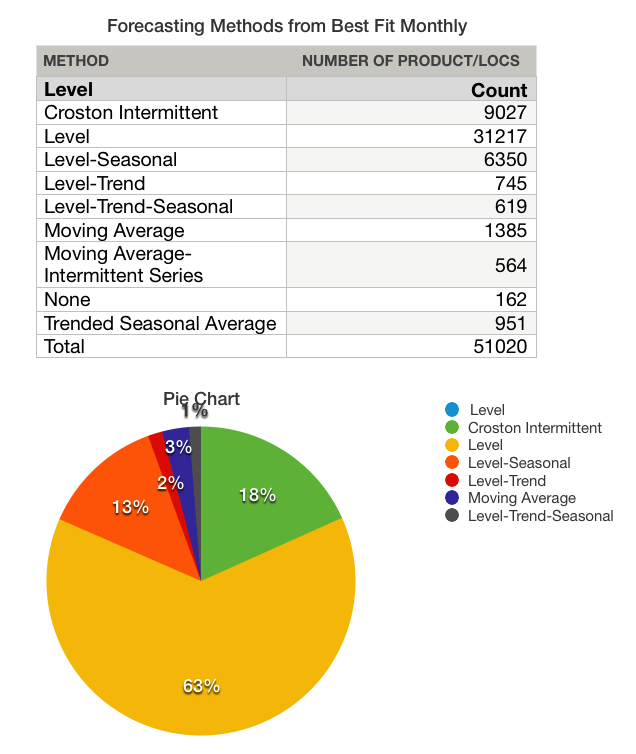

Companies often think of using techniques for improving forecast accuracy without considering the quality of their demand history. Here is an example of an anonymized result for a client where we performed the Brightwork Forecast Model Assignment and Profiling Analysis.

This company wanted to move to “advanced forecasting methods” and funded a project to do just this. However, notice the results of the forecast model assignment below.

Notice the distribution of the forecast methods over the data set. This was performed with a forecasting system with many complex methods, but the forecasting system applied simple methods in most cases. (*Croston's intermittent method is a complex method, but when supply planning is accounted for, it is often the case that Croston's adds little value over a level forecast.)

- This company had a minimal ability to improve forecast accuracy because its demand history was so intermittent.

- The reason for this outcome is that more complex forecasting methods require a strong pattern. However, these strong patterns are increasingly rare in modern product datasets.

- If you run a best fit, and most of the assignments end up being an intermittent forecasting method or a level, you have a low-quality demand history.

Yet even after I presented this analysis, the client was still interested in maybe doing some “machine learning.” That is, they wanted to focus on the area that offered them the least opportunity rather than address the fundamental issue. Furthermore, this company did not have other data that could be used for a machine learning analysis. Machine learning is a multivariable analysis, so it needs more than the demand history alone.

Issue #6: An Inability to Effectively Measure Forecast Accuracy

Companies are nearly universally held back by how they measure forecast error. In most cases, the forecast error measurement is entirely disconnected from improvements in forecast accuracy. You can’t know what is working if you cannot effectively measure forecast accuracy. Things that are often missing include:

- A weighted forecast error that is reported for the overall product location database.

- A documented approach to the dimensions and assumptions of the forecast error calculation.

- A determination of forecast value add, made by comparing the forecast error of a naive forecast against the forecast error of the company’s statistical forecast and of the current forecast process (the final forecast).
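To make the first and third items concrete, here is a minimal sketch in Python. It assumes a volume-weighted error (total absolute error divided by total actual demand, one of several possible weightings) and treats forecast value add as the difference in that error between two forecasts. The product location names and all numbers are made up for illustration.

```python
def weighted_error_pct(forecasts, actuals):
    """Volume-weighted error: total absolute error as a percentage of total actual demand."""
    total_actual = sum(actuals)
    if total_actual == 0:
        raise ValueError("total actual demand is zero; weighted error is undefined")
    total_abs_error = sum(abs(f - a) for f, a in zip(forecasts, actuals))
    return 100.0 * total_abs_error / total_actual


# Hypothetical data for one period: (product location, actual, naive, statistical, final).
rows = [
    ("P1-DC1", 100, 90, 95, 120),
    ("P2-DC1", 20, 25, 22, 15),
    ("P3-DC2", 500, 450, 480, 470),
]
actuals = [r[1] for r in rows]

errors = {
    "naive": weighted_error_pct([r[2] for r in rows], actuals),
    "statistical": weighted_error_pct([r[3] for r in rows], actuals),
    "final": weighted_error_pct([r[4] for r in rows], actuals),
}

# Forecast value add in percentage points; positive means the later step reduced error.
fva_statistical_vs_naive = errors["naive"] - errors["statistical"]
fva_final_vs_statistical = errors["statistical"] - errors["final"]

for name, err in errors.items():
    print(f"{name:12s} weighted error: {err:.2f}%")
print(f"FVA statistical vs naive: {fva_statistical_vs_naive:+.2f} points")
print(f"FVA final vs statistical: {fva_final_vs_statistical:+.2f} points")
```

In this made-up data set, the statistical forecast beats the naive forecast, while the adjustments that produce the final forecast add error. That is exactly the kind of result a forecast value add comparison is meant to surface.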

What Is the Most Important Factor in Reducing Forecast Error?

In most cases, companies put creating a forecast first and treat forecast error measurement as secondary. We recommend the opposite. Fairly good forecasts can be created using elementary methods. For example,

- A two or three-period moving average.

- A level (a moving average over many periods).

- A trend, with a specific percentage increase per month or year.

- A seasonal, with a repeating factor.

All of these are easy to create in Excel or in R (which allows for automation).
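As a rough illustration of how little these elementary methods require, here is a minimal sketch in Python rather than Excel or R. The function names, the sample history, and the 2% growth rate are assumptions made for the example, and the seasonal method shown (a per-position factor applied to the overall level) is just one simple way to build a repeating factor.

```python
def moving_average(history, periods):
    """Forecast the next period as the mean of the last `periods` observations."""
    if periods <= 0 or periods > len(history):
        raise ValueError("periods must be between 1 and len(history)")
    return sum(history[-periods:]) / periods


def level(history):
    """A level forecast: a moving average over the whole history."""
    return moving_average(history, len(history))


def trend(history, pct_increase):
    """Grow the last observation by a fixed percentage."""
    return history[-1] * (1 + pct_increase / 100.0)


def seasonal(history, season_length):
    """Level multiplied by a repeating factor for the next period's position in the cycle."""
    if season_length <= 0 or season_length > len(history):
        raise ValueError("season_length must be between 1 and len(history)")
    overall = level(history)
    if overall == 0:
        raise ValueError("average demand is zero; seasonal factor is undefined")
    position = len(history) % season_length  # position of the next period in the cycle
    same_position = history[position::season_length]
    factor = (sum(same_position) / len(same_position)) / overall
    return overall * factor


# Hypothetical demand history: two cycles of four periods each.
history = [80, 100, 120, 100, 90, 110, 130, 110]

print("3-period moving average:", round(moving_average(history, 3), 2))
print("Level:", level(history))
print("Trend (+2%):", round(trend(history, 2), 2))
print("Seasonal (cycle of 4):", round(seasonal(history, 4), 2))
```

None of these needs more than a few lines, which supports treating the forecast itself as the easier half of the problem.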

And while they are not as sophisticated as ARIMA or other models, they usually work pretty well. The percentage of complex forecasting methods applied to companies’ product databases tends to be greatly exaggerated.

- The broader focus in our view should be on forecast error measurement.

- Yet, this is the weakest area of most forecasting departments. We think a significant reason for this is a misunderstanding (which is communicated by consulting firms, vendors, and nearly all the literature on forecasting) about using forecast error to drive forecast accuracy improvement.

Why Do the Standard Forecast Error Calculations Make Forecast Improvement So Complicated and Difficult?

It is important to understand forecast error, but the problem is that the standard forecast error calculation methods do not provide this understanding. In particular, they do not tell companies that forecast how to make improvements. If the standard forecast error calculations did, forecast improvement would be far more straightforward, and companies would have a far easier time performing forecast error measurement.

What the Forecast Error Calculation and System Should Be Able to Do

With a capable forecast error calculation and system, one would be able to, for example:

- Measure forecast error.

- Compare forecast error (for all the forecasts at the company).

- Sort the product location combinations based on which product locations lost or gained forecast accuracy relative to other forecasts.

- Measure any forecast against the baseline statistical forecast.

- Weight the forecast error (so progress for the overall product database can be tracked).
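As a sketch of what the comparing and sorting capabilities could look like in practice, the Python below measures each product location's absolute error for two forecasts, computes the gain or loss against the baseline statistical forecast, and sorts so the biggest losses come first. This is an illustration under assumed field names and made-up numbers, not a description of how any particular application does it.

```python
def compare_to_baseline(records, candidate="final", baseline="statistical"):
    """Return one row per product location, sorted from largest loss to largest gain.

    `gain` is baseline error minus candidate error, so a negative gain means
    the candidate forecast was less accurate than the baseline.
    """
    rows = []
    for r in records:
        baseline_error = abs(r[baseline] - r["actual"])
        candidate_error = abs(r[candidate] - r["actual"])
        rows.append({
            "product_location": r["product_location"],
            "baseline_error": baseline_error,
            "candidate_error": candidate_error,
            "gain": baseline_error - candidate_error,
        })
    return sorted(rows, key=lambda row: row["gain"])


# Hypothetical product locations with actual demand and two forecasts.
records = [
    {"product_location": "A-DC1", "actual": 100, "statistical": 95, "final": 120},
    {"product_location": "B-DC1", "actual": 20, "statistical": 22, "final": 15},
    {"product_location": "C-DC2", "actual": 500, "statistical": 480, "final": 495},
    {"product_location": "D-DC2", "actual": 60, "statistical": 50, "final": 58},
]

ranked = compare_to_baseline(records)
for row in ranked:
    print(f'{row["product_location"]}: baseline error {row["baseline_error"]}, '
          f'final error {row["candidate_error"]}, gain {row["gain"]:+d}')
```

Because the function takes the candidate and baseline names as parameters, the same comparison can be pointed at any pair of forecasts, such as a Sales input against the statistical forecast.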

Getting to a Better Forecast Error Measurement Capability

A primary reason these things cannot be accomplished with the standard forecast error measurements is that the measurements are unnecessarily complicated, and the forecasting applications that companies buy are focused on generating forecasts, not on measuring forecast error beyond one product location combination at a time. After observing ineffective and non-comparative forecast error measurements at so many companies, we developed, in part, a purpose-built forecast error application called the Brightwork Explorer to meet these requirements.

Few companies will ever use our Brightwork Explorer or have us use it for them. However, the lessons from the approach followed in requirements development for forecast error measurement are important for anyone who wants to improve forecast accuracy.