How Accurate Was IFS on the Potential of In Memory Computing?

Executive Summary

- Dan Matthews, the CTO of IFS, wrote an article about in-memory computing in enterprise software.

- We review how accurate he was in his article.

Introduction

Dan Matthews’s paper 3 Things Business Decision Makers Need to Know About In Memory Enterprise Software was published in May 2017.

Our References for This Article

If you want to see our references for this article and other related Brightwork articles, see this link.

Notice of Lack of Financial Bias: We have no financial ties to SAP or any other entity mentioned in this article.

The Quotations

Gartner Defines What is In Memory?

Gartner says that in order for a technology to be classified as in-memory, it requires “the database structure to be in-memory, specifically the main memory of the server.” This, according to Gartner, is in contrast with databases that would commonly rely on a disc-based Database Management System (DBMS) that feeds data in and out of a database stored on a disc or server, and may perhaps keep some data in cache to speed up performance. Gartner’s definition of an in-memory application requires an In-Memory DBMS, or IMDBMS.

We have previously critiqued Gartner for not understanding databases and for being paid by SAP to promote HANA, which we covered in the article How Gartner Got HANA So Wrong. We estimate Gartner is paid over $120 million per year to promote SAP products and move them up in the rankings. Gartner makes the rather absurd proposal that having a single employee who works as an “ombudsman” makes that $120 million per year irrelevant, as we covered in the article How to Best Understand Gartner’s Ombudsman.

Therefore, it is difficult for us to take what they say seriously on these topics. The statement…

“the database structure to be in-memory of the server”

…is a meaningless statement. It sounds like it means something, but it doesn’t. What is “the database structure”? Is that a table? What does Gartner mean here? They don’t know. Again, we have yet to see a single instance of Gartner displaying any knowledge of databases. We make this quite clear in our analysis of Gartner’s ODMS MQ, which is at Can Anyone Make Sense of the ODMS Magic Quadrant?

The following sentence…

“This, according to Gartner, is in contrast with databases that would commonly rely on a disc-based Database Management System (DBMS) that feeds data in and out of a database stored on a disc or server”

…is also meaningless.

This is because no database keeps all of its data in memory, not even SAP HANA, which SAP has stated stores the entire database in memory. All databases move data from storage into memory as needed, in something called memory optimization.
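To make the idea of moving data from storage into memory as needed more concrete, here is a minimal Python sketch of a buffer pool that loads pages on demand and evicts the least recently used page when memory is full. It is a toy illustration under our own assumptions, not how Oracle, HANA, or any other specific database implements its cache. The class name `BufferPool`, the dictionary standing in for disk, and the page counts are all hypothetical.

```python
from collections import OrderedDict


class BufferPool:
    """Toy page cache: holds at most `capacity` pages, evicting the least recently used."""

    def __init__(self, disk, capacity):
        self.disk = disk            # hypothetical stand-in for storage: page_id -> contents
        self.capacity = capacity    # how many pages fit in memory
        self.pages = OrderedDict()  # pages currently in memory, oldest use first
        self.hits = 0
        self.misses = 0

    def read(self, page_id):
        if page_id in self.pages:
            # Already in memory: mark as most recently used and return it.
            self.pages.move_to_end(page_id)
            self.hits += 1
            return self.pages[page_id]
        # Not in memory: fetch from storage, then evict if over capacity.
        self.misses += 1
        page = self.disk[page_id]
        self.pages[page_id] = page
        if len(self.pages) > self.capacity:
            self.pages.popitem(last=False)  # drop the least recently used page
        return page


# 1,000 pages on "disk", but room for only 10 in memory.
disk = {page_id: f"rows of page {page_id}" for page_id in range(1000)}
pool = BufferPool(disk, capacity=10)

# A workload that keeps returning to the same five "hot" pages.
for _ in range(100):
    for page_id in range(5):
        pool.read(page_id)
```

In this run the workload touches only 5 of the 1,000 pages, so after the first 5 misses every one of the remaining 495 reads is served from memory, even though 99% of the data never left storage.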

However, we don’t mark down Dan or IFS for quoting Gartner. Even though Gartner adds no value to discussions around databases, and in fact subtracts considerable value from them, they are still widely respected. It should also be mentioned that no vendor can call out Gartner for either being corrupt or not knowing their subject matter. This is because Gartner can retaliate against any vendor that does not show them the “proper deference,” as Gartner has a near-monopoly on vendor ratings.

Now let us see what Dan does with this quote.

“Under this definition, the in-memory column store capabilities of the Oracle 12C Enterprise Edition, which IFS leverages to deliver its in-memory offering, qualifies as a true in-memory solution, but one that recognizes real-life challenges faced in enterprise computing. It contains both a traditional DBMS and an IMDBMS working in parallel and always in sync. It enables an application user to keep all or part of the database in memory, so that columns and tables that are frequently queried by business analytics tools or referenced in ad hoc queries can be kept in memory while other data is stored in a physical disc.”

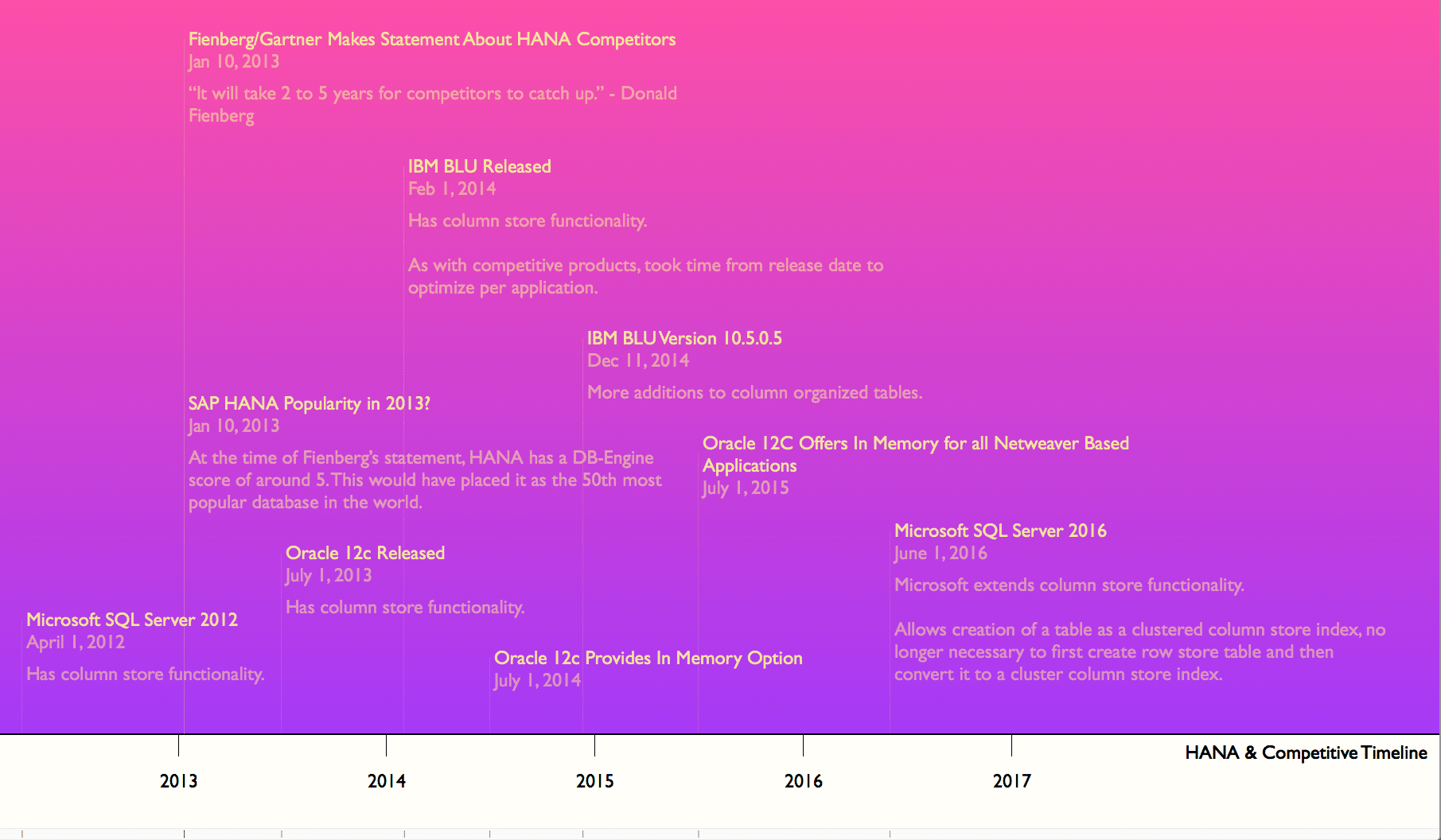

Well, if we can now kick Gartner to the curb, Oracle does have an in-memory capability, and it was added to the Oracle database back in 2013, as the graphic illustrates.

Therefore yes, Oracle provides “in memory” functionality with a column-oriented store.

100% In memory?

“A real-life ERP in-memory application should always be, in a manner of speaking, at least some type of hybrid solution between RAM-based and disc-based data storage. In theory, a pure in-memory computing system will require no disc space or file I/O. This is impractical in the world of ERP since a modern enterprise application may store not only structured data, but unstructured and unwieldy information like photos, technical drawings, video and other materials that are not used for analytical purposes and would consume a great deal of memory. This is one drawback of ERP applications, which by default run the entirety of a transactional database in memory. Meanwhile, the in-memory feature set of IFS Applications, for instance, will give end-users a choice of which data to house on a physical drive and which to store in-memory. Or of course, if they really want to, run the entire database and application in-memory.”

This is all quite true. And here, Dan is directly contradicting Hasso Plattner of SAP. We have been opposing Hasso Plattner on this topic since 2016. Hasso Plattner is wrong. Only a small portion of the overall database needs to be loaded into memory, and which portion that is changes depending upon what is being processed at the time.

The Need for ERP Speed?

“The chief benefit of in-memory computing in ERP is obvious—enhanced processing speed, particularly when dealing with larger data sets and queries of non-indexed tables. Data stored in memory can be accessed hundreds of times faster than would be the case on a hard disc or even flash drives. But also the columnar orientation of the in-memory storage means that it becomes very fast to find a smaller subset of data inside a very large set. In-memory is optimal for what is called “narrow queries”, where a smaller number of columns for a subset of rows is extracted from a very large data set.

This speed is particularly useful when companies are running ad hoc queries of the database underlying their ERP software product, for instance to identify customer orders that conform to specific criteria or determine which customer projects consume a common part.”

This is true.
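The “narrow query” point in the quote can be sketched in a few lines of Python. The example below holds the same hypothetical order data in a row-oriented layout and a column-oriented layout, then sums the quantity for one part. The table, column names, and values are invented for illustration. Python lists do not reproduce real memory or disk layout effects, so this shows only which data each approach has to touch, not actual speed.

```python
# Row-oriented layout: each record keeps all of its fields together.
rows = [
    {
        "order_id": i,
        "customer": f"C{i % 100}",
        "part": f"P{i % 7}",
        "qty": i % 13,
        "notes": "n/a",
    }
    for i in range(10_000)
]

# Column-oriented layout: one list per column, aligned by position.
columns = {name: [row[name] for row in rows] for name in rows[0]}


def qty_for_part_row_store(rows, part):
    # Walks every full record even though only two fields matter.
    return sum(row["qty"] for row in rows if row["part"] == part)


def qty_for_part_column_store(columns, part):
    # Touches only the "part" and "qty" columns; the other three are never read.
    return sum(qty for p, qty in zip(columns["part"], columns["qty"]) if p == part)


row_total = qty_for_part_row_store(rows, "P3")
column_total = qty_for_part_column_store(columns, "P3")
```

Both functions return the same total. The difference is that the column version needs two of the five columns, which is why a columnar store suits queries that pull a few columns from a very large table.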

But it leaves out the fact that if you spend time on ERP system accounts, the ERP system’s performance is rarely the issue. We live in a time of excellent hardware capacity. The processing requirements of ERP systems have not increased very much over the past few decades, while hardware capabilities have increased a great deal.

Secondly, “in memory”/column-oriented solutions primarily speed up analytical workloads. As we covered in the article HANA as a Mismatch for S/4HANA and ERP, ERP systems are mainly transaction processing applications with a few CPU-intensive operations like MRP and DRP. Therefore, they do not benefit much from the analytical processing capabilities of in-memory databases. The vast majority of companies still perform reporting on a specialized data warehouse, where it makes sense to use some “in memory” capabilities. However, that capability does not need to reside within a “Swiss Army Knife” database like Oracle 12c. For example, one could use Redis combined with a row-oriented database.
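As a rough sketch of what pairing an in-memory store with a row-oriented database could look like, the Python below uses the common cache-aside pattern: check the in-memory store first, and fall back to the database on a miss. To keep it self-contained, a plain dictionary stands in for Redis and SQLite stands in for the row-oriented database. The table, key format, and function name are our own assumptions, not anything from IFS, Oracle, or Redis.

```python
import sqlite3

# Row-oriented system of record. SQLite stores tables row by row; ":memory:" is
# used here only so the example is self-contained.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE orders (order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)")
db.executemany(
    "INSERT INTO orders (customer, amount) VALUES (?, ?)",
    [("acme", 100.0), ("acme", 50.0), ("globex", 75.0)],
)
db.commit()

cache = {}                # hypothetical stand-in for a Redis-like key-value store
stats = {"db_reads": 0}   # counts how often we fall through to the database


def total_for_customer(customer):
    key = f"total:{customer}"
    if key in cache:
        # Served from memory; the database is not touched.
        return cache[key]
    # Cache miss: run the aggregate on the row store and remember the result.
    stats["db_reads"] += 1
    (total,) = db.execute(
        "SELECT COALESCE(SUM(amount), 0) FROM orders WHERE customer = ?",
        (customer,),
    ).fetchone()
    cache[key] = total
    return total


first = total_for_customer("acme")   # reads the database
second = total_for_customer("acme")  # answered from the cache
```

A real deployment would also need to expire or invalidate cached totals when new orders arrive, which this sketch leaves out.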

Dan addresses this issue of data warehousing in the following quote.

Real-Time Visibility?

“In order to eliminate the database as a constraint, most business intelligence tools or analytics instead query a copy of the transactional data that is kept separately in a data warehouse. This data is updated periodically, so it does not truly offer real-time visibility. In-memory technology can provide that real-time view of the business, at least when the data is coming from a single source system or application. In-memory technology in itself does not replace the need for transformation and mapping that typically has to happen when performing analysis across data from multiple source systems.”

This is true, but real-time visibility is not particularly important. A real-time pull will usually not tell you much more than a report based upon data that is a day, 12 hours, or 6 hours old. The biggest problem in companies is not data currency, it’s subject matter expertise. I work in forecasting improvement projects. The problem that faces these projects is knowledge of things like forecast error measurement, data storage of different inputs, testing knowledge, how to document, and how to follow a scientific approach. The lack of real-time visibility is not a high-priority issue. Secondly, any specific item can be found in real time from the ERP system. And ERP systems also have more initial reports that are real time.

The Incentives to Add In-Memory to ERP

“The incentives that may drive a company running ERP to adopt in-memory computing are straightforward.

For the enterprise software vendor, though, in-memory computing may be a way to address underlying issues in their application architecture. If an enterprise software product was originally designed in too complex a fashion, the application may have to look in more than a dozen locations in a relational database to satisfy a single query. They may be able to simplify this convoluted model and speed up queries by moving from disc-based to in-memory data storage.”

This is an interesting observation. Our interpretation (although we can’t prove that Dan means this) is that memory can counteract poor application design. Analyzing this article is timely because we just finished the article The Four Hidden Issues with SAP’s BW-EML Benchmark. In that article, we pointed out that the BW-EML benchmark entirely leaves out the quality of the SAP BW application, which is atrocious. We have previously easily beaten it with different software running on a laptop. That is, an intelligent application design can be useful with far fewer resources.

Increasing Sales with In-Memory?

“Promoting an enterprise application that relies entirely on an in-memory database may also be a way for an ERP vendor to derive more revenue from the software sale by pushing customers to purchase a new database rather than the Oracle, Microsoft or IBM databases they would typically otherwise use. For the customer, however, this could mean re-learning and re-training of IT staff to manage a new, and proprietary, in-memory database in addition to the additional license investment for this technology.”

Yeeeeeees! Vendors try to maximize revenues. And indeed, SAP does this. This quote is directly aimed at SAP. SAP has been selling HANA on false claims since HANA was first introduced. Brightwork Research & Analysis has been the most vocal entity calling out SAP on this, while virtually the entirety of the IT media, Gartner, Forrester, and SAP’s massive consulting ecosystem have parroted SAP’s false claims, as we covered in What Is the Difference Between an SAP Consulting Company and a Parrot on HANA?

And Dan is also correct that the costs of transitioning to HANA are huge. We would also add that HANA is far less stable than more mature databases like Oracle or DB2. Brightwork receives no income from any vendor, so we have no reason to take any vendor’s side and simply report what our research has concluded.

Valid Uses for In-Memory: Big Data?

“Analyzing enormous quantities of data while it is in movement requires tremendous computing resources and real-time access to data. Information in a traditional data warehouse will be old and therefore less useful, but continuous queries on the transactional database could lead to performance issues.”

True. Although we would be remiss if we did not mention that companies are often challenged in performing analysis on univariate data. And many benefits of Big Data are conjecture. They presume that looking at many data factors will lead to great insights. The first Big Data bubble was mostly about throwing large amounts of unstructured data into data lakes and saying, “we will look at it later.” Data scientists are having great difficulty showing the forecasted benefits of this combination of Big Data and data science. We have run many of the ML algorithms ourselves and are often unimpressed with the outcomes.

Therefore we see a need for more understanding applied to data analysis rather than a focus on memory.

Valid Uses for In-Memory: In Memory Queries?

“If there is data in an application that is subject to frequent queries for decision support or ad-hoc reporting, it may make sense to move those tables in-memory. Otherwise, these queries could take a while to complete—long enough to affect the user experience. The load on the transactional database could also affect the experience of other users. If you want to summarize a thousand rows out of a million or a billion, or to retrieve a handful of columns in a table for one thousand of a percent of the total data volume, this is one area where a targeted approach to in-memory computing shines.”

Sure. Nothing wrong with that.

In Memory and Transaction Processing?

“Running an entire transactional database in-memory will probably never be optimal, but it is possible. Databases may run faster in-memory by the time there are hundreds of millions of rows in a table. For a very large database with tens or hundreds of thousands of transactions per second, in-memory across the board may be the best way to ensure performance without event loss.

High-volume transactional environments on this scale are rare, however. In most cases, it will still make sense to move only carefully-chosen subsets of a transactional database in-memory. If these critical subsets of the database, cumulatively, are numerous or extensive enough to constitute the majority of the database, it may be easier and make more sense to load the entire database in-memory. But again, these situations will be vanishingly rare.”

Yes, exactly. This is simply back to memory optimization. Perhaps more memory is used, but more memory will generally be used anyway as hardware specifications continually increase.

What Data Gets Moved into Memory?

“A hybrid approach to in-memory, with some data stored in a spinning disc or flash memory environment, makes even more sense when we remember that in a fully functional enterprise application, we are not just talking about tabular data but, often, attached files. The benefit of moving imagery—like the photos an electric utility may take of meters—into memory would be minimal whereas the cost could be high. These data are not queried, do not drive visualizations or business intelligence, and would consume substantial memory resources.”

This is an excellent point that I have never heard brought up before. Many data types make no sense to move into memory. It is good that Dan points this out, along with the specific reason why it makes no sense to do so.

How About a Reasonable Approach to What is Loaded into Memory?

“IFS Applications customers can choose to keep some, all or none of their database in memory. Although our technology supports running the entirety of IFS Applications in memory, we believe that a more focused in-memory approach may be desirable. To help our customers choose the right things to put in memory, we provide an In-Memory Advisor as well as pre-configured In-Memory Acceleration packages for common scenarios in manufacturing, asset and service management.

In essence, at IFS, we have worked hard to package this technology in a way that is accessible enough for middle-market companies, robust enough for the largest global organization, and agile enough to adapt to changing data usage patterns over time.”

This is in significant opposition to SAP’s approach, which is to hype customers on in-memory to get them to buy the exorbitantly priced HANA database, the pricing of which we covered in the article How to Understand S/4HANA and HANA Pricing.

Conclusion

This article receives a 10 out of 10 for accuracy.

The enterprise software market is so filled with promotional information that it is extremely rare for an article to receive a high score from us, much less a perfect score. There is nothing communicated which is inaccurate, and the article is brave for going against the conventional wisdom on in-memory. It is easy to simply write an article telling customers and prospects that whatever the new thing is, it is necessary, but this article has a genuine interest in educating the reader.