Why SAP HANA Has Problems with Transaction Processing

Executive Summary

- HANA has performance problems when supporting transaction processing applications like S/4HANA.

- We cover one possible reason for this.

Introduction

SAP proposed that HANA is optimal for both OLAP and OLTP, and SAP claimed that a specific design allowed for this. However, our analysis shows that HANA is not optimized for both analytics and transaction processing. By pushing the database in the direction of analytics, SAP reduced HANA’s capabilities in transaction processing. Secondly, SAP had to alter its original design because it so misunderstood what primarily column-oriented tables would be able to do and what applications they could support. You will learn not the marketing hype but the reality of HANA’s performance.

Our References for This Article

If you want to see our references for this article and related Brightwork articles, see this link.

Notice of Lack of Financial Bias: We have no financial ties to SAP or any other entity mentioned in this article.

- This is published by a research entity, not some lowbrow entity that is part of the SAP ecosystem.

- Second, no one paid for this article to be written, and it is not pretending to inform you while being rigged to sell you software or consulting services. Unlike nearly every other article you will find from Google on this topic, it has had no input from any company's marketing or sales department. As you are reading this article, consider how rare this is. The vast majority of information on the Internet on SAP is provided by SAP, which is filled with false claims and sleazy consulting companies and SAP consultants who will tell any lie for personal benefit. Furthermore, SAP pays off all IT analysts -- who have the same concern for accuracy as SAP. Not one of these entities will disclose their pro-SAP financial bias to their readers.

HANA’s Design with Respect to Column Oriented Tables

The following quotation from Rolf Paulsen explains this. He begins with a commonly repeated statement about HANA.

“The common quotation listed below.

“HANA combines OLTP and OLAP capabilities, with row store and columnar store in the same box” –

..is misleading at least because it suggests row store belongs to OLTP and columnar store to OLAP in HANA. Unlike products of other vendors, HANA does not provide “hybrid” tables that combine row store and columnar store in parallel. A HANA table is either columnar or row stored but row store tables are the exception for very volatile and fast-changing data. E.g. the size of all row store tables together has a hard limit of 1,945 GB per instance. The sophisticated issue are the transactional operations on the many columnar tables. The price for having data in the columnar form convenient for fast analysis has to be paid on data manipulation. No “row” of a columnar table gets updated, there are only inserts and complex “delta merges” on a short-lived row representation of the columnar data.”

How HANA’s Design Has Changed Over Time (and How the Explanation of HANA Has Changed Even More)

It is important to remember that when HANA was first introduced, it was a 100% column-oriented database. Obviously, with this design, HANA ran into major problems in transaction processing. This was one of the many indications that SAP did not understand what it was doing when it first designed HANA. Our view is that HANA was overly “boxed in” by the original design objectives of Hasso Plattner. This is explained in the following quotation from John Appleby.

“With ERP on HANA, we, of course, don’t need separate row and column stores for transactional and operational reporting data. Plus as Hasso Plattner says, we can use the DR instance for read-only queries for operational reporting.”

That is what SAP thought at this time, but then in SPS08, SAP suddenly added row-oriented tables to HANA. Running an entirely column-oriented database for a transaction processing system never made any sense. Appleby himself describes this change, as we covered in our critique of his article, How Accurate Was John Appleby on HANA SPS08? Appleby's article was written in November, and by June of 2014, seven months later, SAP already had to change its design.

Therefore, what Appleby states here is reversed. HANA’s performance for ERP systems must have been atrocious before SAP added row-oriented stores.

More analysis of this quote can be found in the article John Appleby, Beaten by Chris Eaton in Debate and Required Saving by Hasso Plattner.

Later versions of HANA decreased the percentage of column-oriented tables to roughly 1/3 of the total tables in the database for S/4HANA.

The Oracle Database Design

Oracle’s design is different, which is covered in part by this quote from Oracle.

“The IM column store encodes data in a columnar format: each column is a separate structure. The columns are stored contiguously, which optimizes them for analytic queries. The database buffer cache can modify objects that are also populated in the IM column store. However, the buffer cache stores data in the traditional row format. Data blocks store the rows contiguously, optimizing them for transactions.”
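To make the contrast between the two layouts concrete, here is a minimal Python sketch. It is not Oracle's or HANA's internal format. The sample table, the field names, and the helper functions are all hypothetical, and the count of "structures touched" is only a rough stand-in for real memory or I/O cost. It shows why storing rows contiguously suits a transaction that writes one record, while storing columns contiguously suits a query that aggregates one field.

```python
# Hypothetical illustration only: not Oracle's or HANA's internal format.
ROWS = [
    {"order_id": 1, "customer": "A", "qty": 5, "price": 10.0},
    {"order_id": 2, "customer": "B", "qty": 2, "price": 99.0},
    {"order_id": 3, "customer": "A", "qty": 7, "price": 15.0},
]


def to_columns(rows):
    """Pivot a list of row dicts into one list per column."""
    return {name: [row[name] for row in rows] for name in rows[0]}


def insert_row_layout(rows, record):
    """Row layout: the whole record lands in one place."""
    rows.append(record)
    return 1  # number of structures touched


def insert_column_layout(columns, record):
    """Column layout: every column structure must be extended."""
    for name, value in record.items():
        columns[name].append(value)
    return len(record)  # number of structures touched


def total_qty_row_layout(rows):
    """Analytic query on the row layout: visits every full record."""
    return sum(row["qty"] for row in rows)


def total_qty_column_layout(columns):
    """Analytic query on the column layout: reads a single column."""
    return sum(columns["qty"])


columns = to_columns(ROWS)
new_order = {"order_id": 4, "customer": "C", "qty": 1, "price": 20.0}
row_touches = insert_row_layout(ROWS, dict(new_order))
col_touches = insert_column_layout(columns, new_order)
print(row_touches, col_touches)
print(total_qty_row_layout(ROWS), total_qty_column_layout(columns))
```

In this toy case the insert touches one structure in the row layout and four in the column layout, while the aggregate reads one list in the column layout instead of every record.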

The Issue in the Interaction Between Transaction Processing and Columnar Tables

This part of Rolf’s quote is compelling.

“The sophisticated issue is the transactional operations on the many columnar tables.

The price for having data in the columnar form convenient for fast analysis has to be paid on data manipulation.

No “row” of a columnar table gets updated, there are only inserts and complex “delta merges” on a short-lived row representation of the columnar data.”
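The mechanism Rolf describes can be sketched in a few lines of Python. This is a heavily simplified illustration under our own assumptions, not HANA's implementation: the class `DeltaColumnTable` and its methods are hypothetical, the first column is assumed to be the key, and real systems add compression and concurrency control that are omitted here. The point is only that an "update" never changes the columnar data in place. It is appended to a row-like delta, and a later merge rebuilds the columns.

```python
class DeltaColumnTable:
    """Toy column table: a columnar main store plus an append-only delta."""

    def __init__(self, column_names):
        self.column_names = list(column_names)
        self.key = self.column_names[0]  # assumption: first column is the key
        self.main = {name: [] for name in self.column_names}  # columnar
        self.delta = []  # row-like records, append-only

    def upsert(self, record):
        """An insert or an 'update' is just another append to the delta."""
        self.delta.append(dict(record))

    def read(self, key):
        """The newest delta version wins; otherwise fall back to main."""
        for record in reversed(self.delta):
            if record[self.key] == key:
                return record
        keys = self.main[self.key]
        if key in keys:
            i = keys.index(key)
            return {name: self.main[name][i] for name in self.column_names}
        return None

    def merge(self):
        """Delta merge: rebuild every main column with the delta applied."""
        latest = {}
        for i, key in enumerate(self.main[self.key]):
            latest[key] = {n: self.main[n][i] for n in self.column_names}
        for record in self.delta:
            latest[record[self.key]] = record
        self.main = {
            n: [r[n] for r in latest.values()] for n in self.column_names
        }
        self.delta = []


table = DeltaColumnTable(["order_id", "qty"])
table.upsert({"order_id": 1, "qty": 5})
table.upsert({"order_id": 2, "qty": 3})
table.merge()
table.upsert({"order_id": 1, "qty": 9})  # the "update" of order 1
pending = len(table.delta)
before_merge = table.read(1)
table.merge()
after_merge = table.read(1)
```

Even in this toy version, changing one quantity means an append, a lookup that has to check two places, and eventually a rebuild of every column, which is the cost on data manipulation that the quotation refers to.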

The CPU Consumption Issues of HANA

If we can restate this, the following can be said about Rolf Paulsen’s quotation.

- Rolf describes the case where a transaction updates the columnar tables (let us say HANA is supporting a transaction processing system rather than BW).

- This update is therefore problematic, because the columnar table is not updated in place. Each change that a transaction sends to the database becomes an insert followed by a delta merge.

Results from the Field

Results from the field indicate HANA still has problems with both transactions and running CPU intensive processes like MRP/DRP, as we covered in the article HANA as a Mismatch for S/4HANA and ERP.

The CPU issue is clearly caused by overloading data into memory, which drives significant CPU consumption and often requires a HANA reboot, as we covered in the article How to Understand HANA’s High CPU Consumption. However, the continued transaction processing performance issues could be in part related to the exact problem Rolf brings up in the quote above.

Removing Duplicate Data by Porting an Analytics Database Design to the Non-Analytics Application?

John Appleby and others repeatedly proposed that HANA would eliminate duplicate data (that is, the redundant data between the ERP system and the data warehouse). We analyzed this claim in the article How Accurate Was John Appleby on HANA Replacing BW?

But the “solution” meant turning the ERP system database into something like the database for a data warehouse (if one does not want to use star schemas). Row-oriented databases supported data warehouses quite well, but they used an intermediary structure called the star schema. As a point of comparison, Brightwork Research & Analysis uses an application that creates star schemas in the background without the user seeing anything, and it easily beats the performance of SAP BW. SAP BW requires the configuration of star schemas when BW sits on top of a row-oriented database. Creating star schemas does not need to be as rigorous as it is in SAP’s BW. An application can create the background schemas just from loading a flat file that contains the relationships (as one example).
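As a rough idea of how a star schema can be derived in the background from a flat file, here is a minimal Python sketch. It is not the application mentioned above, whose internals are not described here. The sample data, the column names, and the function `build_star_schema` are hypothetical, and the measure columns are assumed to hold integers.

```python
import csv
import io

# Hypothetical flat file: each line carries the relationships between
# a customer, a product, and a quantity.
FLAT_FILE = """customer,product,qty
Acme,Widget,5
Acme,Gadget,2
Globex,Widget,7
"""


def build_star_schema(flat_text, dimension_columns, measure_columns):
    """Split a flat file into dimension tables and one fact table."""
    # One lookup per dimension: distinct value -> surrogate key.
    dimensions = {name: {} for name in dimension_columns}
    facts = []
    for row in csv.DictReader(io.StringIO(flat_text)):
        fact = {}
        for name in dimension_columns:
            lookup = dimensions[name]
            # Assign the next surrogate key the first time a value is seen.
            fact[name + "_id"] = lookup.setdefault(row[name], len(lookup) + 1)
        for name in measure_columns:
            fact[name] = int(row[name])
        facts.append(fact)
    return dimensions, facts


dimensions, facts = build_star_schema(
    FLAT_FILE, ["customer", "product"], ["qty"]
)
print(dimensions)
print(facts)
```

The user only supplies the flat file. The dimension tables and the fact table that references them by key are produced without any manual schema configuration.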

How SAP Has Been Hiding HANA’s Transaction Processing Performance

John Appleby stated that SAP would no longer use the SD benchmark, as we covered in the article The Hidden Issue with the SD HANA Benchmark. The stated reason was that the benchmark no longer fit how companies used SD (that is, the sales and distribution module of ECC, a transaction processing system). Yet even in 2019, there is still no SD benchmark for HANA published by SAP. Instead, SAP created an analytics benchmark called the BW-EML, which we covered in The Four Hidden Issues with SAP’s BW-EML Benchmark.

All of this strongly indicates that SAP has run the SD benchmark internally but chose not to publish the result, because the performance would match what has been reported to us from the field: HANA simply performs poorly for transaction processing. However, this never stopped SAP from claiming HANA had far better performance than any other database, including Bill McDermott’s claim that HANA performed 100,000x faster than any other database. SAP needs its customers to replace their current database with HANA, both to meet its revenue objectives and to increase account control. Once HANA is installed, SAP will apply indirect access rules to HANA, including requiring second HANA instances and licenses to copy any data out of HANA, as we covered in the article The HANA Police and Indirect Access Charges.

Conclusion

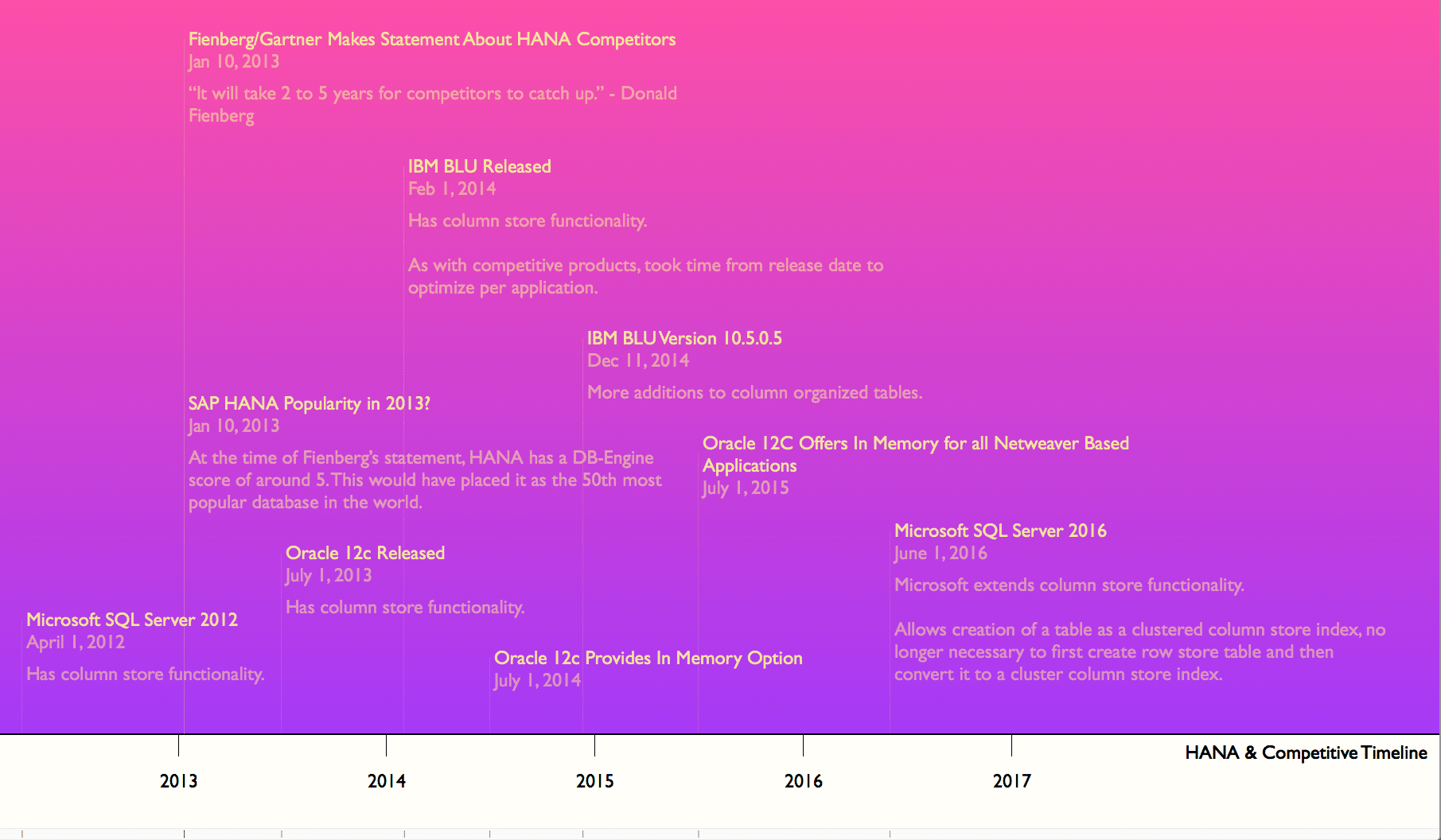

As for adding columnar capabilities to a row-oriented database, all of the primary database vendors could do the same thing. Sybase IQ was around for at least 15 years before HANA and had a design similar to HANA’s, but it was just never prevalent.

Oracle, IBM, and Microsoft (SQL Server) added column stores to their databases after SAP did (and all of these vendors had superior knowledge of memory optimization versus SAP).

But it is not a question of whether one can. It’s a matter of “why would you?” SAP essentially proposed that an analytics database is a fantastic database for a transaction processing system, and furthermore that it was the only vendor to figure this out.